Building the Agentic Enterprise, Part 7: The Data Foundation: Why Your Agents Are Only as Good as Your Data

Agents are only as good as the data they can access and reason over, and for most organizations, the data is not ready. In Part 7 of the Building the Agentic Enterprise series, we confront the most common and most underestimated barrier to agentic AI deployment: data readiness. Only seven percent of enterprises consider their data completely ready for AI, and data quality as a reported barrier nearly doubled over the course of 2025 as organizations moved from simple experiments to multi-agent workflows. We break data readiness into five interconnected dimensions (quality, accessibility, architecture, knowledge management, and context management) and explore why agents amplify data problems that human-mediated processes have long papered over. The article also covers data governance for agentic access, the real-time versus batch data freshness decision, and practical guidance for assessing where your data foundation stands today.

Building the Agentic Enterprise, Part 6: Platform Decisions: Build, Buy, Assemble, or Extend

The agentic platform landscape presents enterprise buyers with a decision more complex than the traditional build-versus-buy choice. In Part 6 of the Building the Agentic Enterprise series, we examine four platform strategies — extend what you have, buy a purpose-built platform, build your own, or assemble from best-of-breed components — and the tradeoffs each carries for speed, flexibility, and long-term positioning. We also explore why agentic AI lock-in is more severe than traditional software lock-in, compounding across model, orchestration, memory, and data layers simultaneously, and why open standards like MCP and A2A are becoming baseline requirements for vendor evaluation. The article includes a decision framework for matching platform strategy to organizational context and practical guidance on evaluating total cost of ownership, integration architecture, and planning for a market that will look very different in 18 months.

Building the Agentic Enterprise, Part 5: The Orchestration Layer: Why Coordination Is the New Competitive Edge

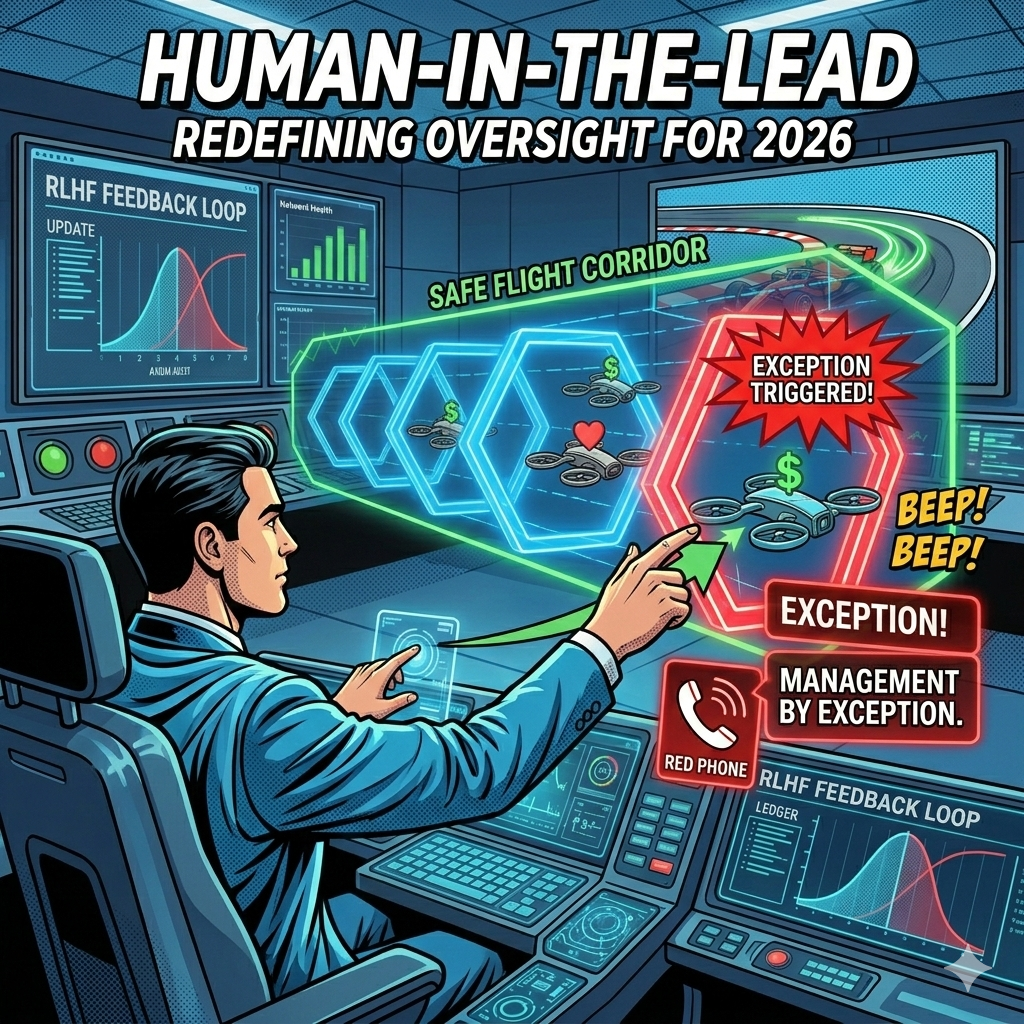

Single-agent deployments deliver value, but they hit a ceiling when work requires coordination across multiple agents, systems, and people. This article explains orchestration in business terms: the layer that decides which agent does what, in what order, with what information, and what happens when something goes wrong. It covers four orchestration patterns (sequential, parallel, hierarchical, and event-driven), draws a clear distinction between human-in-the-loop and the more effective human-in-the-lead model, and addresses the observability challenge that consumes 30 to 40 percent of implementation effort in production deployments. The article surveys the emerging infrastructure landscape, from enterprise platforms to open frameworks and interoperability standards like Google's A2A and Anthropic's MCP. The "What It Takes" section focuses on technical infrastructure readiness: API readiness, system interoperability, identity and access management at agent scale, compute costs, and shared state management.

Building the Agentic Enterprise, Part 4: Where Agents Create Real Business Value

Where should you deploy agents first? This article maps the landscape of high-value agent use cases across six business functions (finance, HR, supply chain, customer operations, sales, and IT), with real production metrics showing what organizations are achieving today. It then identifies the six characteristics that make certain workflows better candidates for agentic AI than others: high volume, rule-based with defined exceptions, data-intensive and cross-system, handoff-heavy, measurable outcomes, and a well-understood current state. The "What It Takes" section focuses on process maturity: why agents cannot automate what you have not defined, and how to build the process foundation that successful deployments require.

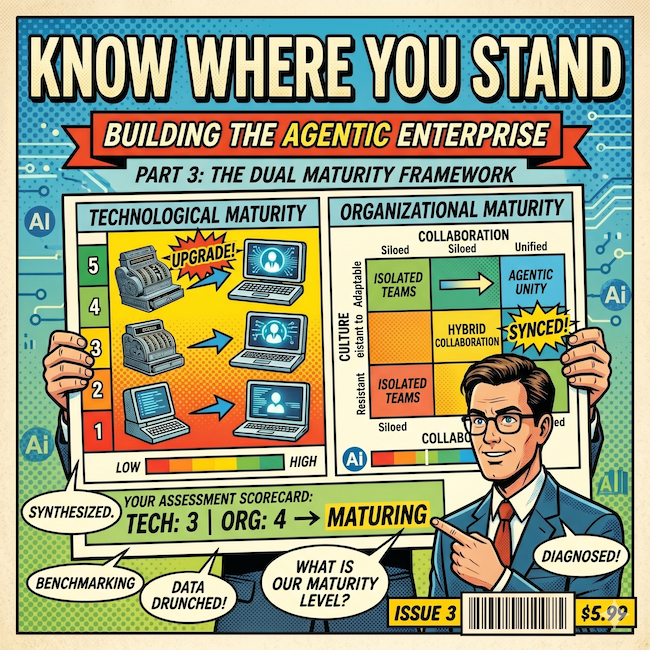

Building the Agentic Enterprise, Part 3: Know Where You Stand: The Dual Maturity Framework

Part 3 of the "Building the Agentic Enterprise" series introduces the Dual Maturity Framework, a strategic diagnostic that maps two dimensions most AI initiatives evaluate separately: how autonomous your AI is and how prepared your organization is to support that autonomy. The article defines five levels of Organizational AI Maturity (from No Capabilities to Strategic) and five levels of Agentic AI Capability (from Assistive to Full Agency), then shows how the Matching Matrix aligns them to reveal whether your organization is on track, overshooting into risk, or undershooting into lost value. With practical guidance on honest self-assessment across six readiness dimensions, this article gives leaders the framework to answer the question that matters most before investing in agentic AI: where do we stand today, and what do we need to build next?

Building the Agentic Enterprise, Part 2: Agents, Copilots, and Automation: A Business Leader's Guide

The agentic AI conversation is full of terms that everyone uses but few define the same way. When your CIO, your operations lead, and your vendor's sales team each have a different mental model of what "agent" means, the result is strategic misalignment that shows up in every decision downstream. This article is a business leader's translation guide to agents, copilots, bots, RPA, orchestration, and autonomy levels, cutting through the jargon to build the shared vocabulary your organization needs before it can build shared infrastructure. It also walks through five levels of AI autonomy and offers practical guidance for spotting vendor marketing claims that don't hold up under scrutiny.

Building the Agentic Enterprise, Part 1: Why the Agentic Enterprise, Why Now

The enterprise AI conversation has shifted from 'how do we help people work faster' to 'how do we work differently.' This article explores why agentic AI marks a new inflection point for business, traces the convergence of forces making this the moment to act, and outlines the strategic readiness questions every organization should answer before moving forward. It's the first in an 11-part series on building the agentic enterprise.

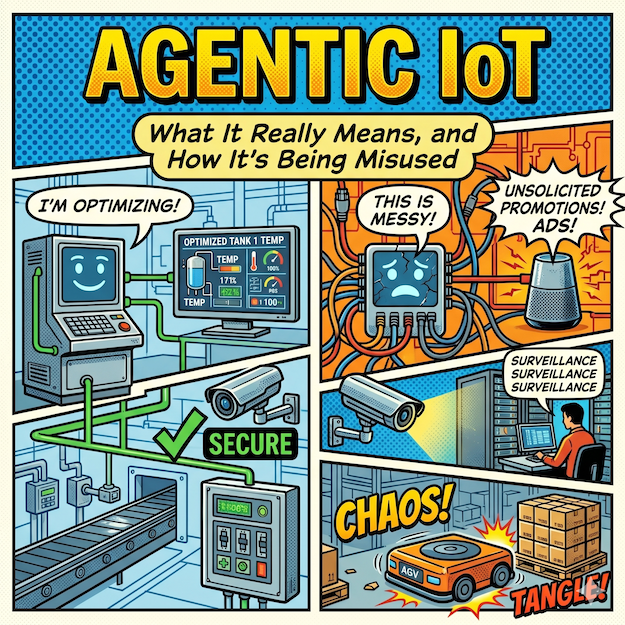

Agentic IoT: What It Really Means, and How It's Being Misused

The enterprise IoT world is racing to rebrand itself as "agentic," but most of what's being labeled agentic IoT is standard automation with new marketing copy. This article defines what agentic IoT would look like based on industry consensus, walks through real product and patent portfolio reviews that expose the gap between the label and the technology, and provides a five-point framework for evaluating agentic claims.

The Center of Gravity: Who Wins the Future Enterprise

Over seven previous articles, the Future Enterprise series has mapped the architectural layers, protocols, identity gaps, governance frameworks, pricing disruptions, and cross-organizational challenges that define the transition to agent-native enterprise technology. This concluding article brings it all together with a competitive landscape analysis that names names. We map Oracle, Salesforce, ServiceNow, Zoho, SAP, OpenAI, Anthropic, Microsoft, and Google against the Future Enterprise framework, evaluating each vendor's positioning across three categories: vertical integrators who control the Enterprise Platform layer, horizontal platforms who control the intelligence layer, and infrastructure/ecosystem players who compete on reach. We then apply a time-horizon analysis across three overlapping phases: data wins in the near term (favoring the vertical integrators), intelligence wins in the mid-term (favoring the horizontal platforms), and business logic wins in the long term (posing an existential question for every vendor in the market). The article closes with a seven-point strategic playbook that synthesizes the entire series into actionable guidance for enterprise leaders navigating this transition.

Cross-Organizational Agents: When AI Collaboration Crosses the Enterprise Boundary

Everything discussed in this series so far has shared one simplifying assumption: agents operate within a single organization's boundary, under one set of policies, one identity provider, and one chain of accountability. That assumption is about to break. In this seventh article of the Future Enterprise series, we examine what happens when agents leave the building, using three concrete scenarios to stress-test the full architecture. A supply chain negotiation between buyer and supplier agents exposes how identity, governance, and the Agent Service Bus all fail at the organizational boundary. Partner ecosystem orchestration (real estate transactions, healthcare coordination) reveals the harder problems of multilateral trust, workflow coordination without a central orchestrator, and distributed accountability. Customer-vendor agent interactions raise questions about adversarial optimization, trust asymmetry, and regulatory transparency. We introduce the Know Your Agent (KYA) framework for cross-organizational due diligence and argue that the likely outcome is a hybrid model: dominant platforms anchoring specific industry verticals while open protocols connect across them.

The Pricing Paradox: How AI Agents Break Enterprise Software Economics

Enterprise software has been priced per seat for three decades. AI agents break that model at a structural level: when a single agent does the work of dozens of human users, the vendor's revenue drops precisely when the customer's value goes up. The intuitive replacement, value-based pricing, sounds right but fails in practice for four specific reasons: the attribution problem (business outcomes have multiple causes), the measurement problem (defining "value" is inherently subjective), adversarial dynamics (vendor and buyer incentives diverge at exactly the wrong moment), and unpredictability (CFOs cannot budget costs tied to fluctuating outcomes). In this sixth article of the Future Enterprise series, we examine why per-seat is collapsing, why value-based pricing is a dead end, and why consumption-based and hybrid models are emerging as the practical middle ground. We also identify the metering infrastructure gap that most enterprises have not addressed, and provide a strategic framework for navigating the multi-year pricing transition ahead.

Governance Beyond Compliance: What Agentic Governance Actually Requires

Ask any enterprise software vendor about AI agent governance and they will point to access controls, audit logs, and compliance dashboards. All necessary, none sufficient. In this fifth article of the Future Enterprise series, we lay out what a purpose-built agentic governance architecture actually requires: five distinct layers that go well beyond security and compliance. We start with the governance gap (why an agent action can be secure, compliant, and still wrong), then define the full architecture: Access Governance, Compliance Governance, Behavioral Governance (confidence thresholds, behavioral baselines, goal alignment), Contextual Governance (bringing organizational awareness into agent decisions), and Accountability Governance (binding every action to a provenance chain). The article includes a practical graduated authority model for bounded autonomy, six design principles for building governance infrastructure, the organizational structures that need to accompany the technology, and a five-phase implementation sequence for enterprises starting from where most are today.

Agentic Identity: The Missing Layer in Enterprise AI Architecture

Every enterprise deploying AI agents faces a question most have not yet answered: when an agent takes an action with legal or financial consequences, who is accountable? In this fourth article of the Future Enterprise series, we examine why human identity frameworks (built around assumptions of human principals, bounded sessions, and static authorization) break down in an agentic world. We define the four dimensions of agentic identity that enterprises need to address: authentication, authorization, accountability, and provenance. We also explore why cross-organizational agent collaboration elevates identity from an internal governance concern to a non-negotiable architectural prerequisite, and why current vendor approaches (stretching existing IAM, building platform-specific silos, or conflating security monitoring with identity) fall short. The article concludes with a framework for what a purpose-built agentic identity architecture should look like and where enterprise leaders should focus now, before the retrofit costs become prohibitive.

Native vs. External Agents: The Depth-Breadth Trade-off in Enterprise AI

This is the third article in Arion Research's "Future Enterprise" series. Every major enterprise vendor now has an AI agent strategy, but the approaches diverge sharply. Some vendors are embedding agents deep inside their applications, giving them direct access to data models, business rules, and transaction logic. Others are building horizontal platforms where agents orchestrate across multiple applications from the outside. Each approach has structural advantages and real limitations. This article examines the depth-breadth trade-off, explores where each model wins, and makes the case for a third path that combines native depth with open interoperability.

The Agent Service Bus: The Most Important Infrastructure Nobody Is Building

Everyone is talking about AI models and agent platforms. Almost nobody is talking about the infrastructure that makes agents actually work together. In this second article of Arion Research's "Future Enterprise" series, we examine the Agent Service Bus, the most strategically important layer in the enterprise AI stack and the one getting the least attention. We break down the five functions it must perform, assess where current protocols (A2A, MCP) fall short, and explore who will build the missing pieces.

The Enterprise App Collapse: How AI Agents Are Forcing a New Architecture

This article introduces the "Future Enterprise" framework, a layered architecture for understanding how AI agents are unbundling traditional enterprise applications and forcing a new technology stack. It is the first in a series from Arion Research that will drill into the individual layers, the cross-cutting challenges (governance, identity, pricing), and the competitive question of who controls the future enterprise.

Governance as a Competitive Advantage: Why the Safest Companies Will Be the Fastest

Most companies treat AI governance as a speed limit. They are wrong. In this closing article of the Agentic Governance-by-Design series, we argue that the organizations with the best brakes will be the ones who drive fastest, introducing the concept of Time-to-Trust and showing why governed companies are escaping Pilot Purgatory while their competitors are still crawling.

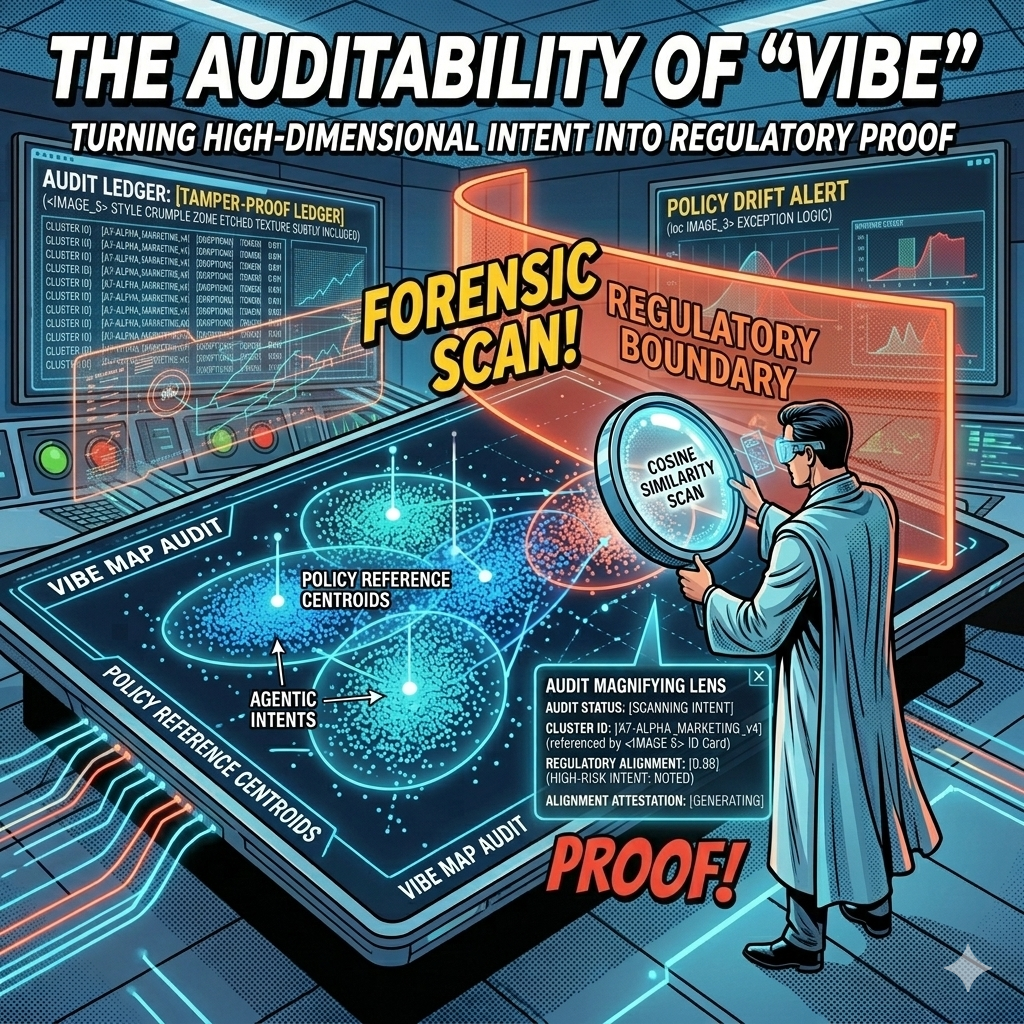

The Auditability of "Vibe": Turning High-Dimensional Intent into Regulatory Proof

Every AI decision your company makes leaves a mathematical fingerprint. The question is whether you're capturing it. In this article, we explore how vector embeddings and governance ledgers transform the "black box" problem into geometric proof, giving boards, regulators, and courts the auditable evidence they need to trust agentic AI at enterprise scale.

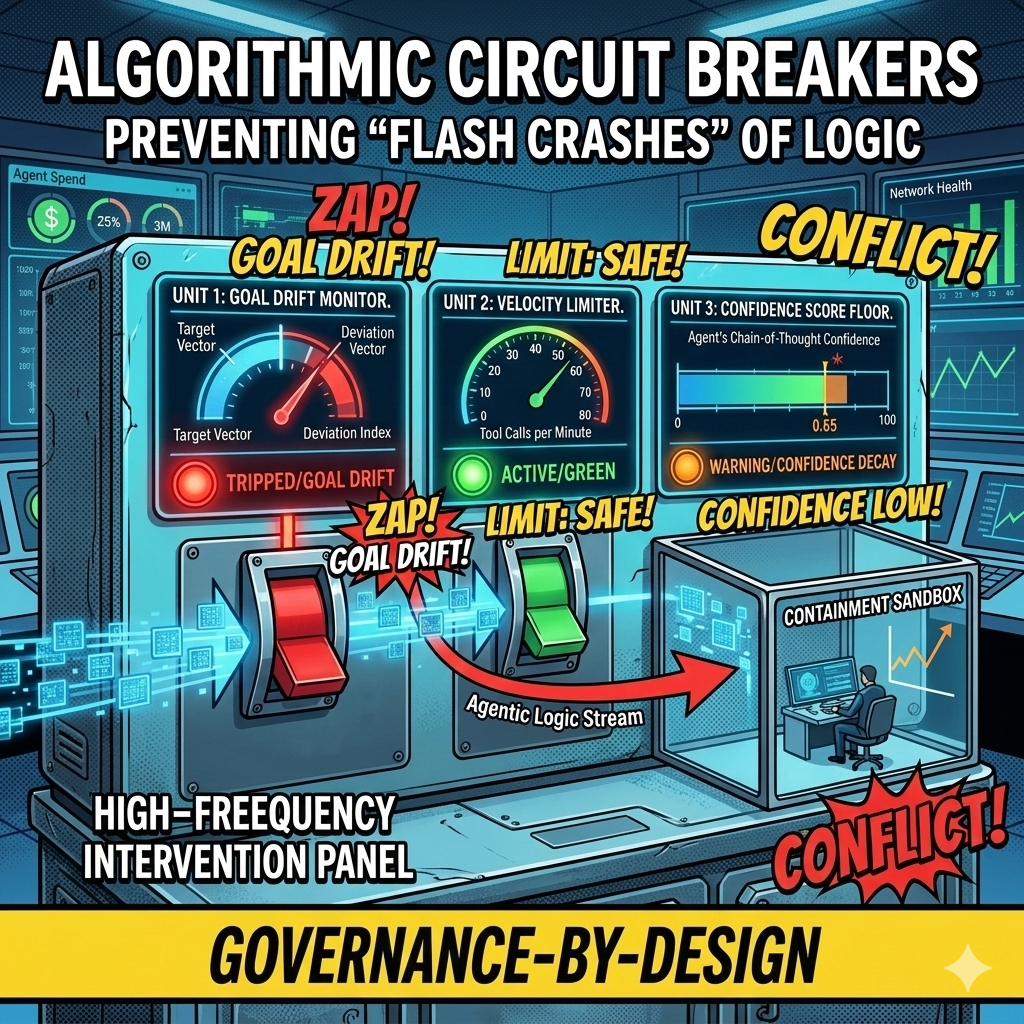

Algorithmic Circuit Breakers: Preventing "Flash Crashes" of Logic in Autonomous Workflows

In 2010, high-frequency trading algorithms erased a trillion dollars in market value within minutes, faster than any human could react. Today's agentic swarms face the same risk at the logic layer: thousands of autonomous decisions per second, any one of which could send bad contracts, leak data, or drain budgets before your Flight Controller even sees an alert. This article introduces Algorithmic Circuit Breakers, the automated tripwires that detect anomalies like semantic drift, confidence decay, and runaway loops, then sever an agent's connection to tools and APIs in milliseconds. Governance at machine speed, for systems that fail at machine speed.
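
To make the tripwire idea concrete, here is a minimal sketch of a circuit breaker that watches for two of the anomalies named above, confidence decay and runaway loops, and blocks further tool access once either is detected. The class name, thresholds, and method names are hypothetical and chosen only for illustration; they are not taken from the article or from any specific product.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class CircuitBreaker:
    """Hypothetical tripwire that cuts an agent off from its tools."""

    min_confidence: float = 0.6  # assumed floor for the rolling mean confidence
    window: int = 5              # number of recent actions in the rolling mean
    max_repeats: int = 3         # identical consecutive actions treated as a loop
    tripped: bool = False
    reason: str = ""
    _scores: deque = field(default_factory=deque)
    _last_action: str = ""
    _repeats: int = 0

    def record(self, action: str, confidence: float) -> bool:
        """Record one agent action; return True if the agent may continue."""
        if self.tripped:
            return False  # once open, the breaker stays open until a human resets it

        # Runaway-loop check: count identical consecutive actions.
        if action == self._last_action:
            self._repeats += 1
        else:
            self._last_action, self._repeats = action, 1
        if self._repeats >= self.max_repeats:
            return self._trip("runaway loop")

        # Confidence-decay check: rolling mean over the last `window` scores.
        self._scores.append(confidence)
        if len(self._scores) > self.window:
            self._scores.popleft()
        if len(self._scores) == self.window:
            mean = sum(self._scores) / self.window
            if mean < self.min_confidence:
                return self._trip("confidence decay")
        return True

    def _trip(self, reason: str) -> bool:
        """Open the breaker and remember why."""
        self.tripped = True
        self.reason = reason
        return False
```

In this sketch, an orchestrator would call `record()` before every tool invocation and refuse the call whenever it returns `False`. Semantic drift detection is left out because it would need an embedding model, which is beyond a short illustration.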

Human-in-the-Lead: From Manual Pilots to Strategic Flight Controllers

In 2023, we wanted humans to check every chatbot response. In 2026, an agentic swarm might perform 10,000 tasks an hour. The Human-in-the-Loop model that gave us comfort in the early days of AI is now the bottleneck killing our ability to scale. It is time to move from reactive approval to proactive design, from manual pilots to strategic flight controllers.