Depth Over Breadth: Why General AI Is Stalling and Vertical AI Is Booming

The Plateau of "Good Enough"

The magic of 2023 and 2024 was undeniable. Large language models burst onto the scene, capable of writing poetry, coding basic websites, and holding surprisingly coherent conversations. For a moment, it seemed like artificial general intelligence was just around the corner.

But then organizations started asking harder questions. Could these models reliably diagnose a rare disease? Navigate complex supply chain compliance? Draft a legally binding contract that accounted for jurisdiction-specific regulations?

The answer was a resounding "not yet."

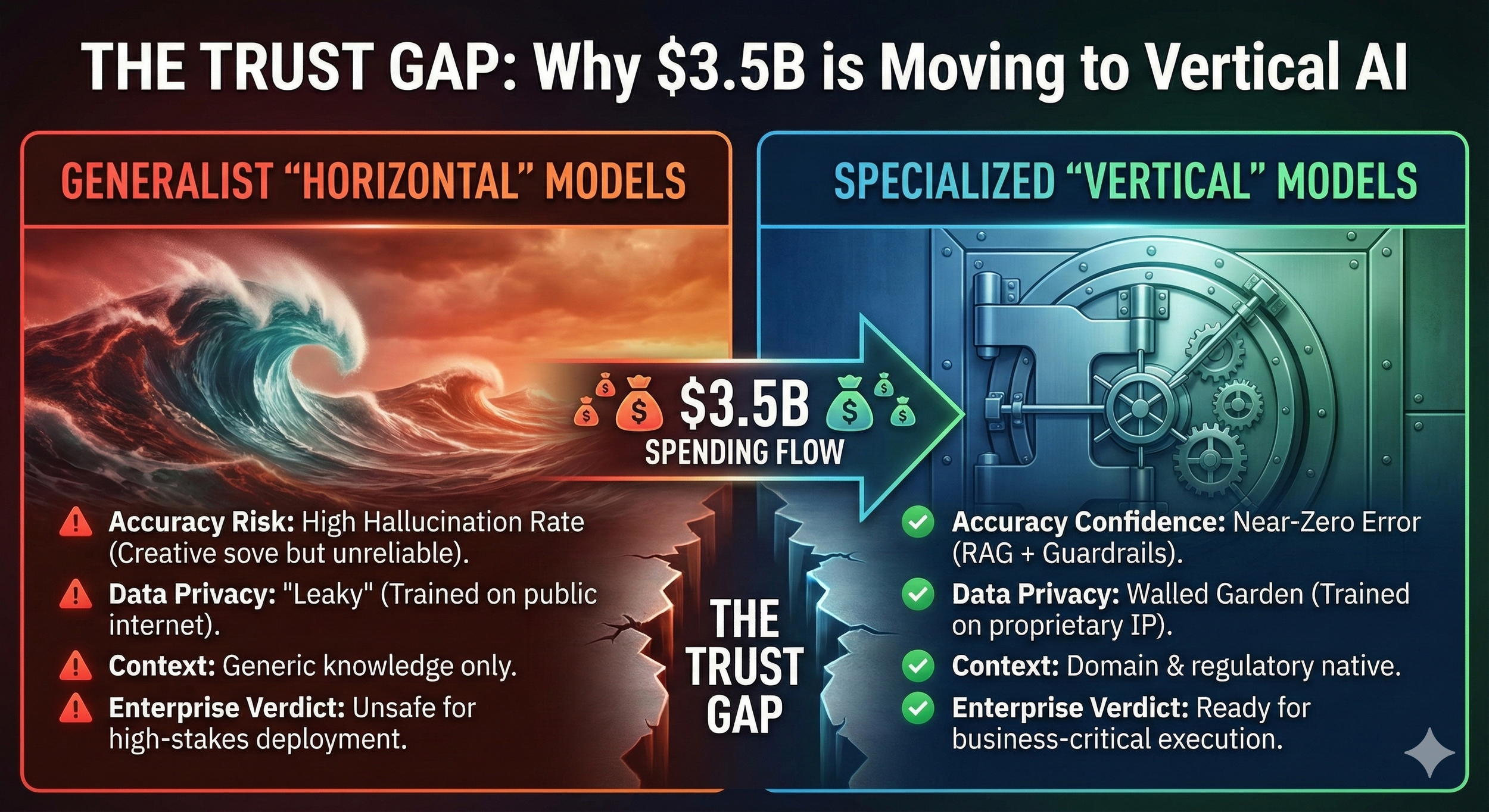

The shift happening now is profound. Businesses are no longer impressed by conversation. They demand execution. And in 2025, the market is voting with its wallet: $3.5 billion in specialized AI spending. This isn't hype or speculative investment. This is a capital allocation shift that signals where real business value gets unlocked.

The thesis is straightforward: Horizontal AI is the operating system. Vertical AI is the application layer.

The Problem with Horizontal AI: The "Jack of All Trades" Trap

General models like GPT-4 and Gemini are trained on a broad cross-section of the internet. This gives them remarkable breadth, but it also creates critical limitations when enterprises need depth.

Hallucinations in High Stakes

A 95% accuracy rate works fine for drafting an email. It becomes fatal when you're reviewing a legal contract or making a medical diagnosis. The difference between "mostly right" and "always right" isn't academic in regulated industries. It's the difference between deployment and liability.

Lack of Context

A general model doesn't know your company's specific legacy codebase. It can't navigate the nuances of a new SEC regulation that dropped last week. It doesn't understand that in your organization, "complete" means something different than it does in standard project management vocabulary.

Data Privacy

Organizations remain wary of piping proprietary data into public, generalist models. The question isn't whether the model is impressive. The question is whether you trust it with your competitive advantage.

The Three Pillars of the Vertical Case

Where is that $3.5 billion actually going? Three core advantages explain why enterprises are choosing depth over breadth.

The Data Moat (Depth)

Vertical AI is trained on proprietary, scarce data that general models simply cannot access. Oil and gas companies are building models on 20 years of seismic data. Healthcare organizations are training on annotated pathology slides that took decades to accumulate.

This creates an impenetrable competitive advantage. General models can't compete because they don't have the training dataset. The data moat becomes the business moat.

Regulatory & Compliance (Trust)

Vertical models can be hard-coded with guardrails specific to industries. HIPAA compliance for healthcare. FINRA regulations for finance. These aren't features you can bolt on after the fact. They're baked into the architecture.

This lowers the barrier to adoption in regulated industries. IT and legal teams can actually say "yes" because the compliance framework is already built in.

Workflow Integration (Utility)

Horizontal AI typically offers a chat interface. Vertical AI is increasingly agentic. It performs actions.

Instead of "tell me about this insurance claim," the vertical model reviews the claim, checks it against policy X, and approves the payout. It moves from system of record to system of action.

Industry Spotlights: The $3.5B Breakdown

Healthcare: From "Wellness Advice" to Clinical Safety

General LLMs are too risky for direct patient interaction. The liability exposure is simply too high. Vertical AI is being deployed with strict clinical safety supervisors built into the architecture.

Hippocratic AI has deployed specialized agents like "Rachel" for chronic care management. Unlike a generic chatbot, these agents have specific escalation protocols. If a patient slurs their speech or mentions a conflicting medication, the AI instantly flags a human nurse. There's no probabilistic judgment call. The guardrails are absolute.
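
To make the idea of a hard escalation rule concrete, here is a minimal Python sketch. It is not Hippocratic AI's implementation. The signal names, the `CallSignals` type, the `escalation_reasons` function, and the tiny medication table are all hypothetical, chosen only to show a deterministic check that runs outside the model.

```python
from dataclasses import dataclass, field

# Hypothetical medication pairs that warrant a nurse review when mentioned together.
# Illustrative only; a real system would rely on a maintained clinical database.
REVIEW_PAIRS = {frozenset({"warfarin", "aspirin"})}


@dataclass
class CallSignals:
    """Signals extracted from one patient call (all field names are assumptions)."""
    slurred_speech: bool = False
    mentioned_medications: set[str] = field(default_factory=set)


def escalation_reasons(signals: CallSignals) -> list[str]:
    """Return every hard rule that fired; a non-empty list means 'flag a human nurse'."""
    reasons = []
    if signals.slurred_speech:
        reasons.append("slurred speech detected")
    meds = {m.lower() for m in signals.mentioned_medications}
    for pair in REVIEW_PAIRS:
        if pair <= meds:  # both medications in the pair were mentioned
            reasons.append("conflicting medications: " + ", ".join(sorted(pair)))
    return reasons
```

The design choice worth noticing is that the rule is plain code, not a prompt: the model can extract the signals, but it does not get a vote on whether the nurse is paged.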

Aidoc is now standard in many radiology departments, running in the background to analyze CT scans for intracranial hemorrhages or pulmonary embolisms. It prioritizes life-threatening cases for radiologists to review first. The ROI isn't measured in cost savings. It's measured in lives saved through faster triage.

In healthcare, vertical AI isn't replacing the doctor. It's becoming the always-on resident that never sleeps.

Legal: The End of the "Billable Hour" Model?

Law firms are moving from experimenting with ChatGPT to deploying walled-garden models trained on centuries of case law and their own private archives.

Harvey, backed by the OpenAI Startup Fund, has partnered with major law firms. The platform has effectively "read" all case law in existence. It doesn't just write a contract. It drafts specific clauses based on the jurisdiction's latest regulatory changes. The output isn't a suggestion. It's a compliance-ready document.

Clio's "Vincent" AI reportedly increases research productivity by 38% by ensuring every output is cited with verifiable case law. This eliminates the hallucination risk that plagues general models. When the AI cites a precedent, you can trust the citation is real.

Legal AI is moving from drafting helper to compliance guarantor.

Manufacturing: The "Acoustic" Revolution

The frontier in manufacturing AI isn't visual inspection anymore. It's listening to machines.

Predictive maintenance models are now trained on the specific acoustic and vibration signatures of rotating machinery like turbines and pumps. AI detects rising energy at bearing pass frequencies, the characteristic vibration tones of a developing bearing defect, which can signal that a failure is weeks away. This allows maintenance teams to replace a $50 part during a lunch break rather than suffering a $2M line shutdown.
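
For readers who want to see what a bearing pass frequency looks like in practice, the sketch below computes the outer-race ball pass frequency (BPFO) from the standard bearing kinematics formula and measures how much vibration energy sits at that frequency. This is plain spectral analysis, not a learned model; a trained system would consume features like this one. The bearing geometry, the alarm threshold, and the synthetic signal are assumptions made up for illustration.

```python
import math


def bpfo(n_balls: int, shaft_hz: float, ball_d: float, pitch_d: float,
         contact_angle_rad: float = 0.0) -> float:
    """Ball pass frequency, outer race: (n/2) * f_shaft * (1 - (d/D) * cos(angle))."""
    return (n_balls / 2) * shaft_hz * (1 - (ball_d / pitch_d) * math.cos(contact_angle_rad))


def amplitude_at(samples: list[float], sample_rate: float, freq_hz: float) -> float:
    """Amplitude of the sinusoidal component at freq_hz (a single-bin DFT)."""
    n = len(samples)
    step = 2 * math.pi * freq_hz / sample_rate
    re = sum(x * math.cos(step * i) for i, x in enumerate(samples))
    im = sum(x * math.sin(step * i) for i, x in enumerate(samples))
    return 2 * math.hypot(re, im) / n


def bearing_alarm(samples: list[float], sample_rate: float,
                  fault_hz: float, threshold: float) -> bool:
    """True when energy at the fault frequency exceeds a (hypothetical) threshold."""
    return amplitude_at(samples, sample_rate, fault_hz) > threshold


def synthetic_vibration(sample_rate: int, seconds: float,
                        tones: dict[float, float]) -> list[float]:
    """Sum of sine tones {frequency_hz: amplitude}; a stand-in for real sensor data."""
    n = int(sample_rate * seconds)
    return [sum(a * math.sin(2 * math.pi * f * i / sample_rate) for f, a in tones.items())
            for i in range(n)]
```

With an assumed 8-ball bearing on a 25 Hz shaft and a ball-to-pitch diameter ratio of 0.2, `bpfo` gives 80 Hz. A synthetic signal with a faint 0.05-amplitude tone at 80 Hz, buried under a 1.0-amplitude shaft tone, still trips `bearing_alarm` at a threshold of 0.01, while the same signal without the fault tone does not.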

Defect detection on CNC mills is now automated, with AI monitoring thermal behavior and tool wear in real time. The system compensates before a part falls out of tolerance.

Manufacturing AI has moved from identifying defects to preventing them.

Finance: The "Agentic" Banker

Banks are done with FAQ chatbots. They're spending on agentic AI that can take action.

Fintechs are deploying AI that autonomously reconciles complex transactions. In construction finance, AI agents now review subcontractor invoices against project completion benchmarks, a task that previously required hours of human cross-referencing.

Fraud detection has evolved from static rules to dynamic behavioral modeling. New vertical models understand the specific transaction fingerprint of a single user. They flag anomalies like a transfer made in a session whose mouse movement or login speed doesn't match the user's typical pattern. These are signals that general models would miss entirely.
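
The simplest version of a per-user behavioral baseline is a z-score: how many standard deviations does this session sit from the user's own history? The sketch below is a deliberately small stand-in for the behavioral models described above, not how any particular vendor does it. The feature (login duration in seconds) and the threshold of 3.0 are assumptions.

```python
from statistics import mean, stdev


def z_score(history: list[float], observed: float) -> float:
    """Distance of `observed` from the user's own history, in standard deviations.

    `history` needs at least two values, because a spread cannot be
    estimated from a single observation.
    """
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Perfectly constant history: any deviation at all is maximally unusual.
        return 0.0 if observed == mu else float("inf")
    return (observed - mu) / sigma


def is_anomalous(history: list[float], observed: float, threshold: float = 3.0) -> bool:
    """Flag a session whose behavior is far outside this user's normal range."""
    return abs(z_score(history, observed)) > threshold
```

For a user whose logins usually take about five seconds, a 0.4-second login (typical of a script, not a person) lands far outside the baseline and is flagged, while a 5.5-second login is not. The baseline is the individual user, not the population, which is what makes the signal specific.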

Finance AI is shifting from system of record to system of action.

The Future: The "Governed Vertical Mesh"

From "God Mode" to "Team of Rivals"

We're moving away from the idea of a single, omniscient model solving everything. The fantasy of GPT-6 as a universal problem-solver is fading.

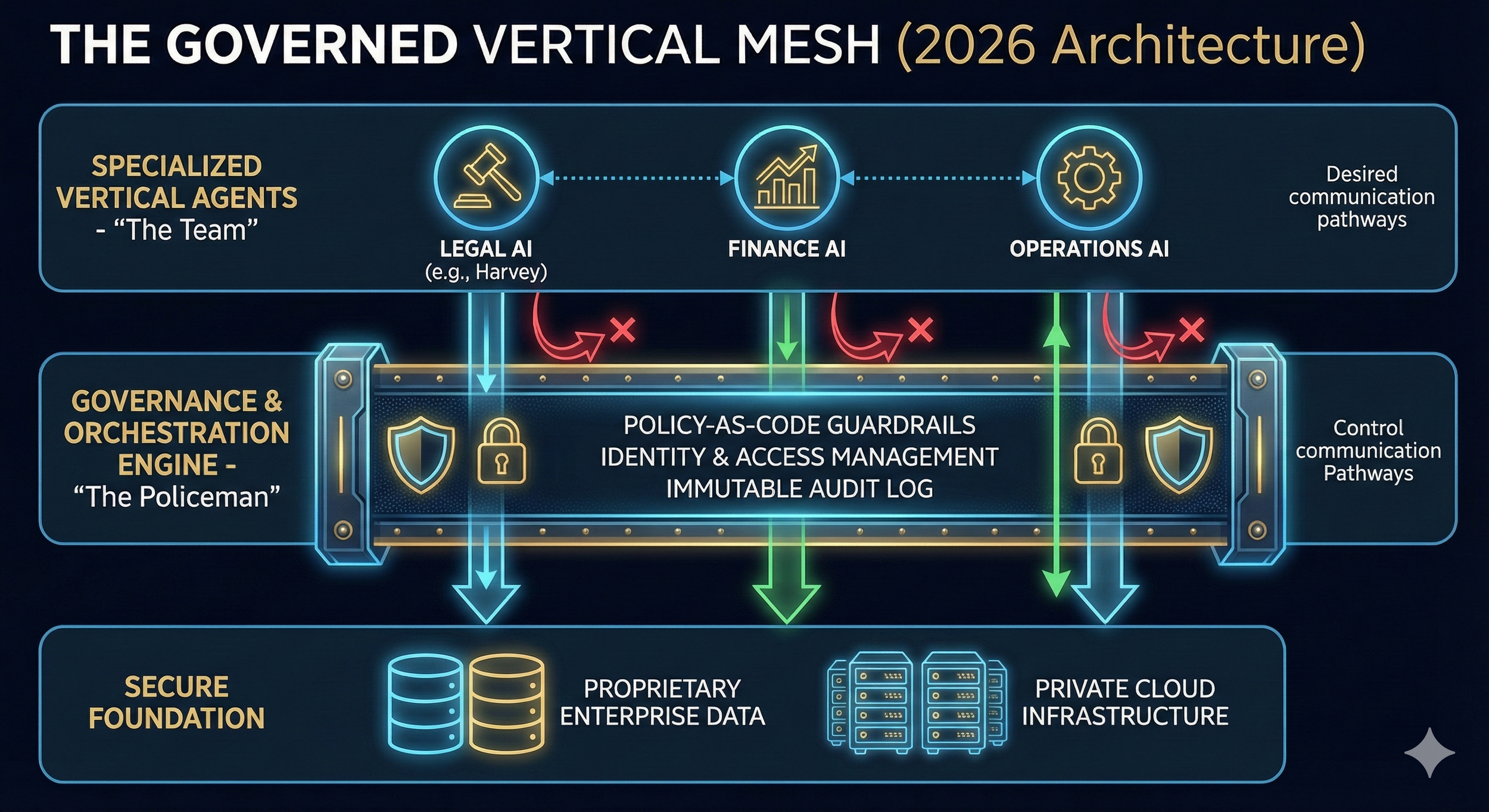

The enterprise architecture of 2026 will look like a corporate org chart. You'll have a Legal Agent (Harvey), a Coding Agent (GitHub Copilot), and a Supply Chain Agent (a specialized vertical model). These agents will need to talk to each other to complete complex workflows.

The Sales Agent closes a deal, which triggers the Legal Agent to draft the contract, which triggers the Finance Agent to issue the invoice. This is the mesh.
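
The Sales-to-Legal-to-Finance chain can be sketched as a tiny event bus. This is a toy, in-process illustration under obvious assumptions: the `Mesh` class, the event names, and the agent functions are all hypothetical, and real agents would be separate services rather than local functions.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class Mesh:
    """A toy in-process event bus standing in for the agent mesh."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Handler]] = defaultdict(list)
        self.log: list[str] = []  # every event published, in order

    def subscribe(self, event: str, handler: Handler) -> None:
        self._handlers[event].append(handler)

    def publish(self, event: str, payload: dict) -> None:
        self.log.append(event)
        for handler in self._handlers[event]:
            handler(payload)


def build_mesh() -> Mesh:
    """Wire a hypothetical Legal Agent and Finance Agent onto the bus."""
    mesh = Mesh()

    def legal_agent(deal: dict) -> None:
        # A closed deal triggers contract drafting.
        contract = {**deal, "contract_id": f"C-{deal['deal_id']}"}
        mesh.publish("contract_drafted", contract)

    def finance_agent(contract: dict) -> None:
        # A drafted contract triggers invoicing.
        invoice = {**contract, "invoice_id": f"INV-{contract['deal_id']}"}
        mesh.publish("invoice_issued", invoice)

    mesh.subscribe("deal_closed", legal_agent)
    mesh.subscribe("contract_drafted", finance_agent)
    return mesh
```

Publishing a single `deal_closed` event on the result of `build_mesh()` cascades through both agents. Notice what the sketch lacks: nothing checks whether an agent is allowed to publish or subscribe to a given event.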

The Challenge: The "Wild West" of Agent Interoperability

Without governance, a mesh is just chaos.

If the Marketing AI asks the HR AI for employee salaries to optimize a campaign, does the HR AI say yes? In a standard API call, it might. The technical connection works. But the policy violation is catastrophic.

Current orchestration tools focus on connecting pipes. They don't police them.

The Solution: Governance by Design

We must treat AI agents like employees, not software. This requires a constitutional layer.

Zero Trust for Agents

Just because the Sales Agent can call the Database Agent doesn't mean it has permission to see personally identifiable information. Identity and access management for AI is non-negotiable.

Least Privilege Access

Agents should only access the data strictly necessary for the specific task at hand. Nothing more.
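
In code, zero trust and least privilege come down to a deny-by-default check keyed on who is asking, for what, and why. The sketch below is one minimal way to express that. The agent names, resource names, task names, and the `GRANTS` table are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    """Permission for one agent to touch one resource for one specific task."""
    agent: str
    resource: str
    task: str


# Hypothetical allow-list. Anything not listed here is denied.
GRANTS = frozenset({
    Grant("hr_agent", "employee_salaries", "payroll_run"),
    Grant("sales_agent", "customer_contacts", "send_quote"),
})


def is_allowed(agent: str, resource: str, task: str) -> bool:
    """Deny by default: access requires an exact (agent, resource, task) grant."""
    return Grant(agent, resource, task) in GRANTS
```

Under these grants, a Marketing agent asking for `employee_salaries` to optimize a campaign is refused, and so is the HR agent if it requests salaries for any task other than `payroll_run`. Tying the grant to the task, not just the agent, is what turns access control into least privilege.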

Policy-as-Code

Decoding "Policy-as-Code": Governing the Digital Employee

In the past, corporate governance meant a 200-page employee handbook (a PDF) that human employees were supposed to read and follow. If a human sales rep offered an unauthorized 25% discount, a manager would catch it later and reprimand them.

AI agents do not read employee handbooks.

"Policy-as-Code" is the critical shift from written guidelines for humans to programmable constraints for software. It means taking your corporate rules (legal compliance, discount limits, data access restrictions) and translating them into hard-coded digital guardrails that the AI cannot bypass.

In a "Policy-as-Code" environment, the AI Sales Agent isn't just told not to offer a 25% discount; it is technically incapable of sending that email without triggering an automatic approval workflow. The point isn't to ask the AI to behave; it is to engineer the environment so that it has no choice.

Governance cannot be a PDF policy document that humans read. It must be hard-coded into the orchestration layer.

Imagine a guardrail layer that intercepts the message between the Sales AI and the client. If the Sales AI promises a discount greater than 15%, the governance layer blocks the message and reroutes it to a human manager for approval. Automatically.
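
Here is roughly what that interception could look like. Treat it as a sketch under stated assumptions: the `review_outbound` function and `Decision` type are hypothetical, and scanning free text for percentages is a crude stand-in. A production guardrail would more likely validate a structured offer field than parse prose.

```python
import re
from dataclasses import dataclass

MAX_DISCOUNT_PERCENT = 15.0  # the threshold from the example above

# Matches numbers followed by a percent sign, e.g. "25%" or "12.5 %".
_PERCENT = re.compile(r"(\d+(?:\.\d+)?)\s*%")


@dataclass
class Decision:
    action: str  # "send" or "needs_approval"
    reason: str


def review_outbound(message: str) -> Decision:
    """Gate an outbound sales message before it reaches the client.

    Assumption: every percentage in the message is treated as a discount,
    which is conservative and may over-block.
    """
    percents = [float(p) for p in _PERCENT.findall(message)]
    largest = max(percents, default=0.0)
    if largest > MAX_DISCOUNT_PERCENT:
        return Decision("needs_approval",
                        f"{largest:g}% exceeds the {MAX_DISCOUNT_PERCENT:g}% limit")
    return Decision("send", "within discount policy")
```

The check lives in the orchestration layer, outside the Sales AI. The model never sees the rule and cannot talk its way around it; a message either passes the gate or lands in a manager's queue.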

The "Black Box" Recorder (Auditability)

When three different vertical AIs collaborate to make a decision, who is liable?

The governed mesh requires an immutable audit trail that logs not just the outcome, but the negotiation between agents. "Why was this loan denied? Because the Risk Agent overruled the Sales Agent at timestamp 12:01:03."
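
One common way to approximate an immutable trail in software is a hash chain: each entry stores the hash of the one before it, so any later edit breaks the chain. The sketch below shows the idea with a hypothetical `AuditLog` class. Strictly speaking, it makes tampering detectable, not impossible; true immutability also needs write-once storage or an external anchor.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Append-only, hash-chained log of agent decisions (illustrative only)."""

    def __init__(self) -> None:
        self._entries: list[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        # Canonical JSON so the same content always hashes the same way.
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def append(self, timestamp: str, agent: str, action: str, detail: str) -> dict:
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        body = {"timestamp": timestamp, "agent": agent, "action": action,
                "detail": detail, "prev_hash": prev_hash}
        entry = {**body, "hash": self._digest(body)}
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and link; False means the log was altered."""
        prev_hash = GENESIS_HASH
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(body):
                return False
            prev_hash = entry["hash"]
        return True
```

Logging the Sales Agent's recommendation and then the Risk Agent's override as two chained entries preserves the negotiation, not just the outcome. If anyone later rewrites the first entry, `verify()` returns `False`.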

The CIO's Mandate: Architect vs. Purchaser

The role of the CIO is shifting from buying SaaS licenses to architecting the mesh.

The $3.5 billion spent in 2025 creates the specialists. The trillion-dollar opportunity in 2026 lies in the orchestration and governance layer: the software that allows these specialists to work together safely, legally, and effectively.

That's where the real value gets created.