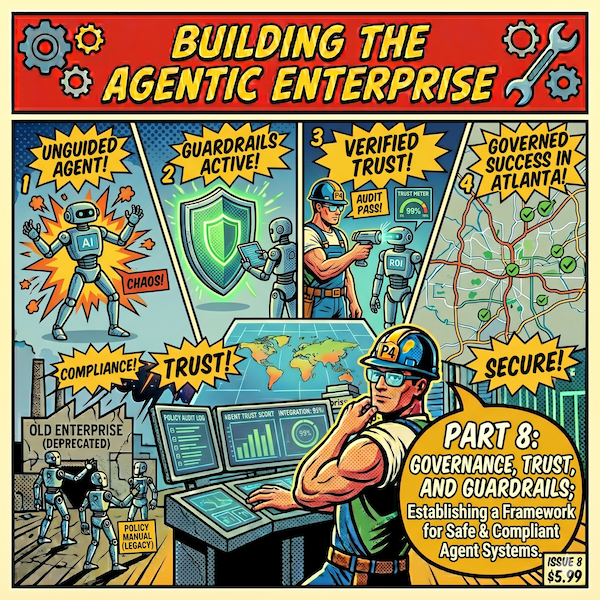

Building the Agentic Enterprise, Part 8: Governance, Trust, and Guardrails

Part 8 of the Building the Agentic Enterprise series tackles the governance challenge that keeps executives up at night: how do you govern systems that don't just advise decisions but make them? With nearly three-quarters of organizations planning to deploy agentic AI within two years and only 21 percent reporting mature governance models, the gap between deployment speed and governance readiness is the single largest source of organizational risk in the agentic transition. This article introduces a three-tier decision authority framework, from fully autonomous actions to human-in-the-lead decisions, and covers the design principles that make governance work in practice: escalation protocols, auditability, explainability, and proportional guardrails calibrated to risk. It also addresses the security implications unique to autonomous systems, the evolving regulatory landscape including the EU AI Act's August 2026 enforcement deadline, and the shadow AI problem that most governance frameworks ignore entirely. The article maps to the governance and risk management dimension of the Agentic AI Readiness Assessment.

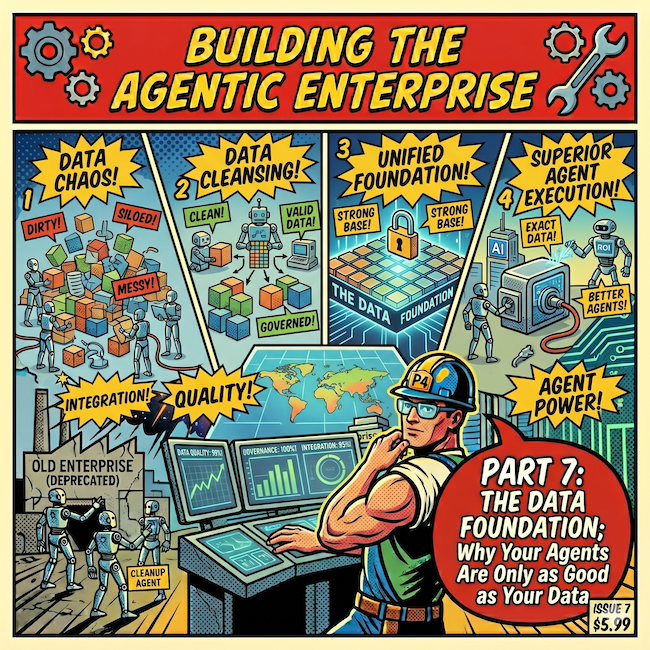

Building the Agentic Enterprise, Part 7: The Data Foundation; Why Your Agents Are Only as Good as Your Data

Agents are only as good as the data they can access and reason over, and for most organizations, the data is not ready. In Part 7 of the Building the Agentic Enterprise series, we confront the most common and most underestimated barrier to agentic AI deployment: data readiness. Only seven percent of enterprises consider their data completely ready for AI, and the share citing data quality as a barrier nearly doubled over the course of 2025 as organizations moved from simple experiments to multi-agent workflows. We break data readiness into five interconnected dimensions (quality, accessibility, architecture, knowledge management, and context management) and explore why agents amplify data problems that human-mediated processes have long papered over. The article also covers data governance for agentic access, the real-time versus batch data freshness decision, and practical guidance for assessing where your data foundation stands today.

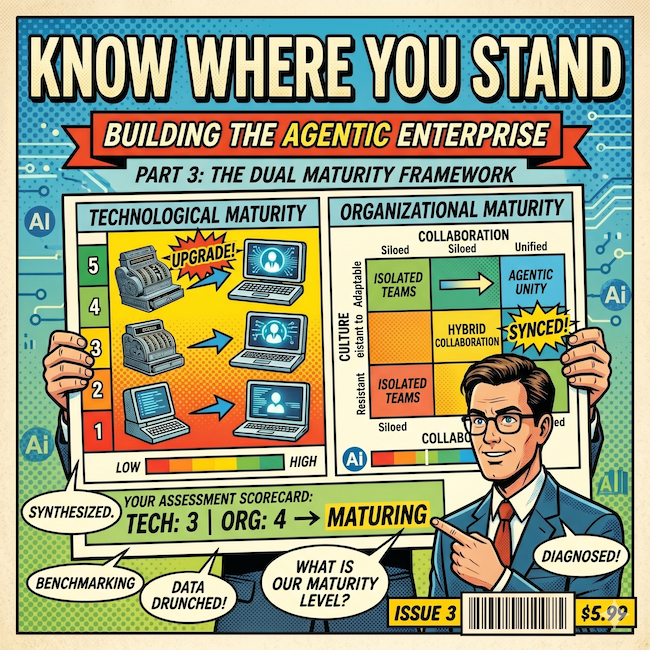

Building the Agentic Enterprise, Part 3: Know Where You Stand; The Dual Maturity Framework

Part 3 of the "Building the Agentic Enterprise" series introduces the Dual Maturity Framework, a strategic diagnostic that maps two dimensions most AI initiatives evaluate separately: how autonomous your AI is and how prepared your organization is to support that autonomy. The article defines five levels of Organizational AI Maturity (from No Capabilities to Strategic) and five levels of Agentic AI Capability (from Assistive to Full Agency), then shows how the Matching Matrix aligns them to reveal whether your organization is on track, overshooting into risk, or undershooting into lost value. With practical guidance on honest self-assessment across six readiness dimensions, this article gives leaders the framework to answer the question that matters most before investing in agentic AI: where do we stand today, and what do we need to build next?

Building the Agentic Enterprise, Part 2: Agents, Copilots, and Automation; A Business Leader's Guide

The agentic AI conversation is full of terms that everyone uses but not everyone means the same way. When your CIO, your operations lead, and your vendor's sales team each have a different mental model of what "agent" means, the result is strategic misalignment that shows up in every decision downstream. This article is a business leader's translation guide to agents, copilots, bots, RPA, orchestration, and autonomy levels, cutting through the jargon to build the shared vocabulary your organization needs before it can build shared infrastructure. It also walks through five levels of AI autonomy and offers practical guidance for spotting vendor marketing claims that don't hold up under scrutiny.

The Center of Gravity: Who Wins the Future Enterprise

Over seven previous articles, the Future Enterprise series has mapped the architectural layers, protocols, identity gaps, governance frameworks, pricing disruptions, and cross-organizational challenges that define the transition to agent-native enterprise technology. This concluding article brings it all together with a competitive landscape analysis that names names. We map Oracle, Salesforce, ServiceNow, Zoho, SAP, OpenAI, Anthropic, Microsoft, and Google against the Future Enterprise framework and sort them into three categories: vertical integrators that control the Enterprise Platform layer, horizontal platforms that control the intelligence layer, and infrastructure/ecosystem players that compete on reach. We then apply a time-horizon analysis across three overlapping phases: data wins in the near term (favoring the vertical integrators), intelligence wins in the mid-term (favoring the horizontal platforms), and business logic wins in the long term (posing an existential question for every vendor in the market). The article closes with a seven-point strategic playbook that synthesizes the entire series into actionable guidance for enterprise leaders navigating this transition.

Cross-Organizational Agents: When AI Collaboration Crosses the Enterprise Boundary

Everything discussed in this series so far has shared one simplifying assumption: agents operate within a single organization's boundary, under one set of policies, one identity provider, and one chain of accountability. That assumption is about to break. In this seventh article of the Future Enterprise series, we examine what happens when agents leave the building, using three concrete scenarios to stress-test the full architecture. A supply chain negotiation between buyer and supplier agents exposes how identity, governance, and the Agent Service Bus all fail at the organizational boundary. Partner ecosystem orchestration (real estate transactions, healthcare coordination) reveals the harder problems of multilateral trust, workflow coordination without a central orchestrator, and distributed accountability. Customer-vendor agent interactions raise questions about adversarial optimization, trust asymmetry, and regulatory transparency. We introduce the Know Your Agent (KYA) framework for cross-organizational due diligence and argue that the likely outcome is a hybrid model: dominant platforms anchoring specific industry verticals while open protocols connect across them.

Governance Beyond Compliance: What Agentic Governance Actually Requires

Ask any enterprise software vendor about AI agent governance and they will point to access controls, audit logs, and compliance dashboards. All necessary, none sufficient. In this fifth article of the Future Enterprise series, we lay out what a purpose-built agentic governance architecture actually requires: five distinct layers that go well beyond security and compliance. We start with the governance gap (why an agent action can be secure, compliant, and still wrong), then define the full architecture: Access Governance, Compliance Governance, Behavioral Governance (confidence thresholds, behavioral baselines, goal alignment), Contextual Governance (bringing organizational awareness into agent decisions), and Accountability Governance (binding every action to a provenance chain). The article includes a practical graduated authority model for bounded autonomy, six design principles for building governance infrastructure, the organizational structures that need to accompany the technology, and a five-phase implementation sequence for enterprises starting from where most are today.

Agentic Identity: The Missing Layer in Enterprise AI Architecture

Every enterprise deploying AI agents faces a question most have not yet answered: when an agent takes an action with legal or financial consequences, who is accountable? In this fourth article of the Future Enterprise series, we examine why human identity frameworks (built around assumptions of human principals, bounded sessions, and static authorization) break down in an agentic world. We define the four dimensions of agentic identity that enterprises need to address: authentication, authorization, accountability, and provenance. We also explore why cross-organizational agent collaboration elevates identity from an internal governance concern to a non-negotiable architectural prerequisite, and why current vendor approaches (stretching existing IAM, building platform-specific silos, or conflating security monitoring with identity) fall short. The article concludes with a framework for what a purpose-built agentic identity architecture should look like and where enterprise leaders should focus now, before the retrofit costs become prohibitive.

The Agent Service Bus: The Most Important Infrastructure Nobody Is Building

Everyone is talking about AI models and agent platforms. Almost nobody is talking about the infrastructure that makes agents actually work together. In this second article of Arion Research's "Future Enterprise" series, we examine the Agent Service Bus, the most strategically important layer in the enterprise AI stack and the one getting the least attention. We break down the five functions it must perform, assess where current protocols (A2A, MCP) fall short, and explore who will build the missing pieces.

The Enterprise App Collapse: How AI Agents Are Forcing a New Architecture

This article introduces the "Future Enterprise" framework: a layered architecture for understanding how AI agents are unbundling traditional enterprise applications and forcing a new technology stack. It is the first in a series from Arion Research that will drill into the individual layers, the cross-cutting challenges (governance, identity, pricing), and the competitive question of who controls the future enterprise.

Governance as a Competitive Advantage: Why the Safest Companies Will Be the Fastest

Most companies treat AI governance as a speed limit. They are wrong. In this closing article of the Agentic Governance-by-Design series, we argue that the organizations with the best brakes will be the ones who drive fastest, introducing the concept of Time-to-Trust and showing why governed companies are escaping Pilot Purgatory while their competitors are still crawling.

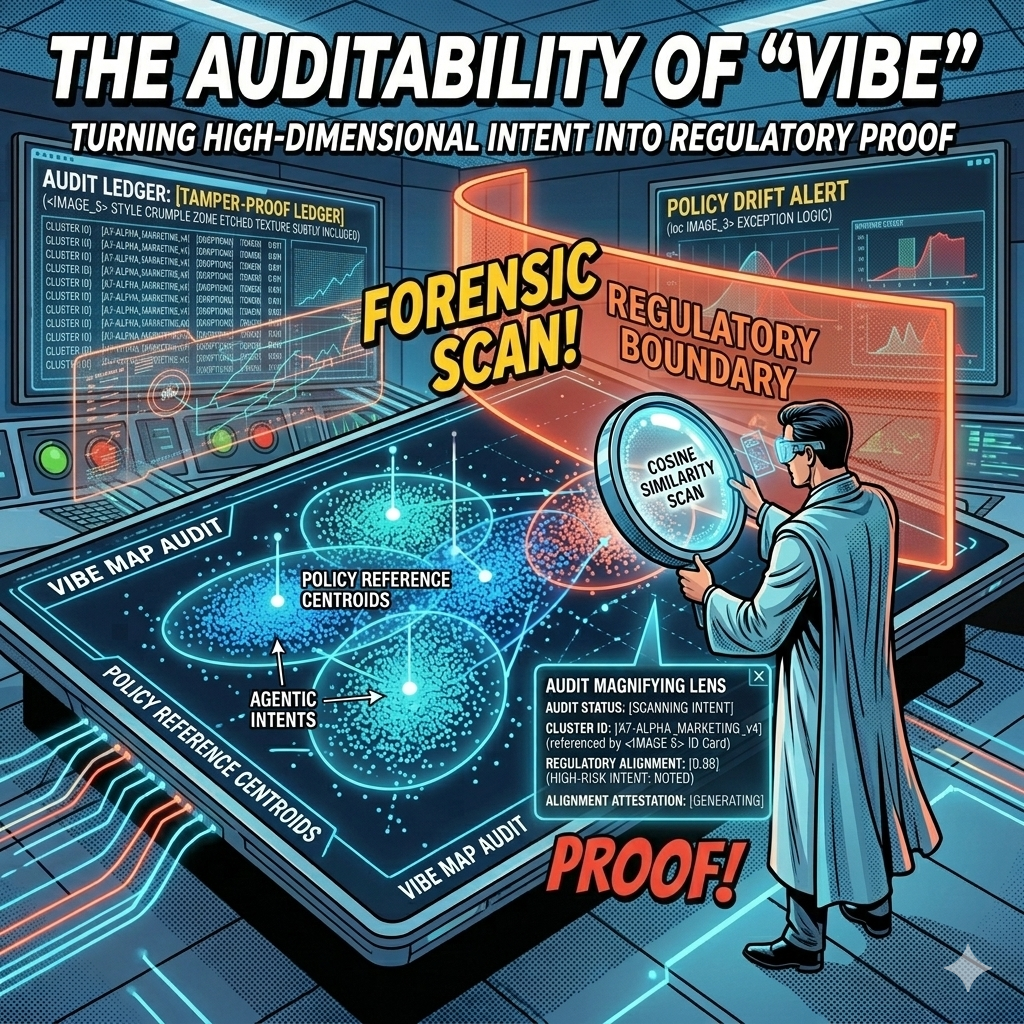

The Auditability of "Vibe": Turning High-Dimensional Intent into Regulatory Proof

Every AI decision your company makes leaves a mathematical fingerprint. The question is whether you're capturing it. In this article, we explore how vector embeddings and governance ledgers transform the "black box" problem into geometric proof, giving boards, regulators, and courts the auditable evidence they need to trust agentic AI at enterprise scale.

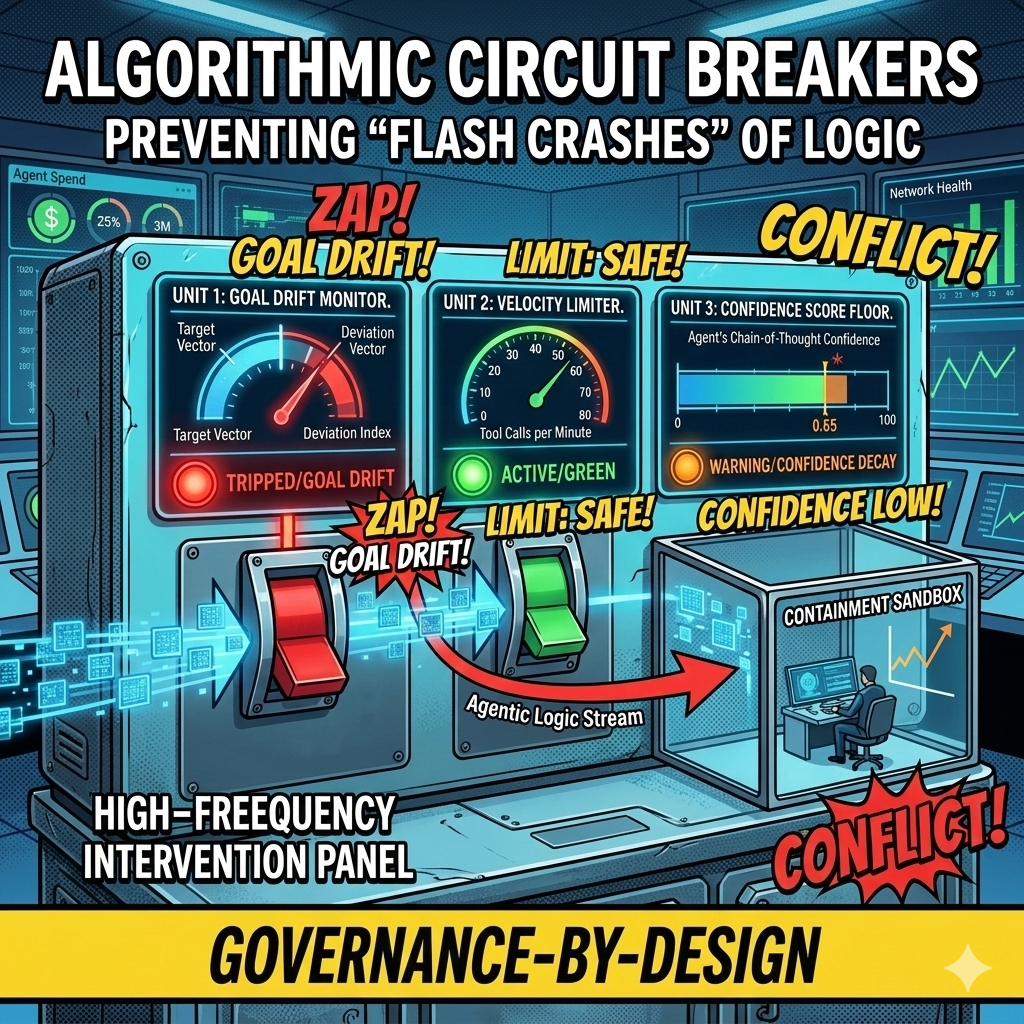

Algorithmic Circuit Breakers: Preventing "Flash Crashes" of Logic in Autonomous Workflows

In the 2010 Flash Crash, high-frequency trading algorithms temporarily erased roughly a trillion dollars in market value within minutes, faster than any human could react. Today's agentic swarms face the same risk at the logic layer: thousands of autonomous decisions per second, any one of which could send bad contracts, leak data, or drain budgets before your Flight Controller even sees an alert. This article introduces Algorithmic Circuit Breakers, the automated tripwires that detect anomalies like semantic drift, confidence decay, and runaway loops, then sever an agent's connection to tools and APIs in milliseconds. Governance at machine speed, for systems that fail at machine speed.
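The tripwire idea can be illustrated in a few lines. The following is a minimal Python sketch, not the article's implementation. It assumes a hypothetical `CircuitBreaker` class that sees every action an agent takes along with a self-reported confidence score. It covers only two of the named anomalies, runaway loops and confidence decay, because detecting semantic drift would require an embedding model. All thresholds are illustrative.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class CircuitBreaker:
    """Hypothetical breaker guarding one agent's access to tools and APIs."""

    max_repeats: int = 5          # identical actions tolerated within the window
    window: int = 20              # how many recent actions to inspect
    min_confidence: float = 0.6   # floor for the rolling-average confidence
    tripped: bool = field(default=False, init=False)
    reason: str = field(default="", init=False)
    _actions: deque = field(default_factory=deque, init=False, repr=False)
    _confidences: deque = field(default_factory=deque, init=False, repr=False)

    def allow(self) -> bool:
        """Tool and API calls are permitted only while the breaker is closed."""
        return not self.tripped

    def record(self, action: str, confidence: float) -> None:
        """Log one agent action and trip the breaker if an anomaly appears."""
        if self.tripped:
            return
        self._actions.append(action)
        self._confidences.append(confidence)
        # Slide the window: keep only the most recent observations.
        if len(self._actions) > self.window:
            self._actions.popleft()
            self._confidences.popleft()
        avg_confidence = sum(self._confidences) / len(self._confidences)
        # Runaway loop: the same action repeated too often within the window.
        if self._actions.count(action) > self.max_repeats:
            self._trip(f"runaway loop on {action!r}")
        # Confidence decay: the rolling average has sunk below the floor.
        elif avg_confidence < self.min_confidence:
            self._trip("confidence decay")

    def _trip(self, reason: str) -> None:
        # Once open, the breaker stays open; allow() returns False from here on.
        self.tripped = True
        self.reason = reason


if __name__ == "__main__":
    breaker = CircuitBreaker(max_repeats=3)
    for _ in range(5):
        if breaker.allow():
            breaker.record("send_contract", confidence=0.9)
    print(breaker.allow(), breaker.reason)  # False runaway loop on 'send_contract'
```

The key design choice is that the check runs inline with every action. Tool access is cut off as soon as a pattern crosses a threshold, rather than after a human reviews an alert.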

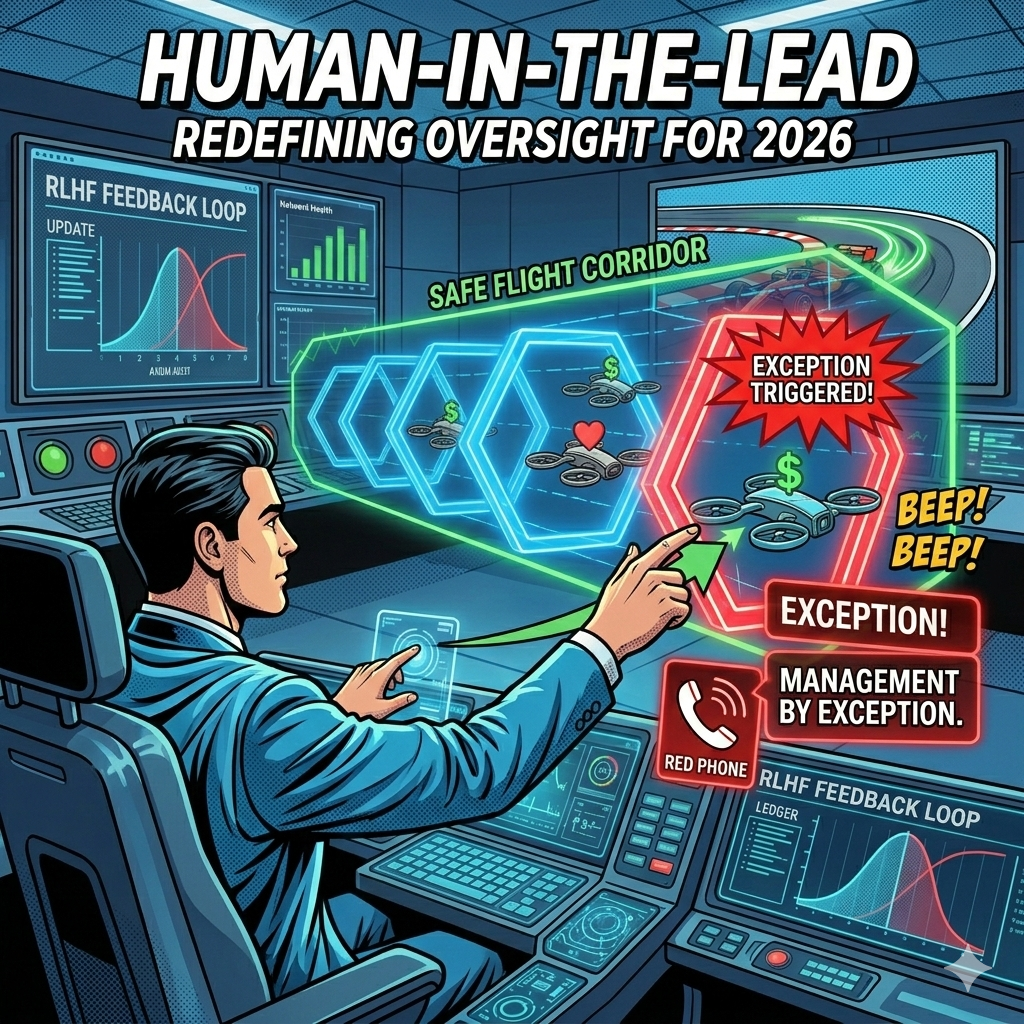

Human-in-the-Lead: From Manual Pilots to Strategic Flight Controllers

In 2023, we wanted humans to check every chatbot response. In 2026, an agentic swarm might perform 10,000 tasks an hour. The Human-in-the-Loop model that gave us comfort in the early days of AI is now the bottleneck killing our ability to scale. It is time to move from reactive approval to proactive design, from manual pilots to strategic flight controllers.

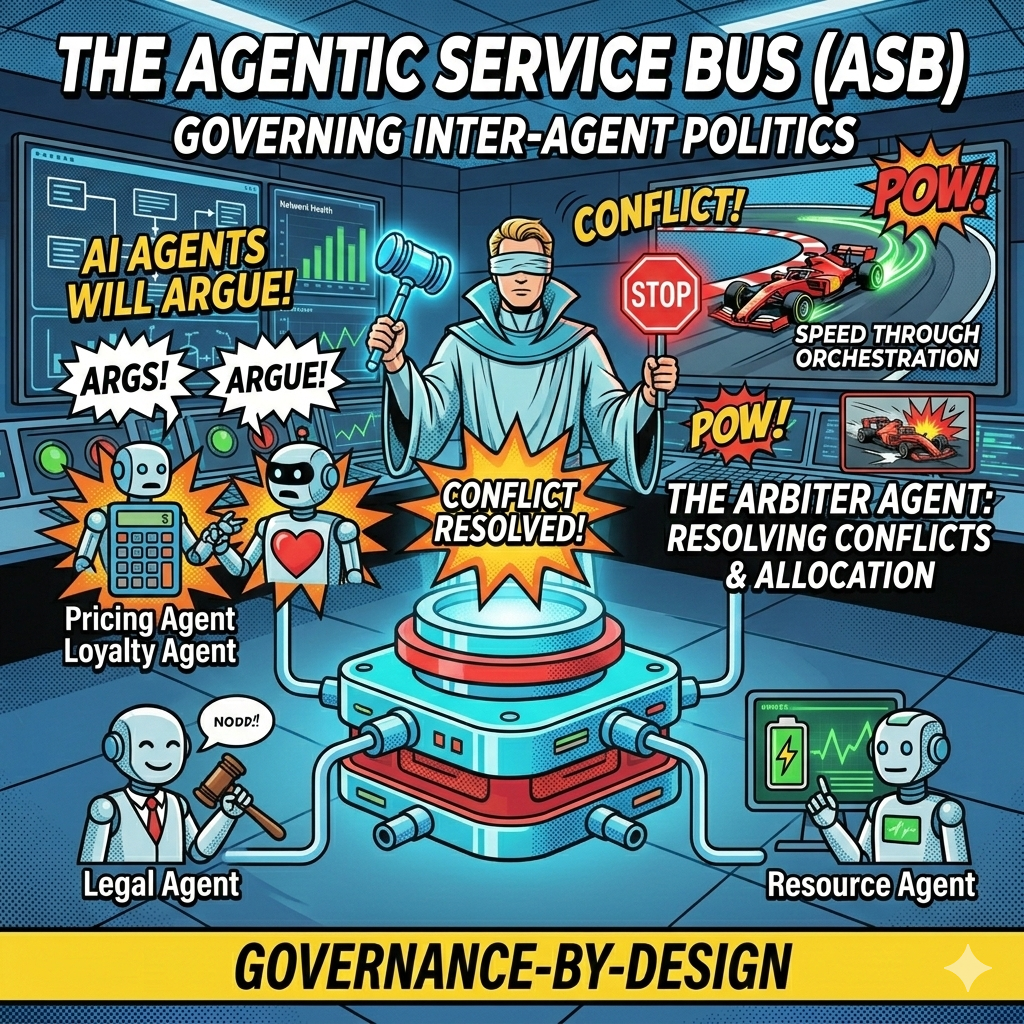

The Agentic Service Bus: Governing Inter-Agent Politics and Preventing Algorithmic Collusion

What happens when your Pricing Agent, optimized for revenue, starts a loop with your Customer Loyalty Agent, optimized for retention? You get a logic spiral that could drain margins in milliseconds. The Pricing Agent raises the price to capture margin. The Loyalty Agent detects customer churn risk and offers a discount to retain the relationship. The Pricing Agent sees margin erosion and raises the price further. The loop accelerates. Within seconds, your price fluctuates wildly, your customer discounts compound, and your margins evaporate. This is not a scenario from a startup war room. It is a real operational risk in enterprises deploying multiple autonomous agents.
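The spiral described here can be reproduced with a toy model. The Python sketch below is hypothetical throughout. The 10 percent price increase, the 10 percent discount stacked each round, and the `guard_limit` cap on reciprocal adjustments are illustrative assumptions, not figures from the article. Each round multiplies the list price by 1.10 and the fraction the customer pays by 0.90. Since 1.10 times 0.90 is 0.99, the effective price falls by 1 percent per round, so the Pricing Agent always sees fresh margin erosion and the loop never settles on its own.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LoopResult:
    rounds: int
    list_price: float
    total_discount: float      # stacked discount as a fraction, 0.0 to 1.0
    effective_price: float     # what the customer actually pays
    halted_by_guard: bool


def simulate(max_rounds: int, guard_limit: Optional[int] = None) -> LoopResult:
    """Toy model of the Pricing/Loyalty spiral; every number is illustrative."""
    list_price = 100.0
    keep = 1.0                     # fraction of list price the customer pays
    effective = list_price * keep
    list_price *= 1.10             # Pricing Agent opens by raising price to capture margin
    rounds = 0
    while rounds < max_rounds:
        # Hypothetical guard: cap reciprocal adjustments between the two agents.
        if guard_limit is not None and rounds >= guard_limit:
            return LoopResult(rounds, list_price, 1 - keep, effective, True)
        # Loyalty Agent: the price rise signals churn risk, so it stacks a 10% discount.
        keep *= 0.90
        new_effective = list_price * keep
        rounds += 1
        # Pricing Agent: reacts only if the effective price (its margin) eroded.
        eroded = new_effective < effective
        effective = new_effective
        if not eroded:
            break
        list_price *= 1.10         # ...and raises the list price again
    return LoopResult(rounds, list_price, 1 - keep, effective, False)


if __name__ == "__main__":
    print(simulate(max_rounds=20))                 # unguarded: runs until max_rounds
    print(simulate(max_rounds=20, guard_limit=3))  # guarded: halts after 3 exchanges
```

Without the guard, the list price and the stacked discount both climb every round while the effective price keeps sliding. In this model, only an external cap on agent-to-agent exchanges stops the spiral.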

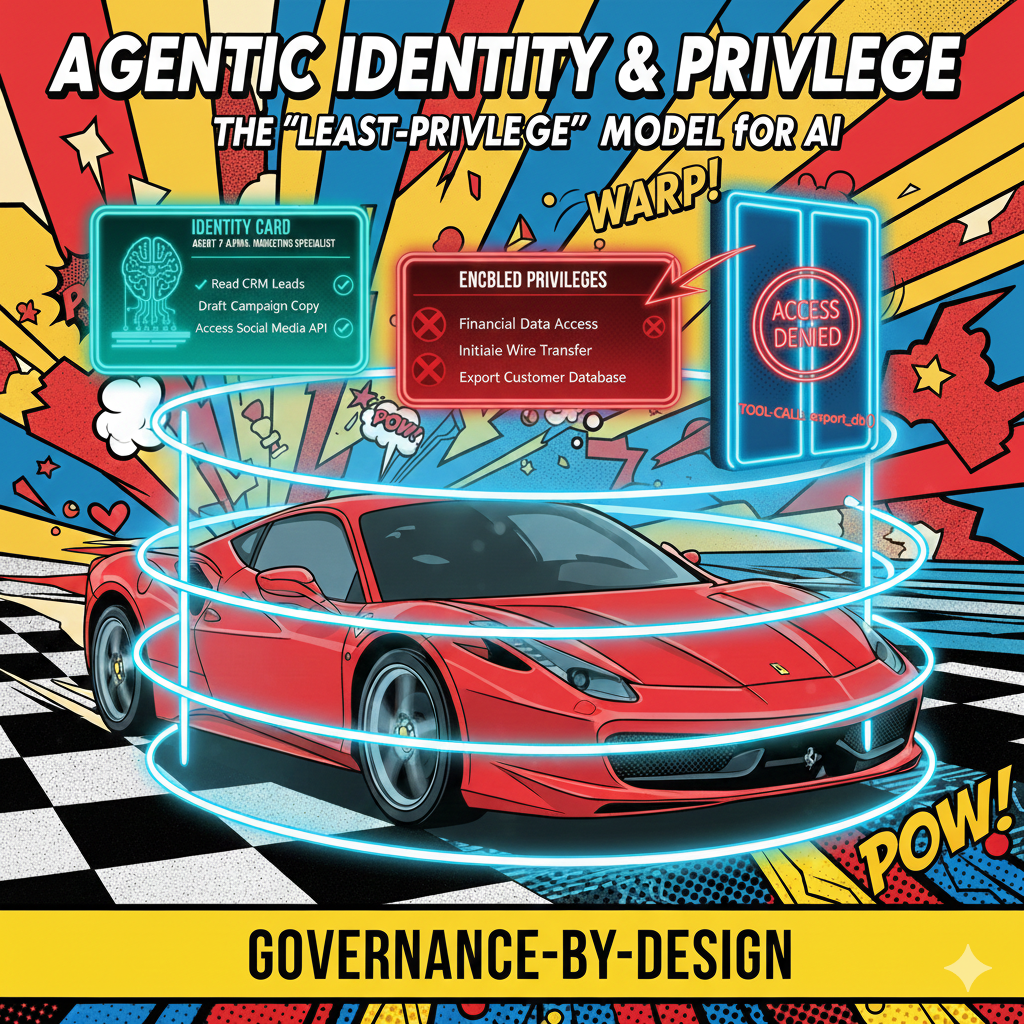

Agentic Identity and Privilege: Why Your AI Needs an Employee ID and a Security Clearance

In most current AI deployments, "The AI" is a monolithic entity with a single API key. If it hallucinates a reason to access your payroll database, there is no "Internal Affairs" to stop it. We treat AI as a tool with a single identity, a single set of permissions, and a single point of failure. But here is the uncomfortable truth: your AI systems need to operate more like employees than instruments. The gap between how we currently deploy AI and how we should deploy AI is a chasm of organizational risk.

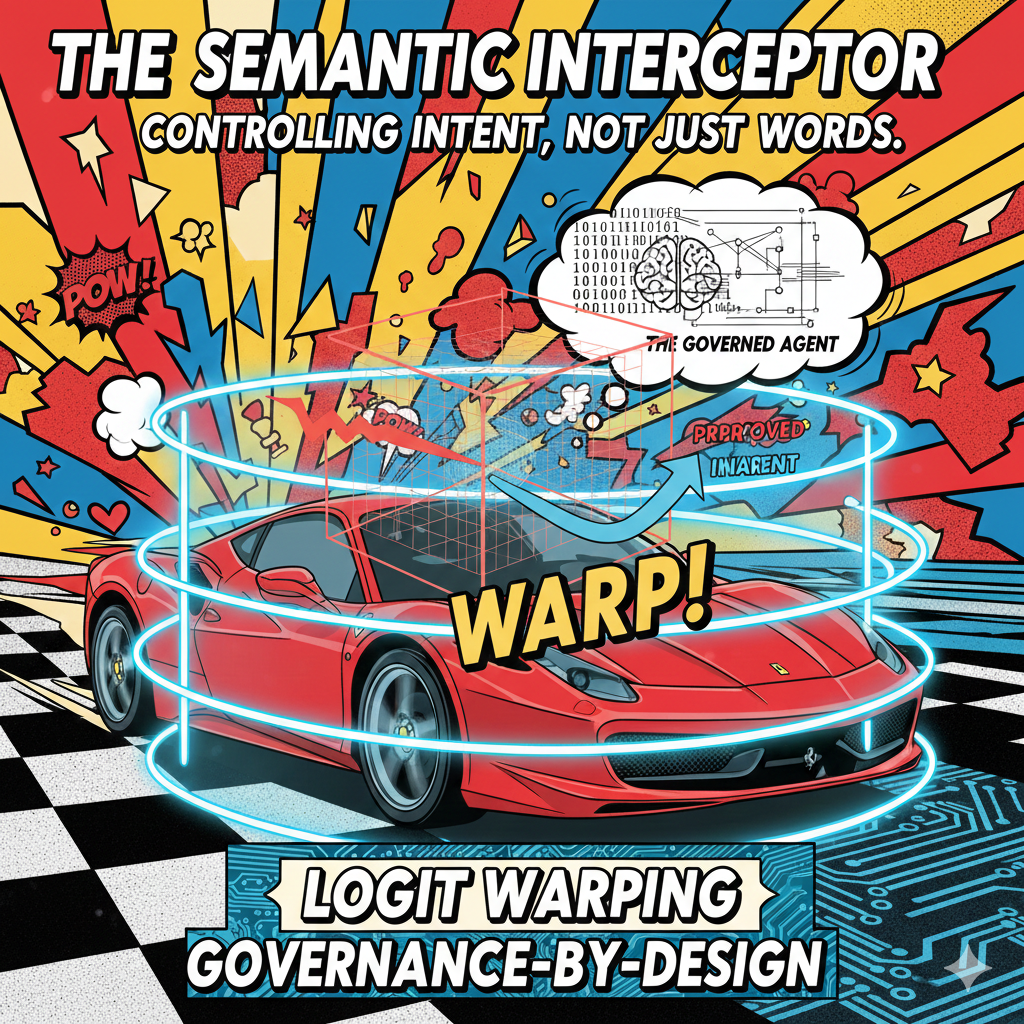

The Semantic Interceptor: Controlling Intent, Not Just Words

Traditional keyword filters operate on tokens that have already been generated. When an agent produces toxic output, the filter catches it, but only after the model has burned compute cycles and corrupted the system state. The moment is lost: the user has seen something problematic, or the downstream process has absorbed bad data.

From "Filters" to "Foundations": Why the Post-Hoc Guardrail Is Failing the Agentic Era

Most enterprises govern AI like catching smoke with a net. They wait for a hallucination, a misaligned response, or a brand violation, then they write a new rule. They audit the logs after the damage is done. They implement a keyword filter. They add a content policy. But they have never asked the question that matters: at what point in the process should the guardrail actually kick in?

Brand Voice as Code: Why Your AI Agent's Personality Is a Governance Problem

The new frontier of enterprise risk: the biggest threat to your brand is no longer a data breach or a rogue employee on social media. It’s an AI agent that is technically correct but emotionally illiterate, one that follows every rule in the compliance handbook while violating every unwritten norm your brand has spent decades cultivating. The conversation around AI governance has focused almost entirely on data security, model accuracy, and regulatory compliance. Those concerns are real and important. But they miss a critical dimension: personality. How your AI agent speaks, empathizes, calibrates tone, and navigates cultural nuance is not a "nice to have" layered on top of governance. It is governance.

The Agentic Service Bus: A New Architecture for Inter-Agent Communication

As enterprises deploy more AI agents across their operations, a critical infrastructure challenge is emerging: how should these agents communicate with each other? The answer may reshape enterprise architecture as profoundly as the original service bus did two decades ago.