Building the Agentic Enterprise, Part 5: The Orchestration Layer; Why Coordination Is the New Competitive Edge

Single-agent deployments deliver value, but they hit a ceiling when work requires coordination across multiple agents, systems, and people. This article explains orchestration in business terms: the layer that decides which agent does what, in what order, with what information, and what happens when something goes wrong. It covers four orchestration patterns (sequential, parallel, hierarchical, and event-driven), draws a clear distinction between human-in-the-loop and the more effective human-in-the-lead model, and addresses the observability challenge that consumes 30 to 40 percent of implementation effort in production deployments. The article surveys the emerging infrastructure landscape, from enterprise platforms to open frameworks and interoperability standards like Google's A2A and Anthropic's MCP. The "What It Takes" section focuses on technical infrastructure readiness: API readiness, system interoperability, identity and access management at agent scale, compute costs, and shared state management.

Agentic IoT: What It Really Means, and How It's Being Misused

The enterprise IoT world is racing to rebrand itself as "agentic," but most of what's being labeled agentic IoT is standard automation with new marketing copy. This article defines what agentic IoT would look like based on industry consensus, walks through real product and patent portfolio reviews that expose the gap between the label and the technology, and provides a five-point framework for evaluating agentic claims.

Governance Beyond Compliance: What Agentic Governance Actually Requires

Ask any enterprise software vendor about AI agent governance and they will point to access controls, audit logs, and compliance dashboards. All necessary, none sufficient. In this fifth article of the Future Enterprise series, we lay out what a purpose-built agentic governance architecture actually requires: five distinct layers that go well beyond security and compliance. We start with the governance gap (why an agent action can be secure, compliant, and still wrong), then define the full architecture: Access Governance, Compliance Governance, Behavioral Governance (confidence thresholds, behavioral baselines, goal alignment), Contextual Governance (bringing organizational awareness into agent decisions), and Accountability Governance (binding every action to a provenance chain). The article includes a practical graduated authority model for bounded autonomy, six design principles for building governance infrastructure, the organizational structures that need to accompany the technology, and a five-phase implementation sequence for enterprises starting from where most are today.

Agentic Identity: The Missing Layer in Enterprise AI Architecture

Every enterprise deploying AI agents faces a question most have not yet answered: when an agent takes an action with legal or financial consequences, who is accountable? In this fourth article of the Future Enterprise series, we examine why human identity frameworks (built around assumptions of human principals, bounded sessions, and static authorization) break down in an agentic world. We define the four dimensions of agentic identity that enterprises need to address: authentication, authorization, accountability, and provenance. We also explore why cross-organizational agent collaboration elevates identity from an internal governance concern to a non-negotiable architectural prerequisite, and why current vendor approaches (stretching existing IAM, building platform-specific silos, or conflating security monitoring with identity) fall short. The article concludes with a framework for what a purpose-built agentic identity architecture should look like and where enterprise leaders should focus now, before the retrofit costs become prohibitive.

The Agent Service Bus: The Most Important Infrastructure Nobody Is Building

Everyone is talking about AI models and agent platforms. Almost nobody is talking about the infrastructure that makes agents actually work together. In this second article of Arion Research's "Future Enterprise" series, we examine the Agent Service Bus, the most strategically important layer in the enterprise AI stack and the one getting the least attention. We break down the five functions it must perform, assess where current protocols (A2A, MCP) fall short, and explore who will build the missing pieces.

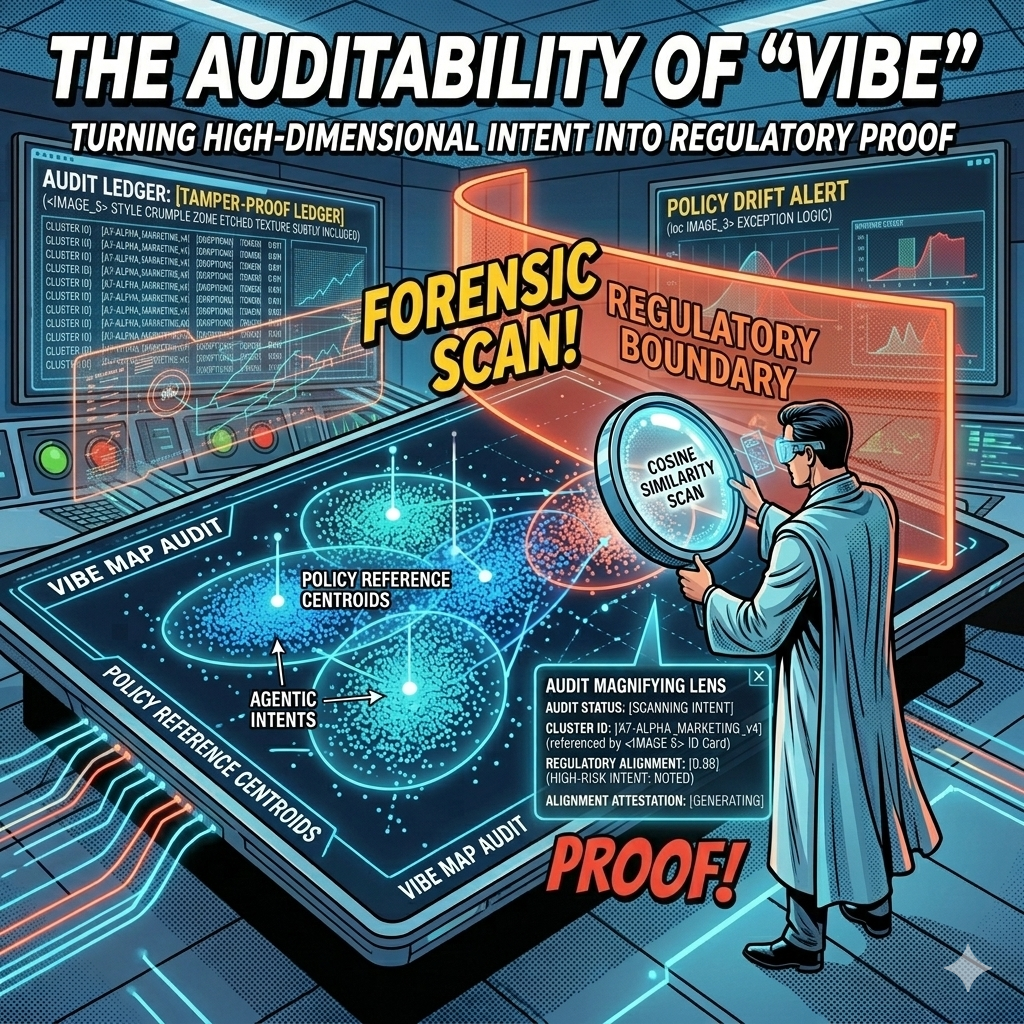

The Auditability of "Vibe": Turning High-Dimensional Intent into Regulatory Proof

Every AI decision your company makes leaves a mathematical fingerprint. The question is whether you're capturing it. In this article, we explore how vector embeddings and governance ledgers transform the "black box" problem into geometric proof, giving boards, regulators, and courts the auditable evidence they need to trust agentic AI at enterprise scale.

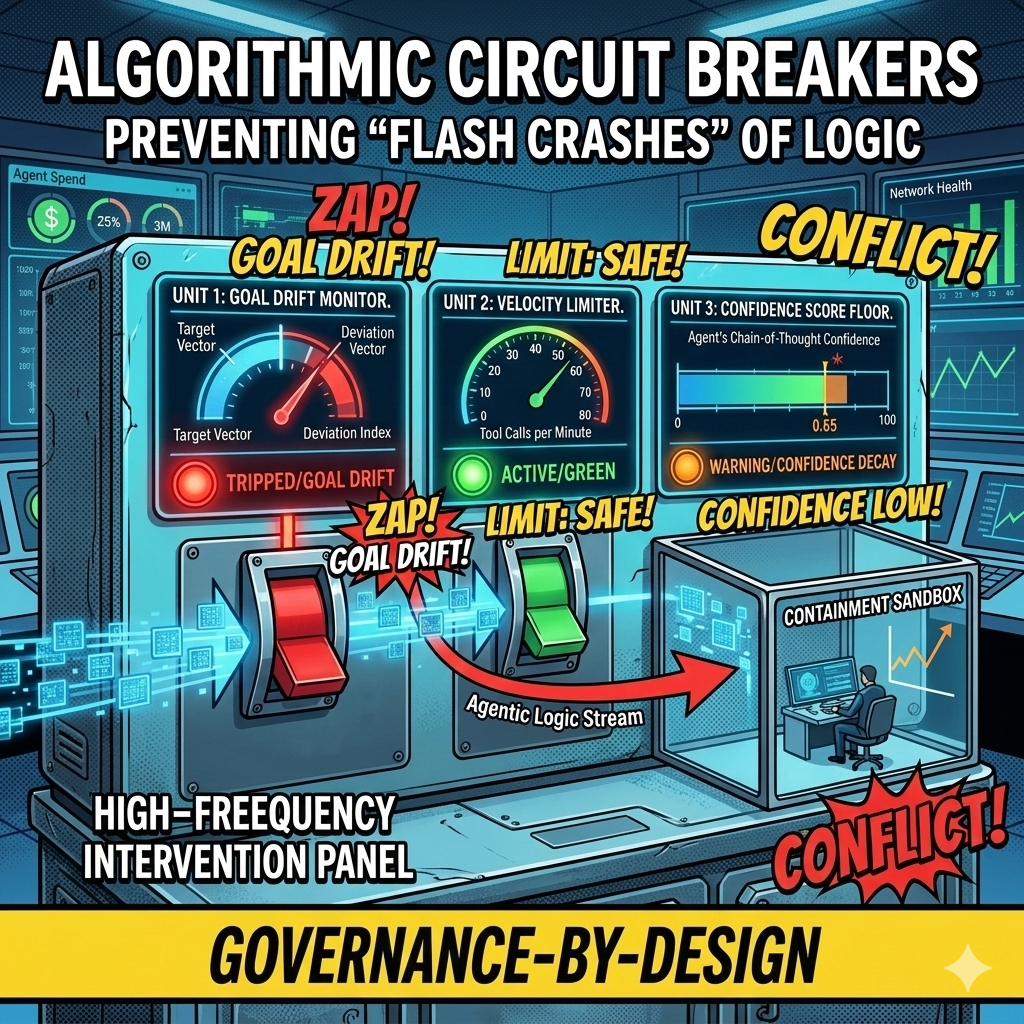

Algorithmic Circuit Breakers: Preventing "Flash Crashes" of Logic in Autonomous Workflows

In 2010, high-frequency trading algorithms erased a trillion dollars in market value within minutes, faster than any human could react. Today's agentic swarms face the same risk at the logic layer: thousands of autonomous decisions per second, any one of which could send bad contracts, leak data, or drain budgets before your Flight Controller even sees an alert. This article introduces Algorithmic Circuit Breakers, the automated tripwires that detect anomalies like semantic drift, confidence decay, and runaway loops, then sever an agent's connection to tools and APIs in milliseconds. Governance at machine speed, for systems that fail at machine speed.
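To make the tripwire idea concrete, here is a minimal sketch of a breaker that watches for two of the anomalies named above, confidence decay and runaway loops, and refuses further tool calls once tripped. The class name, thresholds, and window size are illustrative assumptions, not details from the article.

```python
from collections import deque


class CircuitBreaker:
    """Hypothetical breaker; all thresholds below are assumed values."""

    def __init__(self, min_confidence=0.6, max_repeats=3, window=5):
        self.min_confidence = min_confidence          # floor for mean recent confidence
        self.max_repeats = max_repeats                # identical consecutive actions allowed
        self.confidences = deque(maxlen=window)       # rolling confidence window
        self.actions = deque(maxlen=max_repeats)      # most recent actions only
        self.tripped = False
        self.reason = None

    def record(self, action, confidence):
        """Log one agent step and trip the breaker if it looks anomalous."""
        if self.tripped:
            return
        self.confidences.append(confidence)
        self.actions.append(action)
        window_full = len(self.confidences) == self.confidences.maxlen
        mean_conf = sum(self.confidences) / len(self.confidences)
        if window_full and mean_conf < self.min_confidence:
            self._trip("confidence decay")
        elif len(self.actions) == self.max_repeats and len(set(self.actions)) == 1:
            self._trip("runaway loop")

    def _trip(self, reason):
        self.tripped = True
        self.reason = reason

    def call_tool(self, tool, *args):
        """Gate every tool call; an open circuit severs access."""
        if self.tripped:
            raise RuntimeError(f"circuit open: {self.reason}")
        return tool(*args)
```

Routing every tool call through `call_tool` is the design choice that matters here: the agent never holds a direct reference to the tool, so tripping the breaker cuts access without waiting for a human to see an alert.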

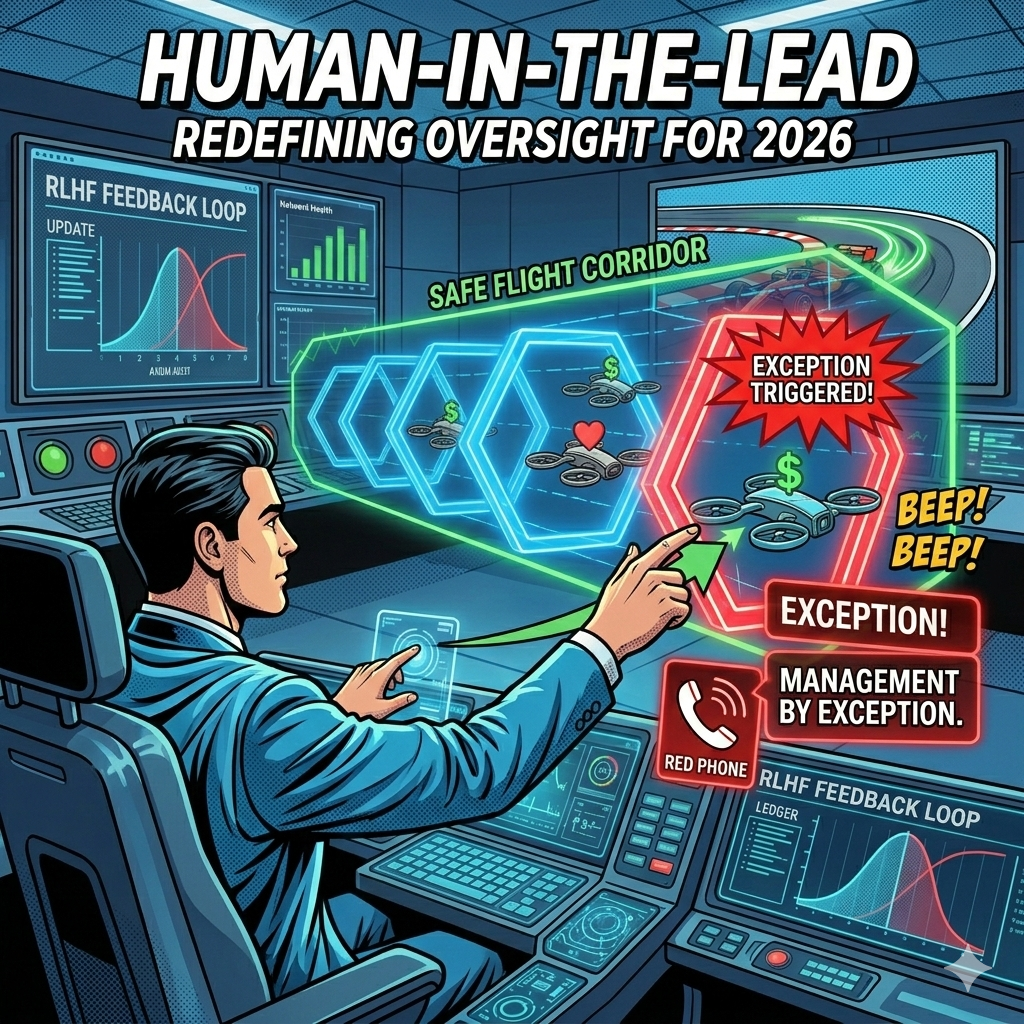

Human-in-the-Lead: From Manual Pilots to Strategic Flight Controllers

In 2023, we wanted humans to check every chatbot response. In 2026, an agentic swarm might perform 10,000 tasks an hour. The Human-in-the-Loop model that gave us comfort in the early days of AI is now the bottleneck killing our ability to scale. It is time to move from reactive approval to proactive design, from manual pilots to strategic flight controllers.

The Agentic Service Bus: Governing Inter-Agent Politics and Preventing Algorithmic Collusion

What happens when your Pricing Agent, optimized for revenue, starts a loop with your Customer Loyalty Agent, optimized for retention? You get a logic spiral that could drain margins in milliseconds. The Pricing Agent raises the price to capture margin. The Loyalty Agent detects customer churn risk and offers a discount to retain the relationship. The Pricing Agent sees margin erosion and raises the price further. The loop accelerates. Within seconds, your price fluctuates wildly, your customer discounts compound, and your margins evaporate. This is not a scenario from a startup war room. It is a real operational risk in enterprises deploying multiple autonomous agents.
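The spiral described above can be reproduced in a toy simulation. The sketch below assumes a 5 percent price increase and a matching discount per round, and models the bus as nothing more than a cap on how many rounds the two agents may exchange. The function name, the percentages, and the `max_rounds` parameter are all hypothetical.

```python
def simulate_loop(list_price, steps=10, max_rounds=None):
    """Toy model of the Pricing/Loyalty feedback loop (illustrative numbers only)."""
    price = list_price
    discount = 0.0
    for round_no in range(1, steps + 1):
        # Hypothetical bus policy: stop the exchange after max_rounds rounds.
        if max_rounds is not None and round_no > max_rounds:
            return price, discount, "halted"
        price *= 1.05               # Pricing Agent raises price to recover margin
        discount += 0.05 * price    # Loyalty Agent counters with a larger discount
    return price, discount, "completed"


free = simulate_loop(100.0)                  # no governance: loop runs every step
capped = simulate_loop(100.0, max_rounds=3)  # bus halts the exchange after 3 rounds
```

In the uncapped run both the price and the accumulated discount keep growing each round, while the capped run stops at the third round. A real bus would need richer policies than a round limit, but the cap shows where the intervention point sits: between the agents, not inside either one.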

Agentic Identity and Privilege: Why Your AI Needs an Employee ID and a Security Clearance

In most current AI deployments, "The AI" is a monolithic entity with a single API key. If it hallucinates a reason to access your payroll database, there is no "Internal Affairs" to stop it. We treat AI as a tool with a single identity, a single set of permissions, and a single point of failure. But here is the uncomfortable truth: your AI systems need to operate more like employees than instruments. The gap between how we currently deploy AI and how we should deploy AI is a chasm of organizational risk.
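As a rough illustration of the alternative to a single shared API key, the sketch below gives each agent its own identity and an explicit set of scopes, then checks every request against them. The names `AgentIdentity` and `authorize` and the `resource:action` scope format are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical per-agent identity: an ID plus the scopes it was granted."""
    agent_id: str
    scopes: frozenset


def authorize(agent, resource, action):
    """Allow a request only if the agent holds the exact scope for it."""
    return f"{resource}:{action}" in agent.scopes


support_agent = AgentIdentity("support-01", frozenset({"tickets:read", "tickets:write"}))
payroll_agent = AgentIdentity("payroll-01", frozenset({"payroll:read"}))
```

Under this scheme a support agent that hallucinates a reason to read payroll data is denied by default, because `authorize(support_agent, "payroll", "read")` finds no matching scope.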

The Agentic Service Bus: A New Architecture for Inter-Agent Communication

As enterprises deploy more AI agents across their operations, a critical infrastructure challenge is emerging: how should these agents communicate with each other? The answer may reshape enterprise architecture as profoundly as the original service bus did two decades ago.

Beyond Trial and Error: How Internal RL is Redefining AI Agency

Traditionally, artificial intelligence agents have learned the same way toddlers do: by taking actions, observing what happens, and gradually improving through countless iterations. A robot learning to grasp objects drops them hundreds of times. An AI learning to play chess loses thousands of games. This external trial-and-error approach has produced remarkable results, but it comes at a cost. Every mistake has a real-world price, whether in computational resources, physical wear on hardware, or, in some cases, actual safety risk.