Human-in-the-Lead: From Manual Pilots to Strategic Flight Controllers

The "Latency of Liberty": Why Human-in-the-Loop Is Dead

In 2023, we wanted humans to check every chatbot response. In 2026, an agentic swarm might perform 10,000 tasks an hour. This simple truth exposes a critical flaw in the Human-in-the-Loop paradigm that dominated the early era of agentic systems. The architecture that felt safe and controlled at small scale collapses spectacularly when you apply it to real-world agent populations.

The Bottleneck: Human-in-the-Loop creates a clutch that is constantly slipping. If the human has to approve every step, you do not have an agent; you have a very expensive, slow intern. Approval overhead grows in direct proportion to task volume, while one human's review capacity stays fixed, so once agents outpace their reviewer the queue of pending approvals grows without limit. A single human cannot cognitively process thousands of requests per hour. A procurement agent processing vendor evaluations cannot wait for human sign-off on every decision. The entire point of automation vanishes the moment you bolt a human-approval gate onto every transaction. You have not scaled; you have only made the problem worse. The human is now the system bottleneck, the single point of failure.
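To make the bottleneck concrete, here is a back-of-the-envelope calculation. The 10,000 tasks per hour comes from the scenario above; the 30-second review time per approval is an assumption chosen for illustration, and `backlog_after` and `reviewers_needed` are hypothetical helpers. The gap it exposes is linear in volume, but it never closes: once arrivals outpace review, the queue only grows.

```python
import math

# Scenario figures: the task rate is from the text; the review time is an assumption.
TASKS_PER_HOUR = 10_000
SECONDS_PER_REVIEW = 30


def reviewer_capacity_per_hour(seconds_per_review: float) -> float:
    """Approvals one reviewer can clear in an hour of uninterrupted work."""
    return 3600 / seconds_per_review


def backlog_after(hours: float, arrivals_per_hour: float,
                  reviewers: int, seconds_per_review: float) -> float:
    """Unreviewed tasks left after `hours` when every task needs human approval."""
    capacity = reviewers * reviewer_capacity_per_hour(seconds_per_review)
    return max(0.0, (arrivals_per_hour - capacity) * hours)


def reviewers_needed(arrivals_per_hour: float, seconds_per_review: float) -> int:
    """Smallest headcount whose combined capacity keeps up with arrivals."""
    return math.ceil(arrivals_per_hour / reviewer_capacity_per_hour(seconds_per_review))


print(backlog_after(8, TASKS_PER_HOUR, 1, SECONDS_PER_REVIEW))   # 79040.0
print(reviewers_needed(TASKS_PER_HOUR, SECONDS_PER_REVIEW))      # 84
```

Under these assumptions one reviewer clears 120 approvals an hour, so an eight-hour shift ends with 79,040 unreviewed tasks, and simply keeping pace would take 84 full-time reviewers.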

We are moving from Human-in-the-Loop (Reactive) to Human-in-the-Lead (Proactive Design). This shift changes everything about how humans interact with their agents. The human stops reviewing outputs and starts designing constraints: instead of firefighting after the agent acts, the human sets the rules before the agent moves. This is not a small change in operational practice; it inverts the governance model itself. The human moves upstream in the decision pipeline, where influence is high and effort is low, and reactive approval gives way to proactive constraint design.

Consider a procurement agent that processes 500 vendor evaluations per hour. Under Human-in-the-Loop, a human must approve each one. That creates an instant bottleneck that defeats the entire purpose of automation. The agent cannot move faster than the human can click. Decisions pile up. Risk increases. Cycles lengthen. Under Human-in-the-Lead, the human defines the evaluation criteria, the scoring weights, the disqualification thresholds, and the escalation rules before the agent starts its work. The agent then executes autonomously within those boundaries. It makes thousands of decisions per day, all aligned with the human's intent, all governed by the constraints the human designed. Governance shifts from output-reactive to design-proactive. Speed scales. Consistency improves. And the human's time is reclaimed for actual strategy instead of being consumed by the tyranny of approval.
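The Human-in-the-Lead version of this procurement flow can be sketched as a policy object the human writes once and an evaluation function the agent runs on every vendor. This is a minimal illustration, not the series' implementation: `EvaluationPolicy`, `evaluate`, the criterion names, and every threshold value are hypothetical. Disqualification floors run first, a weighted score is compared against a decision line, and only scores inside a narrow band around that line are escalated to a human.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    APPROVE = "approve"
    REJECT = "reject"
    ESCALATE = "escalate"


@dataclass
class EvaluationPolicy:
    """Constraints the human designs before the agent starts (hypothetical schema)."""
    weights: dict[str, float]           # scoring weight per evaluation criterion
    disqualify_below: dict[str, float]  # hard floor per criterion; failing one rejects outright
    approve_at: float                   # weighted score at the decision line
    escalation_band: float              # scores this close to the line go to a human


def evaluate(vendor: dict[str, float], policy: EvaluationPolicy) -> tuple[Decision, float]:
    """Score one vendor autonomously inside the human-designed boundaries."""
    # Disqualification rules run first; no weighted score can override them.
    for criterion, floor in policy.disqualify_below.items():
        if vendor.get(criterion, 0.0) < floor:
            return Decision.REJECT, 0.0
    total_weight = sum(policy.weights.values())
    score = sum(w * vendor.get(c, 0.0) for c, w in policy.weights.items()) / total_weight
    # Escalation rule: only near-the-line cases reach the human.
    if abs(score - policy.approve_at) <= policy.escalation_band:
        return Decision.ESCALATE, score
    return (Decision.APPROVE if score > policy.approve_at else Decision.REJECT), score


policy = EvaluationPolicy(
    weights={"price": 0.5, "quality": 0.3, "delivery": 0.2},
    disqualify_below={"compliance": 1.0},
    approve_at=0.7,
    escalation_band=0.05,
)
strong = {"price": 0.9, "quality": 0.8, "delivery": 0.7, "compliance": 1.0}
non_compliant = {"price": 0.95, "quality": 0.9, "delivery": 0.9, "compliance": 0.0}
borderline = {"price": 0.7, "quality": 0.7, "delivery": 0.7, "compliance": 1.0}
for vendor in (strong, non_compliant, borderline):
    print(evaluate(vendor, policy)[0])
```

The design choice worth noting is the escalation band: the human decides in advance how wide the gray zone is, which directly controls how often the agent pages them.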

The "Flight Controller" Model

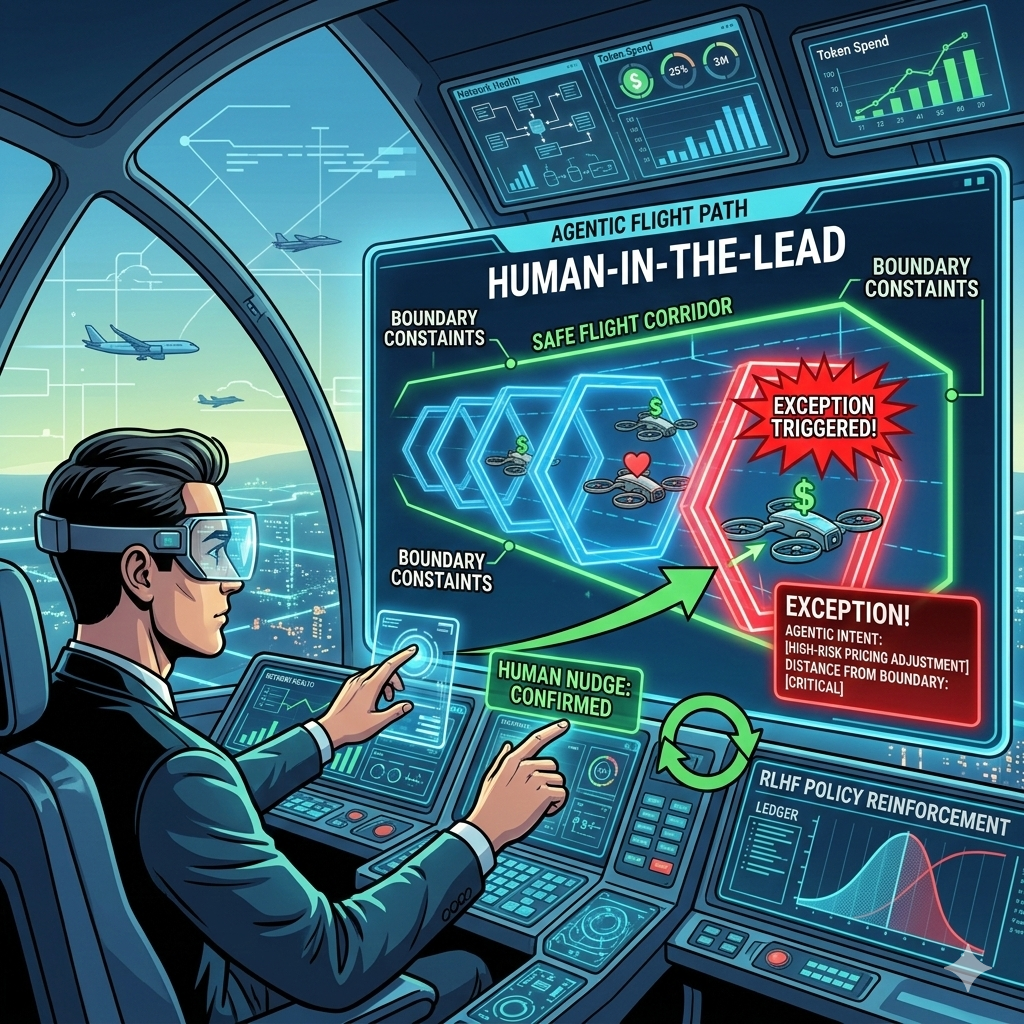

Just as an Air Traffic Controller does not fly the planes but sets the corridors, altitudes, and headings, the AI Executive sets the Vector Space Boundaries. This is more than a metaphor; it is the operational reality of modern agentic governance. The ATC system works at massive scale precisely because controllers do not try to micromanage every aircraft. They set the structure. The pilots operate within it. Scale emerges from this separation of concerns.

Setting the flight path means defining two critical inputs: the Goal State and the Constraint Set. Humans define where the agent can go, how fast it can move, and what it must avoid. These are the Foundations from Article 2. They establish the Green Zone of permissible intent. The agent navigates autonomously within that zone. The human does not manage throttle and flaps; the human sets altitude, heading, corridor boundaries, and airspace restrictions. The pilot knows the lanes. The pilot knows the rules. The pilot flies the mission.

The agents fly the mission autonomously as long as they stay within the Green Zone of the governance architecture. The multi-axis coordinate system from Article 3 allows the Semantic Interceptor to measure intent in real time. As long as the agent's intent vector stays within the bounded region, execution is unsupervised. The human does not see logs, alerts, or prompts. The system simply works. This is the promise of Governance-by-Design. You do not need constant surveillance. You need clear, enforceable boundaries that keep the agent honest and on course.
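A Green Zone check can be sketched very simply if one assumes the bounded region is an axis-aligned box: a permitted interval on each axis of the intent coordinate system. That is a simplifying assumption for illustration; the Article 3 coordinate system may define the region differently, and `GreenZone` and the axis names are hypothetical. The agent runs unsupervised while `permits` returns `True`; as soon as any axis leaves its interval, `violations` names the axes involved.

```python
from dataclasses import dataclass


@dataclass
class GreenZone:
    """Hypothetical bounded region of permissible intent: one interval per axis."""
    lower: dict[str, float]
    upper: dict[str, float]

    def violations(self, intent: dict[str, float]) -> list[str]:
        """Axes on which the intent vector falls outside its permitted interval.
        Every axis named in the zone must be present in `intent`."""
        return [axis for axis in self.lower
                if not self.lower[axis] <= intent[axis] <= self.upper[axis]]

    def permits(self, intent: dict[str, float]) -> bool:
        """True while the agent stays inside the Green Zone on every axis."""
        return not self.violations(intent)


zone = GreenZone(lower={"spend": 0.0, "data_sensitivity": 0.0},
                 upper={"spend": 0.6, "data_sensitivity": 0.3})
print(zone.permits({"spend": 0.4, "data_sensitivity": 0.1}))
print(zone.violations({"spend": 0.9, "data_sensitivity": 0.1}))
```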

Air Traffic Controllers do not micromanage each plane's throttle or flaps. They do not require call-in approval for every altitude change. They do not read the pilot's logs on every descent. They set corridors, assign headings, manage separation, and intervene only when separation is violated or weather changes. They trust the system and the training. This is exactly how Human-in-the-Lead works for agents. The human sets the lane. The agent drives within it. Intervention happens only at boundaries. The human sleeps soundly because the architecture does the work of staying safe.

Management by Exception: The "Red Phone" Strategy

The system only pages the human when an Exception occurs. Three categories of exception triggers exist:

The Semantic Interceptor detects an intent that sits 50/50 on a boundary, equally likely to be permitted or prohibited.

The Arbiter Agent cannot resolve a conflict between two agents.

A Black Swan event occurs that falls outside the trained vector space entirely.
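The three triggers can be expressed as a single routing check. This sketch rests on assumptions the text does not state: that the Semantic Interceptor exposes a probability that an intent is permitted (so "50/50" means close to 0.5), that the Arbiter Agent reports whether it resolved a conflict, and that "outside the trained vector space" can be approximated by distance to the nearest known reference intent. `Observation`, `exception_reasons`, and both thresholds are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Observation:
    p_permitted: float            # assumed Interceptor output: probability the intent is in bounds
    arbiter_resolved: bool        # assumed Arbiter output: did it settle any inter-agent conflict?
    intent: tuple[float, ...]     # the agent's intent vector


def exception_reasons(obs: Observation, references: list[tuple[float, ...]],
                      ambiguity: float = 0.1, novelty_radius: float = 1.0) -> list[str]:
    """Return why the human should be paged; an empty list means stay silent."""
    reasons = []
    # Trigger 1: the intent sits roughly 50/50 on a boundary.
    if abs(obs.p_permitted - 0.5) <= ambiguity:
        reasons.append("boundary_ambiguity")
    # Trigger 2: the Arbiter Agent could not resolve a conflict.
    if not obs.arbiter_resolved:
        reasons.append("unresolved_conflict")
    # Trigger 3: Black Swan proxy, far from every intent the system has seen.
    if min(math.dist(obs.intent, ref) for ref in references) > novelty_radius:
        reasons.append("out_of_distribution")
    return reasons


known = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
print(exception_reasons(Observation(0.9, True, (0.1, 0.1)), known))
print(exception_reasons(Observation(0.52, False, (5.0, 5.0)), known))
```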

When any of these conditions occur, a human notification is triggered. The human does not monitor 10,000 log entries or watch dashboards obsessively. Instead, the human views a Heat Map of agentic intent, showing where agents are operating relative to their boundaries. Green means safe and autonomous. Yellow means drifting toward a boundary. Red means intervention required now. This visual abstraction collapses noise into signal.
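The Heat Map itself reduces to bucketing each agent's distance from its nearest boundary into three colours and counting. In this sketch the signed margin (positive inside the Green Zone, zero or negative on or past a boundary) is assumed to come from the governance layer, and the 0.1 yellow threshold, the agent IDs, and the function names are hypothetical.

```python
from collections import Counter


def colour(margin: float, yellow_within: float = 0.1) -> str:
    """Map a signed boundary margin to a heat-map colour."""
    if margin <= 0:
        return "red"      # on or past a boundary: intervention required now
    if margin < yellow_within:
        return "yellow"   # inside, but drifting toward a boundary
    return "green"        # safely inside: autonomous, no human attention


def heat_map(readings: list[tuple[str, float]]) -> dict[str, Counter]:
    """Aggregate (agent_id, margin) readings into colour counts per agent."""
    grid: dict[str, Counter] = {}
    for agent, margin in readings:
        grid.setdefault(agent, Counter())[colour(margin)] += 1
    return grid


def needs_attention(grid: dict[str, Counter]) -> list[str]:
    """Agents with any yellow or red readings: the only ones the human looks at."""
    return sorted(a for a, counts in grid.items() if counts["yellow"] or counts["red"])


readings = [("procurement-1", 0.40), ("procurement-1", 0.05),
            ("billing-2", 0.30), ("billing-2", -0.02), ("hr-3", 0.50)]
grid = heat_map(readings)
print(needs_attention(grid))
```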

The human spends time on yellow and red, not green. This reframes the entire relationship with oversight. The human is not managing operations; the human is managing risk. Compare the old model: reading 10,000 log entries, parsing narrative events, hunting for patterns, building mental models of drift, and guessing where intervention is needed. The human is exhausted and reactive, always chasing yesterday's problem. Now compare the new model: glancing at a heat map that shows three yellow alerts and one red flag. The human knows exactly where to look. The human has high confidence in the urgency level. The human can act strategically instead of tactically. Which one allows you to scale? Which one preserves human sanity?

Created with Google Nano Banana Pro

Training the "Safety Substrate"

The human's role shifts to RLHF (Reinforcement Learning from Human Feedback) on Policy, not on individual outputs. The human is no longer correcting one bad response at a time or firefighting individual errors; the human is tuning the governance model itself. This shift in focus changes what human feedback means in an agentic system: every intervention becomes a signal to the system about its own boundaries.

When a human resolves an Exception, that decision is instantly encoded back into the Logit Warping weights from Article 3, making the system smarter for the next flight. Every human intervention is a training signal. Over time, the number of exceptions decreases as the governance substrate absorbs the human's judgment. The system learns not only from a static training set but from live operational feedback, adapting to the human's risk tolerance and values as they are expressed in real decisions. Because that learning is rooted in the decisions the human actually makes, the agent can improve faster than it would through periodic offline retraining alone.
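One way to picture "encoding a decision into Logit Warping weights" is an additive bias on the agent's action logits, nudged toward whatever the human chose. This is an assumed mechanism for illustration, not the Article 3 design: `LogitWarp`, the context key, and the learning rate are hypothetical. Each resolved Exception takes one gradient step of cross-entropy loss on the bias; for softmax logits that gradient is simply probability minus target.

```python
import math

ACTIONS = ("approve", "reject")


def softmax(logits: list[float]) -> list[float]:
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


class LogitWarp:
    """Hypothetical per-context additive bias applied to the agent's action logits."""

    def __init__(self, lr: float = 0.5):
        self.lr = lr
        self.bias: dict[str, list[float]] = {}

    def warp(self, context: str, logits: list[float]) -> list[float]:
        """Shift raw logits by the bias learned for this context (zero if none yet)."""
        b = self.bias.get(context, [0.0] * len(logits))
        return [x + bi for x, bi in zip(logits, b)]

    def learn(self, context: str, logits: list[float], human_choice: str) -> None:
        """One cross-entropy gradient step on the bias toward the human's resolution."""
        probs = softmax(self.warp(context, logits))
        target = [1.0 if action == human_choice else 0.0 for action in ACTIONS]
        b = self.bias.setdefault(context, [0.0] * len(logits))
        for i in range(len(b)):
            b[i] -= self.lr * (probs[i] - target[i])  # dLoss/dbias_i = p_i - y_i


warp = LogitWarp()
raw = [0.0, 0.0]                      # a 50/50 case: approve and reject equally likely
print(softmax(warp.warp("borderline-vendor", raw)))   # [0.5, 0.5]
warp.learn("borderline-vendor", raw, "approve")
print(softmax(warp.warp("borderline-vendor", raw)))   # approve now favoured
```

Repeated resolutions in the same context keep pushing the bias in the human's direction, so the warped probabilities drift away from 0.5; in this sketch, that is what "fewer exceptions over time" looks like.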

Imagine a procurement agent scoring vendors at a critical threshold. The human resolves a boundary case in which the vendor's score on the evaluation matrix lands exactly on the decision line: a 50/50 call. The human approves the vendor based on a relationship factor the model did not capture. That decision updates the scoring model so similar borderline cases are handled automatically next time. The governance substrate has absorbed one more increment of human judgment. The next time a boundary case with similar characteristics appears, the system makes the call, and the human never sees that pattern again. Risk falls. Decisions accelerate. The human has trained the system without writing a single line of code.
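The borderline-vendor story can also be sketched as precedent reuse: store each human resolution with the features of the case, and let the agent apply the nearest stored decision when a new case is close enough. This is a deliberately simple, swapped-in technique (nearest-neighbour lookup within a radius), not the series' scoring-model update; `PrecedentStore`, the feature layout (evaluation score, relationship strength), and the radius are all hypothetical. The relationship factor only helps if some feature records it, which is exactly the gap the human's decision exposed.

```python
import math
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PrecedentStore:
    """Hypothetical memory of human-resolved boundary cases."""
    radius: float = 0.1
    cases: list[tuple[tuple[float, ...], str]] = field(default_factory=list)

    def record(self, features: tuple[float, ...], decision: str) -> None:
        """Store a human's resolution of an Exception."""
        self.cases.append((tuple(features), decision))

    def resolve(self, features: tuple[float, ...]) -> Optional[str]:
        """Reuse the nearest precedent within `radius`; None means page the human."""
        best, best_dist = None, math.inf
        for past, decision in self.cases:
            d = math.dist(features, past)
            if d <= self.radius and d < best_dist:
                best, best_dist = decision, d
        return best


store = PrecedentStore()
store.record((0.50, 0.90), "approve")   # 50/50 score, strong relationship: human approved
print(store.resolve((0.51, 0.88)))      # similar case: handled automatically
print(store.resolve((0.50, 0.10)))      # weak relationship: still goes to the human
```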

Reclaiming the Strategic High Ground

The Ferrari metaphor has evolved. The human is not the brakes; the human is the Navigator. You decide where the car is going. You set the destination and the constraints on the journey. The Governance-by-Design architecture ensures you get there without crashing. You are in the lead, charting the path forward. The agent executes the navigation, staying true to your intent and within your boundaries. Speed and direction are human choices. Safety and consistency are architectural properties.

Being in the lead means you spend 5 percent of your time on oversight and 95 percent on strategy, rather than the inverse. You are no longer babysitting your AI. You are directing it. You are no longer approving its work. You are designing the space in which it works. This is the Executive Bottom Line: Human-in-the-Lead works because it respects the scarcity of human attention while unlocking the scaling potential of agentic autonomy. The human moves from the slowest link in the chain to the strategic director of the system.

The Semantic Interceptor, the Identity Gateway, the Agentic Service Bus, and now the Human-in-the-Lead model compose into a complete governance stack. Each article in this series builds a layer. The foundations establish how agents can speak, who can speak, where messages flow, and now how humans stay in strategic control without drowning in approval overhead. The next article will bring all these components together into a unified reference architecture, showing how Human-in-the-Lead fits with the foundational systems that make safe, autonomous agency possible at scale. Until then, recognize this truth: the agents have not come to replace you. They have come to free you from the work that buries you.