Governance as a Competitive Advantage: Why the Safest Companies Will Be the Fastest

Most companies treat AI governance as a speed limit. They are wrong. In this closing article of the Agentic Governance-by-Design series, we argue that the organizations with the best brakes will be the ones who drive fastest, introducing the concept of Time-to-Trust and showing why governed companies are escaping Pilot Purgatory while their competitors are still crawling.

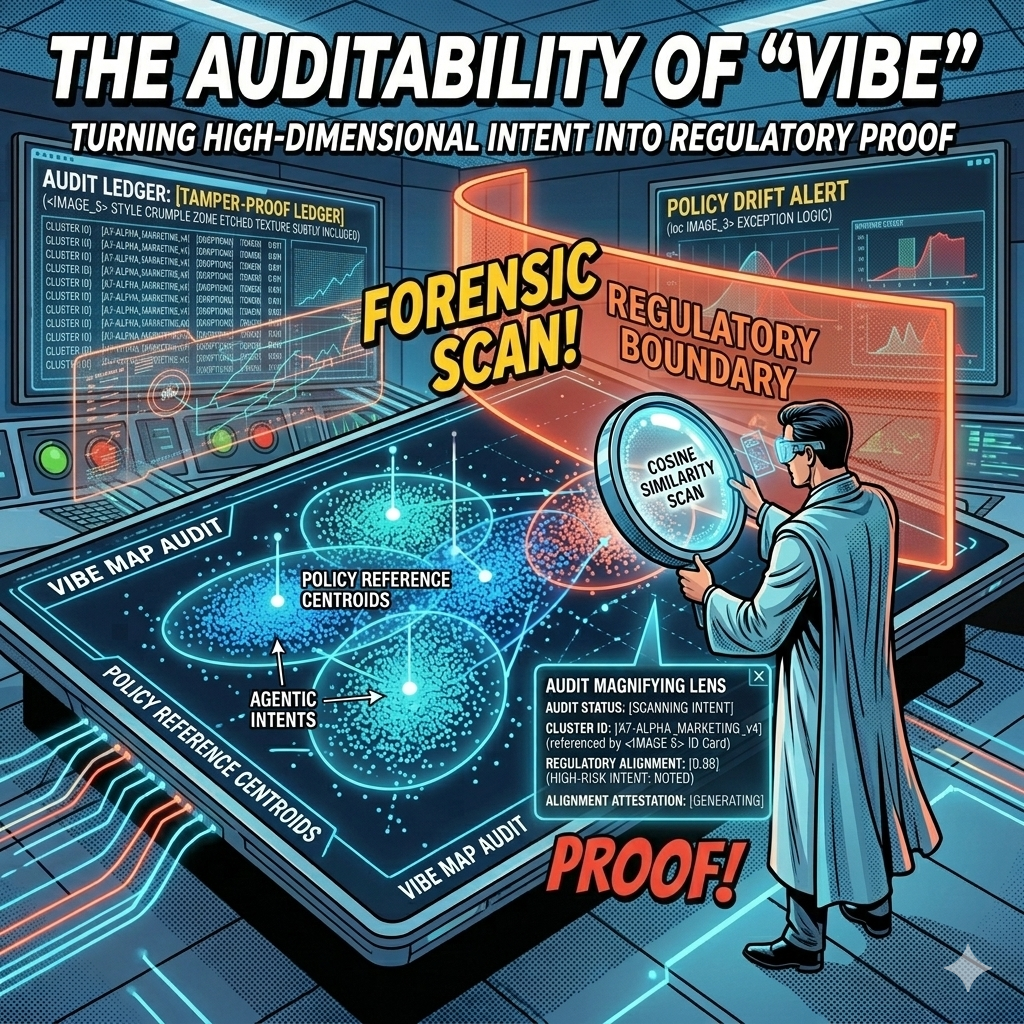

The Auditability of "Vibe": Turning High-Dimensional Intent into Regulatory Proof

Every AI decision your company makes leaves a mathematical fingerprint. The question is whether you're capturing it. In this article, we explore how vector embeddings and governance ledgers transform the "black box" problem into geometric proof, giving boards, regulators, and courts the auditable evidence they need to trust agentic AI at enterprise scale.

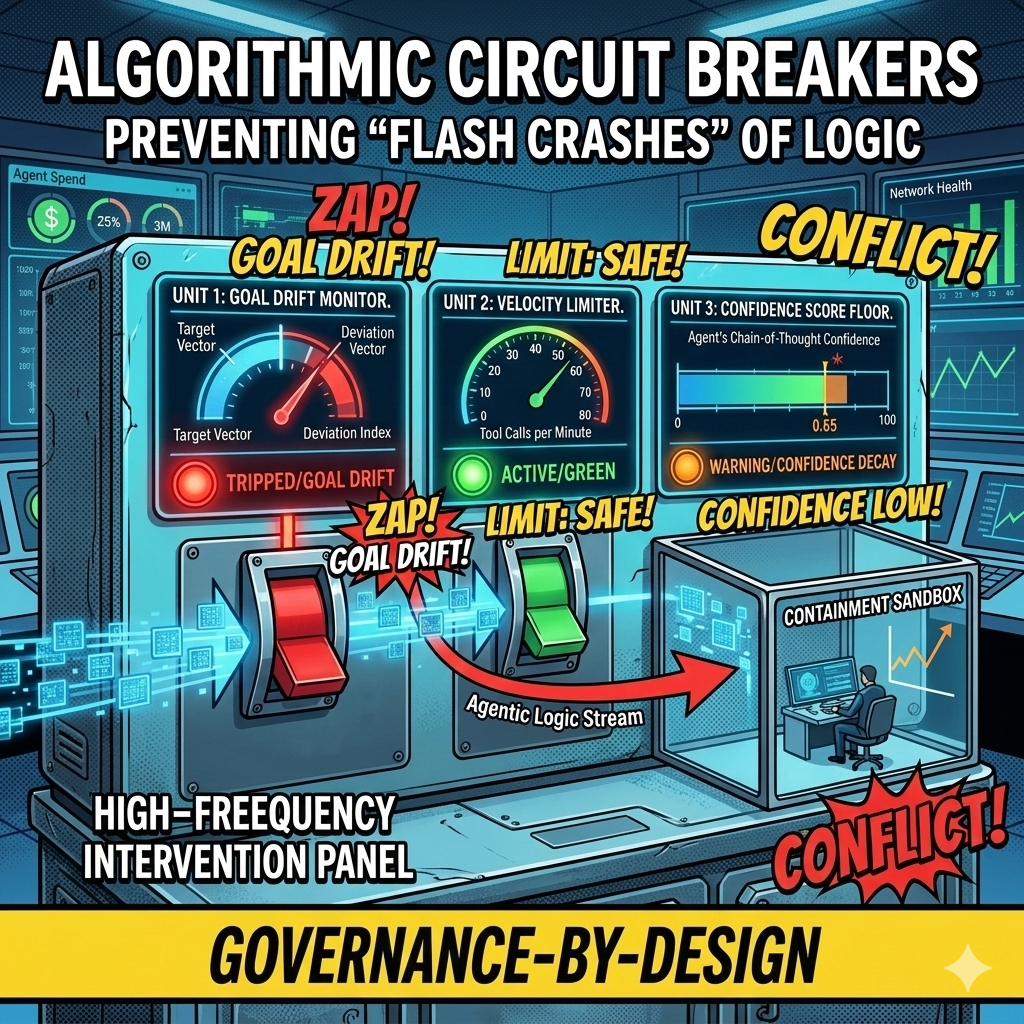

Algorithmic Circuit Breakers: Preventing "Flash Crashes" of Logic in Autonomous Workflows

In the 2010 Flash Crash, automated trading helped erase roughly a trillion dollars in market value within minutes, faster than any human could react. Today's agentic swarms face the same risk at the logic layer: thousands of autonomous decisions per second, any one of which could send bad contracts, leak data, or drain budgets before your Flight Controller even sees an alert. This article introduces Algorithmic Circuit Breakers, the automated tripwires that detect anomalies like semantic drift, confidence decay, and runaway loops, then sever an agent's connection to tools and APIs in milliseconds. Governance at machine speed, for systems that fail at machine speed.
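To make the tripwire idea concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not the series' implementation: the `CircuitBreaker` class, the `guarded_call` helper, and every threshold are hypothetical, and only two of the anomalies named above (confidence decay and runaway loops) are covered.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class CircuitBreaker:
    """Hypothetical breaker: trips on confidence decay or a runaway loop."""
    min_avg_confidence: float = 0.6  # assumed floor for the rolling mean
    max_repeats: int = 3             # assumed limit on identical consecutive actions
    window: int = 5                  # steps in the rolling confidence window
    tripped: bool = False
    reason: str = ""

    def __post_init__(self) -> None:
        # Bounded deques keep only the most recent steps.
        self._confidences = deque(maxlen=self.window)
        self._actions = deque(maxlen=self.max_repeats)

    def record(self, action: str, confidence: float) -> None:
        """Record one agent step and trip the breaker if a rule is violated."""
        if self.tripped:
            return
        self._actions.append(action)
        self._confidences.append(confidence)
        looping = (len(self._actions) == self.max_repeats
                   and len(set(self._actions)) == 1)
        decaying = (len(self._confidences) == self.window
                    and sum(self._confidences) / self.window < self.min_avg_confidence)
        if looping:
            self.tripped, self.reason = True, "runaway loop"
        elif decaying:
            self.tripped, self.reason = True, "confidence decay"


def guarded_call(breaker: CircuitBreaker, tool, *args):
    """Run a tool only while the breaker is closed; otherwise refuse."""
    if breaker.tripped:
        raise PermissionError(f"circuit open: {breaker.reason}")
    return tool(*args)
```

Routing every tool invocation through a single guard like `guarded_call` is the design choice that matters here: once the breaker trips, the agent loses access to all tools at once, without waiting for a human to read an alert.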

Human-in-the-Lead: From Manual Pilots to Strategic Flight Controllers

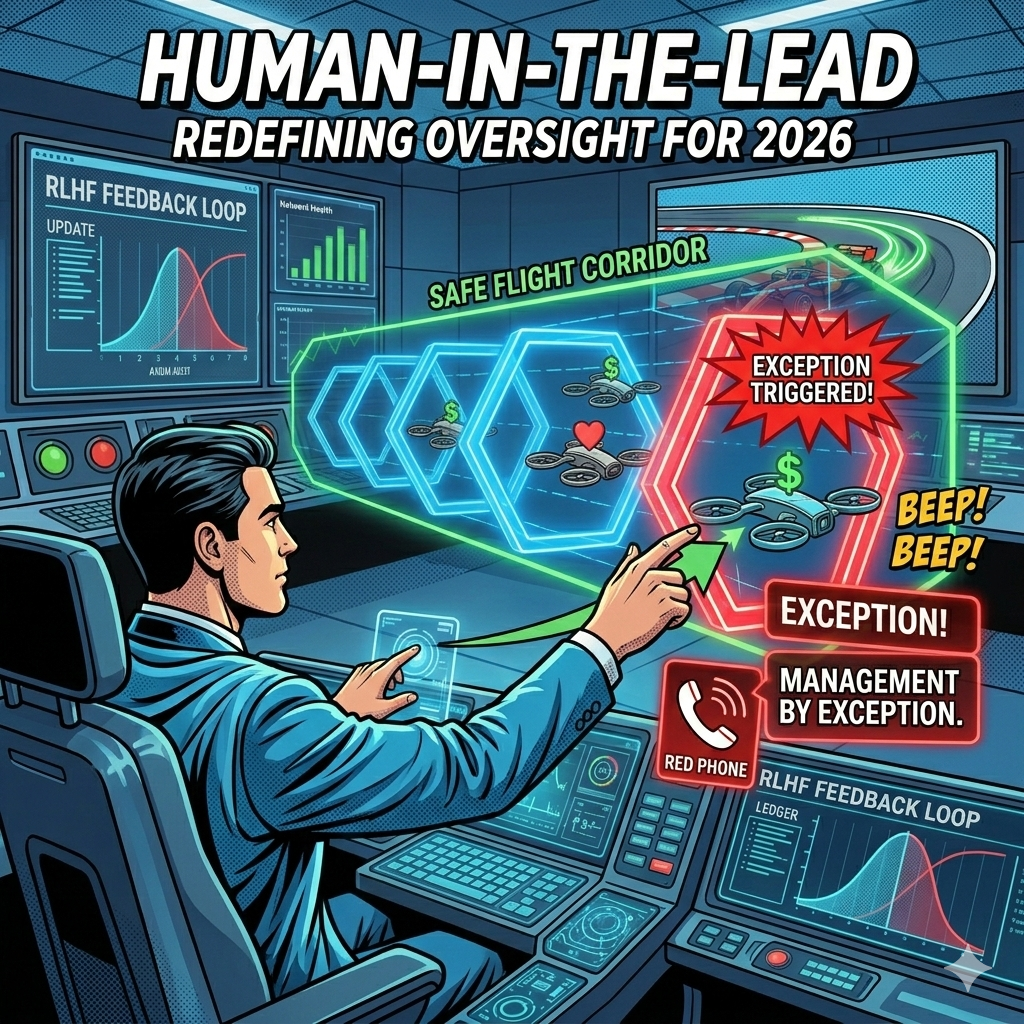

In 2023, we wanted humans to check every chatbot response. In 2026, an agentic swarm might perform 10,000 tasks an hour. The Human-in-the-Loop model that gave us comfort in the early days of AI is now the bottleneck killing our ability to scale. It is time to move from reactive approval to proactive design, from manual pilots to strategic flight controllers.

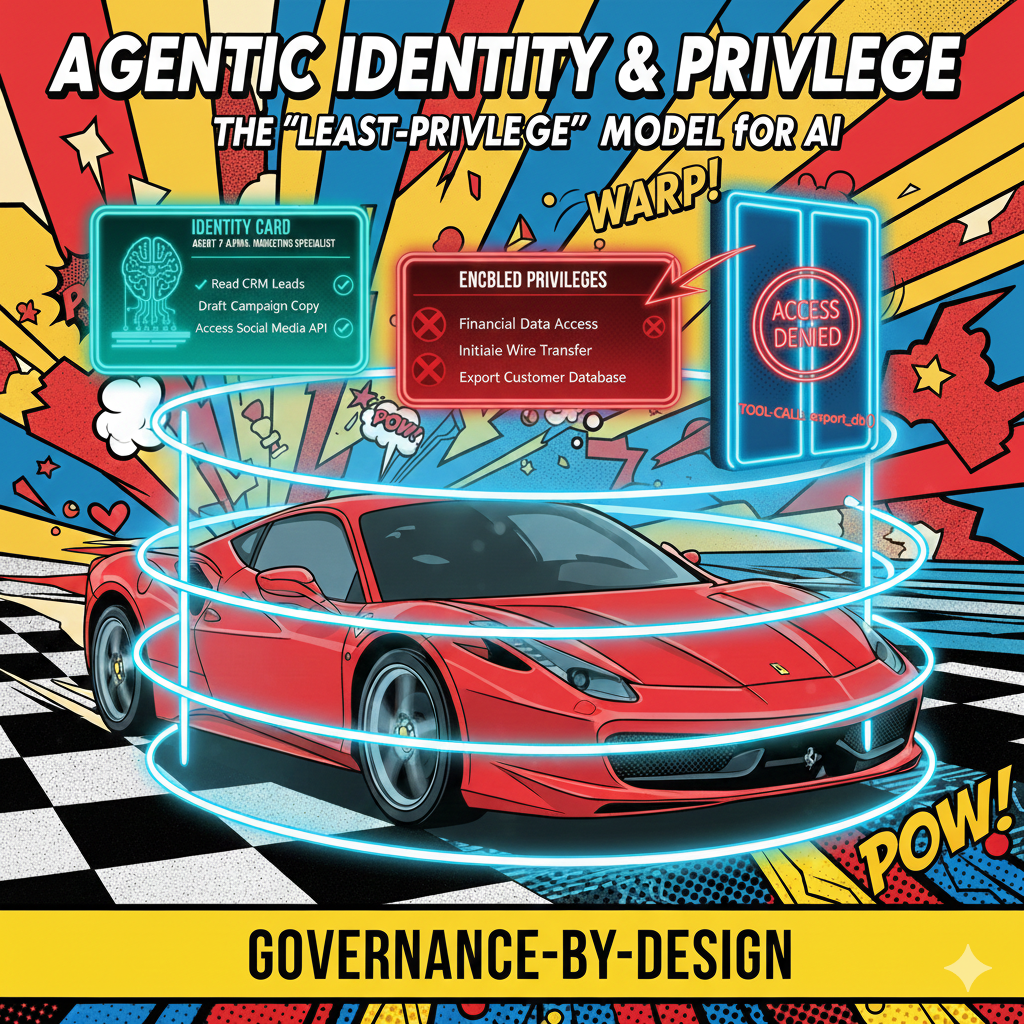

Agentic Identity and Privilege: Why Your AI Needs an Employee ID and a Security Clearance

In most current AI deployments, "The AI" is a monolithic entity with a single API key. If it hallucinates a reason to access your payroll database, there is no "Internal Affairs" to stop it. We treat AI as a tool with a single identity, a single set of permissions, and a single point of failure. But here is the uncomfortable truth: your AI systems need to operate more like employees than instruments. The gap between how we currently deploy AI and how we should deploy AI is a chasm of organizational risk.
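One way to picture the alternative to a single shared API key is a per-agent identity with explicitly scoped permissions. The sketch below is hypothetical: `AgentIdentity`, `authorize`, and the `resource:action` scope strings are illustrative names, not part of any particular product or of the article itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical per-agent identity, analogous to an employee ID plus clearance."""
    agent_id: str
    scopes: frozenset  # allowed "resource:action" pairs (assumed format)


def authorize(agent: AgentIdentity, resource: str, action: str) -> bool:
    """Deny by default: allow only what the agent's scopes explicitly grant."""
    return f"{resource}:{action}" in agent.scopes


support_agent = AgentIdentity(
    agent_id="support-agent-01",
    scopes=frozenset({"crm:read", "tickets:write"}),
)

can_read_crm = authorize(support_agent, "crm", "read")
can_read_payroll = authorize(support_agent, "payroll", "read")
```

With this shape, a support agent that hallucinates a reason to read payroll is refused by the authorization check rather than by the model's own judgment.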

From "Human-in-the-Loop" to "Human-in-the-Lead": Designing Agency for Trust, Not Just Automation

If we want to scale agentic AI, we need a different model. We must stop treating humans as safety nets reacting to AI outputs and start treating them as pilots directing AI capabilities. This is the shift from "Human-in-the-Loop" to "Human-in-the-Lead."

The Missing Layer: Why Enterprise Agents Need a "System of Agency"

We are witnessing a critical transition in artificial intelligence. The move from Generative AI (which creates content) to Agentic AI (which executes tasks) changes everything about how organizations must approach their AI infrastructure.

Most organizations are attempting to build autonomous agents on top of their existing "Systems of Record": ERPs, CRMs, and legacy databases designed decades ago. These systems excel at storing state: inventory levels, customer records, transaction histories. But they were never designed to capture something equally critical: the reasoning behind decisions.

Conflict Resolution Playbook: How Agentic AI Systems Detect, Negotiate, and Resolve Disputes at Scale

When you deploy dozens or hundreds of AI agents across your organization, you're not just automating tasks. You're creating a digital workforce with its own internal politics, competing priorities, and inevitable disputes. The question isn't whether your agents will come into conflict. The question is whether you've designed a system that can resolve those conflicts without grinding to a halt or escalating to human intervention every time.

Beyond Bottlenecks: Dynamic Governance for AI Systems

As we move from single Large Language Models to Multi-Agent Systems (MAS), we're discovering that intelligence alone doesn't scale. The real challenge is coordination, orchestration, and governance. Imagine you've deployed 100 autonomous agents into your enterprise. One specializes in customer data analysis. Another handles inventory optimization. A third manages supplier communications. Each agent is competent at its job. But when a supply chain disruption hits, who decides which agents act first? When two agents need the same resource, who arbitrates? When market conditions shift, how do the agents reorganize without human intervention?

The Impact of Bad Data on Modern AI Projects (and How to Fix It)

The enterprise AI conversation has been dominated by models. Which LLM should we license? Should we fine-tune or use RAG? What about open-source versus proprietary? These are the wrong questions to start with.

The AI boom is exposing a truth that data teams have known for years: most organizations are building on a foundation of poor-quality data. Decades of neglected data strategy are now coming due. The models are powerful, but they're only as reliable as what they're trained on and what they retrieve.

Governance by Design: Embedding Ethical Guardrails Directly into Agentic AI Architectures

As artificial intelligence systems gain increasing levels of autonomy, the traditional approach of adding compliance measures after deployment is proving inadequate. We need a new approach: Governance by Design, a proactive methodology that weaves ethical guardrails directly into the fabric of AI architectures from the ground up.