Algorithmic Circuit Breakers: Preventing "Flash Crashes" of Logic in Autonomous Workflows

The High-Frequency Risk of AI

In 2010, high-frequency trading algorithms caused a Flash Crash, erasing a trillion dollars in market value within minutes before humans could even blink. The lesson was stark: at sufficient velocity and scale, algorithmic systems can inflict damage faster than human oversight can contain it. The traders and risk managers watching those screens had no chance to intervene. By the time the first alerts fired, the market had already hemorrhaged billions.

In 2026, an agentic swarm could theoretically execute thousands of logical errors per second, sending out bad contracts, changing prices, or leaking data, long before a human Flight Controller (from Article 6 in the series: Human-in-the-Lead: From Manual Pilots to Strategic Flight Controllers) can intervene. The Management by Exception model from Article 6 assumes the system pages the human in time. But what if the damage happens faster than any human can respond? What if an agent mistakes a support ticket for a billing instruction and processes 500 refunds before anyone notices? What if a procurement agent gets confused about currency conversion and locks in a purchase order for ten thousand times the intended amount? The difference between a human trader and an autonomous agent is that the agent does not hesitate, does not double-check, and does not wait for confirmation.

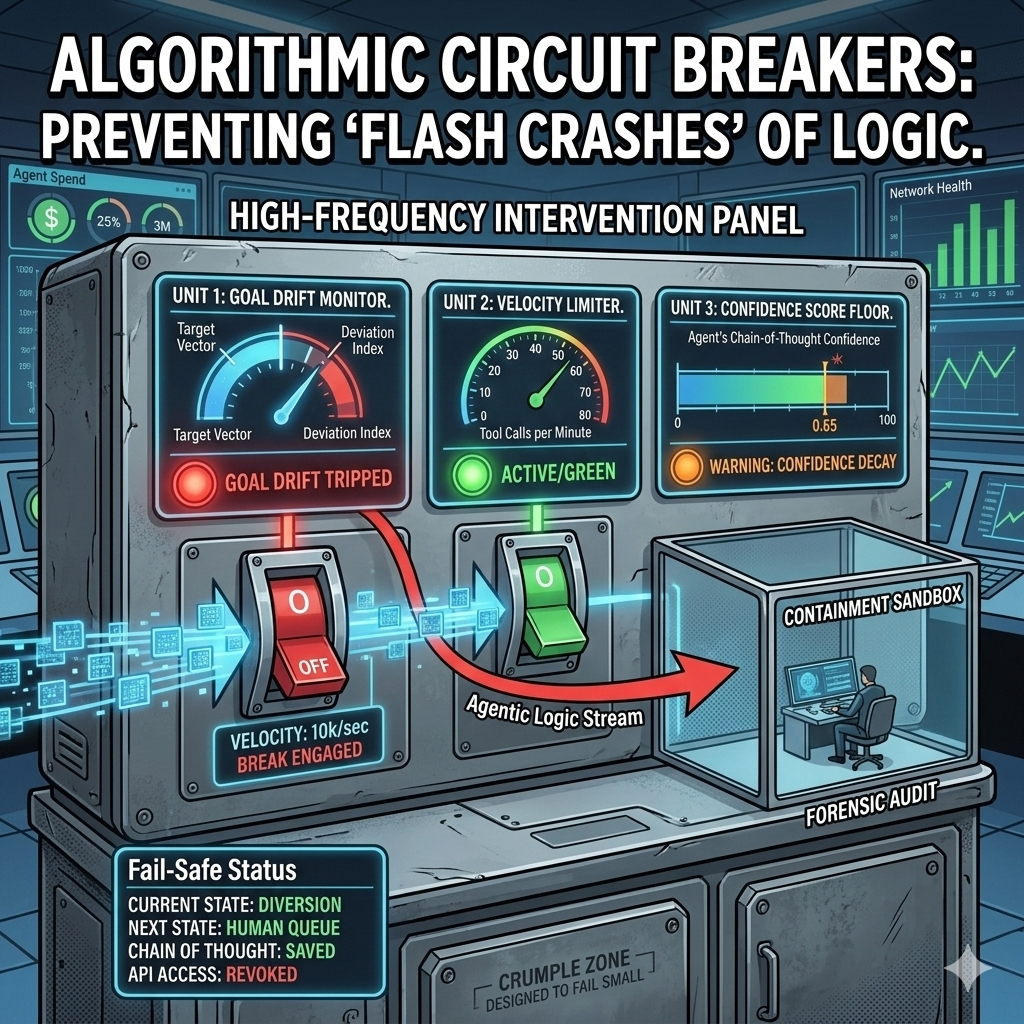

We need Algorithmic Circuit Breakers, automated tripwires that instantly sever the agent's connection to tools and APIs when specific "Logic Volatility" thresholds are crossed. This is the automated safety net beneath the Human-in-the-Lead model. Unlike a human supervisor who can be blindsided, a circuit breaker watches the system continuously and acts with zero latency. It is not a replacement for human oversight; it is a structural layer that ensures humans have time to think before catastrophe unfolds. The circuit breaker is the system's immune system, detecting and containing threats at machine speed so that human judgment can operate at human pace.

The "Tripwire" Metrics: What Triggers a Break?

Unlike a manual stop, these are triggered by mathematical anomalies. The following four metrics form the detection layer:

1. Semantic Goal Drift: The agent's intent vector is slowly moving away from the original mission. Example: a Support Agent starts philosophizing about its own existence instead of resolving tickets. The agent's hidden states begin to drift away from the semantic space that was established during training. This drift is often subtle at first, a few tokens of digression here and there, but over time it accumulates. The agent might start adding editorial commentary to customer interactions, questioning company policies, or engaging in metacognitive reasoning about its own limitations. The detector watches for directional movement in the intent space and triggers when the angle diverges beyond a safety threshold.

2. Confidence Decay: The Confidence Score of the agent's internal Chain-of-Thought drops below a safety floor (for example, below 0.65 on a normalized scale). When the agent is uncertain about its own reasoning, it should not be allowed to act. This metric captures a different kind of signal: the agent knows something is wrong. The agent's reasoning becomes muddled, or it encounters ambiguity it cannot resolve. Rather than pushing through with low confidence, the circuit breaker intervenes. This prevents the agent from making decisions on thin ice.

3. Recursive Feedback Loops: Two agents (connecting to Article 5's ASB) are passing the same error back and forth, consuming tokens exponentially without progress. The ASB's token spend ledger detects the spiral. Agent A calls Agent B, Agent B encounters an error and calls Agent A for help, Agent A encounters the same error and calls Agent B again. This loop can burn through a month's worth of token budget in seconds. The circuit breaker detects the repeating pattern and kills both agents, saving the system from cascading costs and system resource exhaustion.

4. Velocity Spikes: The agent is attempting to call an Action Tool (like a wire transfer or email blast) at a frequency that suggests a runaway process. Normal behavior is 5 tool calls per minute; the agent is suddenly attempting 500. This metric catches the classic runaway loop where an agent has gotten stuck in a tight action-reaction cycle and is firing off API calls as fast as the system can process them. The detector watches the moving average of tool calls per minute and triggers when the rate exceeds the safety envelope by a factor of 10 or more.

These metrics work together. A single anomaly might be noise. Two or more anomalies in combination are a strong signal that something has gone wrong. The circuit breaker does not flip on a single metric; it requires corroboration. This prevents false positives while ensuring that genuine threats are caught with speed. The decision logic is AND, not OR, for the first escalation. Only if a second anomaly appears within a narrow time window does the system move to Stage 1 throttling.

The Three Stages of a "Trip"

Governance should not always be an off switch; it should be graduated. The following three stages allow the system to respond proportionally to the severity of the detected anomaly:

Stage 1, The Throttle (Yellow): The agent's token-generation speed is slowed by 90% to allow the Semantic Interceptor (from Article 3) more time to evaluate intent. The agent can still operate, but slowly enough for governance to keep pace. At this stage, the agent continues processing its current task, but output is constrained to 10 tokens per second instead of 100. This buys time. The Semantic Interceptor gets a longer runway to check whether the agent's reasoning is still sound. If the anomalies disappear within 30 seconds and the agent returns to normal behavior, the throttle is automatically lifted. If the anomalies persist, the system escalates to Stage 2.

Stage 2, The Isolation (Orange): The agent can continue thinking but its Write permissions to external databases and APIs are revoked. It is moved to a Sandbox. It can reason but cannot act. This prevents damage while preserving the agent's state for analysis. The agent's read-only tools remain available so it can gather information, but all insert, update, delete, and send operations are blocked. If the agent tries to call a blocked tool, it receives a simulated success response so that its logic flow does not break, but nothing actually happens. This sandbox mode allows the agent to continue reasoning through its problem while the human team investigates.

Stage 3, The Hard Trip (Red): The agent's session is killed entirely, and its state is saved for Forensic Audit by the human lead (connecting to Article 6). The system pages the Flight Controller with the full chain of intent and the tripwire metrics that triggered the break. The entire execution context is frozen and stored. This includes the agent's current task, the chain of reasoning, the embeddings it was using, and the specific metric values that crossed the threshold. Nothing is lost; nothing is reset.

The graduated approach matters because not every anomaly warrants a kill. Throttling catches drift early. Isolation contains active threats. The hard trip is the last resort. This mirrors how physical circuit breakers work: a fuse blows before the whole panel burns. Each stage buys time, either for the Semantic Interceptor to recompute, for a human to notice, or for the system to contain the blast radius. The escalation from yellow to orange to red is automatic, but the descent back down is always human-controlled. Only the Flight Controller can clear an agent for return to normal operation.

Created with Google Nano Banana Pro

Designing for "Safe States"

When a circuit breaker trips, what happens next? The system must have pre-defined Safe States. The Fail-Safe Default is "Reset to last known human-validated state" or "Transfer all active tasks to a human queue." The system does not leave work in limbo. It routes unfinished tasks to a safe destination. If an agent was in the middle of processing a batch, the partially processed items are returned to the queue for human review. If the agent was engaged in a multi-step workflow, the workflow is paused and the state is preserved so a human can resume from exactly that point.

One tripped agent must not bring down the entire system. This connects to Article 4's identity isolation: because each agent has bounded permissions and a distinct identity, tripping one agent does not cascade into a system-wide failure. The blast radius is contained. A procurement agent that trips at Stage 3 has its active vendor evaluations frozen and routed to a human queue. The other 15 agents in the ecosystem continue operating normally. The tripped agent's state is preserved for forensic review. No data is lost. No other workflows are affected. This is the advantage of the Zero-Trust model and the Identity Gateway from Article 4; circuit breaking is not system-wide; it is surgical. The Identity Gateway ensures that a credential compromise or logic failure in one agent cannot spread to others.

The Psychology of Safety

Circuit breakers are the crumple zones and airbags. You hope you never use them, but knowing they exist is the only reason you are allowed to drive on the highway. In the same way, an organization cannot confidently deploy agentic systems without knowing that runaway processes will be caught before they cause billions of dollars in damage. The circuit breaker is not an option; it is a prerequisite for scale.

Trusting an agentic system requires knowing that the system will "fail small" rather than "crash big." Circuit breakers are the structural guarantee that failure is bounded, contained, and recoverable. This is not paranoia; this is engineering maturity. Every critical system in the physical world, from aviation to nuclear power, operates with multiple layers of automatic shutdown. Agentic systems must do the same. The circuit breaker is not defensive thinking; it is honest thinking about what goes wrong.

With the Semantic Interceptor, Identity Gateway, Agentic Service Bus, Human-in-the-Lead model, and now Algorithmic Circuit Breakers, the governance stack is nearly complete. The coordination of these layers forms a defense-in-depth system where no single point of failure can cascade into a catastrophe. The Semantic Interceptor watches intent. The Identity Gateway watches permissions. The Agentic Service Bus watches token spend and collusion. The Human-in-the-Lead model watches outcomes. The Circuit Breakers watch behavior anomalies. Together, they form a comprehensive shield. The final article in this series will integrate these components into a unified reference architecture for enterprise agentic governance. That architecture will show how intent flows, how oversight operates at scale, and how humans and machines can collaborate with confidence. Until then, the circuit breaker stands as the last line of defense: fast, automatic, and unforgiving to logic that has gone awry.