Governance Beyond Compliance: What Agentic Governance Actually Requires

This is the fifth article in Arion Research's "Future Enterprise" series, exploring how AI agents are restructuring enterprise technology. The series examines the architectural layers, competitive dynamics, and strategic decisions that will define the next era of enterprise software.

Ask any enterprise software vendor whether they have governance for their AI agents, and the answer is almost always yes. They will point to role-based access controls, audit logs, compliance dashboards, and security monitoring. They will describe guardrails that prevent agents from accessing unauthorized data or executing prohibited actions. They will reference their SOC 2 certification and their alignment with NIST frameworks.

All of that is necessary. None of it is sufficient.

The problem is not that enterprises lack security and compliance controls for their agents. The problem is that they are treating security and compliance as if they were governance. They are not the same thing. Security answers the question: is this agent protected from threats? Compliance answers the question: does this agent meet regulatory requirements? Governance answers a broader set of questions: should this agent be taking this action, under these circumstances, with this level of autonomy, given the current business context, risk posture, and organizational policy? Security and compliance are components of governance. They are not substitutes for it.

Throughout this series, I have identified governance as one of the critical vertical services in the Future Enterprise architecture, a service that spans the Enterprise Platform, the Agentic Platform, and the Collaboration layer. In the previous article, I examined why agentic identity is the prerequisite that most vendors are neglecting. Identity tells you who the agent is and what it is authorized to do. Governance tells you whether it should, right now, in this context, given everything the organization knows about risk, policy, and business priorities.

This article lays out what a purpose-built agentic governance architecture looks like: the layers, the capabilities, and the design principles that distinguish real governance from the security-plus-compliance approach that most vendors are offering today.

Why Security and Compliance Are Not Enough

To understand the governance gap, consider a concrete scenario. An enterprise procurement agent has proper security controls: it authenticates via the organization's identity provider, it has role-based access limited to procurement functions, and its actions are logged in an audit trail. It also meets compliance requirements: it operates within the organization's data residency rules, it follows the approval thresholds defined in corporate policy, and it generates the documentation required by auditors.

Now the agent encounters this situation: a supplier offers an accelerated delivery option at a 15% premium. The agent has the authority to approve the premium (it falls within its spending threshold). It has the access to modify the purchase order (it has the right role). The action is compliant (the premium is within policy limits and properly documented). But should it take the action?

The answer depends on context that security and compliance cannot evaluate. Is the organization under a cost-reduction mandate this quarter? Is this supplier on the risk watchlist for delivery reliability? Has the requesting department already exceeded its discretionary spending budget? Is there a competing purchase order from another department for the same supplier capacity? Did a similar accelerated delivery decision last month result in quality issues?

A human procurement manager would factor in all of this context. They would check with finance, consult the supplier relationship history, consider the broader organizational priorities, and make a judgment call. An agent operating within a security-and-compliance-only framework has no mechanism for any of this. It sees a valid action within its authorized scope and executes it. The action is secure, compliant, and wrong.

This is the governance gap. Security controls access. Compliance enforces rules. Governance exercises judgment. And as agents take on more autonomous, consequential actions, the absence of judgment becomes increasingly expensive.
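To make the gap concrete, here is a minimal Python sketch of the procurement scenario under a security-and-compliance-only framework. Every name and number in it (`PurchaseChange`, `is_authorized`, `is_compliant`, the spending and premium limits) is a hypothetical stand-in for illustration, not a reference to any particular product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PurchaseChange:
    """A proposed change to a purchase order (hypothetical shape)."""
    agent_role: str
    base_amount: float
    premium_pct: float  # e.g. 0.15 for a 15% premium
    documented: bool


# Illustrative limits only; a real deployment would load these from policy.
ALLOWED_ROLES = {"procurement"}
SPENDING_THRESHOLD = 50_000.00
MAX_PREMIUM_PCT = 0.20


def is_authorized(change: PurchaseChange) -> bool:
    """Security view: does the agent hold the right role, within its limit?"""
    total = change.base_amount * (1 + change.premium_pct)
    return change.agent_role in ALLOWED_ROLES and total <= SPENDING_THRESHOLD


def is_compliant(change: PurchaseChange) -> bool:
    """Compliance view: is the premium within policy and documented?"""
    return change.premium_pct <= MAX_PREMIUM_PCT and change.documented


def security_and_compliance_only(change: PurchaseChange) -> str:
    """Executes anything that passes both checks; nothing asks 'should we?'."""
    if is_authorized(change) and is_compliant(change):
        return "execute"
    return "block"


# The accelerated-delivery offer: a 15% premium on a 40,000 order.
change = PurchaseChange("procurement", 40_000.00, 0.15, documented=True)
decision = security_and_compliance_only(change)
```

Neither function takes the cost-reduction mandate, the supplier watchlist, or the department's remaining budget as an input, so the decision cannot depend on any of them.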

The Five Layers of Agentic Governance

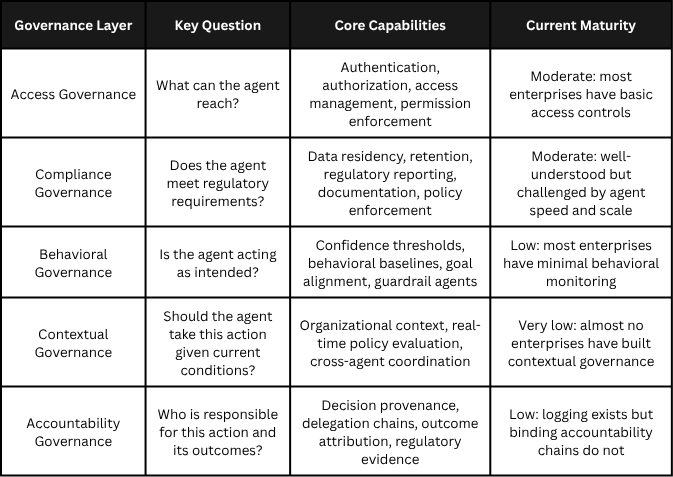

A purpose-built agentic governance architecture requires five distinct layers, each addressing a different aspect of how agents are managed, monitored, and directed. Most enterprises today have pieces of the first two layers. Almost none have built the remaining three.

Layer 1: Access Governance

This is the layer most enterprises have in some form. Access governance controls what agents can reach: which systems, which data, which APIs, which tools. It includes authentication (verifying the agent's identity), authorization (defining what the agent is permitted to do), and access management (enforcing those permissions at runtime).

Access governance is necessary but insufficient on its own for the same reason that giving an employee a building keycard does not constitute managing their work. Knowing what an agent can access tells you nothing about whether it should access those resources for a particular task, at a particular time, under particular circumstances. Access governance defines the boundaries. It does not govern the decisions made within those boundaries.

Layer 2: Compliance Governance

Compliance governance ensures that agent actions meet regulatory and policy requirements. It includes data residency enforcement, retention and deletion policies, regulatory reporting, industry-specific rules (financial services, healthcare, government), and documentation requirements for auditors.

This layer is well understood because it mirrors what enterprises already do for human workers and traditional software systems. The challenge with agents is scale and speed: an agent may execute thousands of compliance-relevant actions per day, and the compliance governance layer needs to evaluate each one without creating a performance bottleneck.

Singapore's IMDA Model AI Governance Framework for Agentic AI, released at Davos in January 2026, provides useful guidance here. It recommends that organizations assess and bound risks upfront by selecting appropriate use cases and placing limits on agents' autonomy, access to tools, and access to data. This is sound compliance governance. But it is still only one layer of what enterprises need.

Layer 3: Behavioral Governance

This is where most enterprise governance architectures have a significant gap. Behavioral governance monitors and constrains how agents act, not just what they can access or whether their actions are compliant. It addresses questions like: is the agent's decision-making pattern consistent with organizational intent? Is the agent exhibiting drift from its expected behavioral baseline? Is the agent's confidence level appropriate for the action it is about to take?

Behavioral governance requires several capabilities that most enterprises have not built:

Confidence thresholds. Agents should not treat all decisions equally. A procurement agent approving a routine reorder from an established vendor is a low-uncertainty decision. The same agent evaluating a new supplier in a market it has not operated in before is a high-uncertainty decision. Behavioral governance sets confidence thresholds that determine when an agent can act autonomously, when it should seek additional information, and when it must escalate to a human. The thresholds should be dynamic, adjusting based on the stakes, the novelty of the situation, and the agent's track record.

Behavioral baselines. Over time, each agent develops observable patterns: typical transaction sizes, common decision paths, characteristic interaction patterns. Behavioral governance establishes these baselines and flags deviations. This is different from security anomaly detection, which looks for malicious behavior. Behavioral governance looks for well-intentioned behavior that has drifted outside the organization's expectations, which is often a more subtle and more costly problem.

Goal alignment verification. Agents optimize for objectives. If those objectives are poorly specified, or if the agent's optimization produces unintended side effects, the results can be technically correct but organizationally harmful. A customer service agent optimizing for case resolution speed might close tickets prematurely. A scheduling agent optimizing for utilization might create unsustainable workloads. Behavioral governance continuously verifies that the agent's actions align with the organization's actual goals, not just the metrics the agent was given.

Guardrail agents. An emerging pattern is the deployment of specialized agents whose sole purpose is to monitor other agents. These guardrail agents evaluate inputs, outputs, and reasoning chains in real time, applying governance policies that would be too complex or too dynamic to encode as static rules. The guardrail agent pattern is promising but introduces its own governance question: who governs the guardrail agent?
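Two of these capabilities, dynamic confidence thresholds and behavioral baselines, are simple enough to sketch. The Python below is one possible shape, not a reference implementation: the function names, the weights, the assumption that all inputs are normalized to the range 0 to 1, and the z-score test for drift are all illustrative choices of mine.

```python
from statistics import mean, stdev


def required_confidence(stakes: float, novelty: float, track_record: float) -> float:
    """Confidence an agent needs before acting on its own.

    All inputs are assumed to be normalized to [0, 1]. Higher stakes and
    novelty raise the bar; a stronger track record lowers it. The weights
    are illustrative, not calibrated.
    """
    threshold = 0.60 + 0.25 * stakes + 0.20 * novelty - 0.15 * track_record
    return min(max(threshold, 0.50), 0.99)


def route(confidence: float, stakes: float, novelty: float, track_record: float) -> str:
    """Return 'act', 'gather_info', or 'escalate' for a pending decision."""
    needed = required_confidence(stakes, novelty, track_record)
    if confidence >= needed:
        return "act"
    if confidence >= needed - 0.15:
        return "gather_info"
    return "escalate"


def deviates_from_baseline(history: list[float], value: float, z_limit: float = 3.0) -> bool:
    """Flag a value more than z_limit standard deviations from the baseline."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    spread = stdev(history)
    if spread == 0:
        return value != history[0]
    return abs(value - mean(history)) / spread > z_limit
```

Under these assumed weights, a routine reorder (low stakes, no novelty, strong track record) clears the bar with moderate confidence, while the same confidence on a new supplier in an unfamiliar market falls short and is routed to information gathering or escalation.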

Layer 4: Contextual Governance

Contextual governance brings organizational context into agent decision-making. This is the layer that addresses the procurement scenario I described earlier, where the action is authorized and compliant but does not account for broader business conditions.

Contextual governance requires that agents have access to (or can query) real-time organizational context: current budget status, strategic priorities, risk posture, related decisions being made elsewhere in the organization, and historical outcomes from similar decisions. It also requires a policy engine that can evaluate this context against organizational rules and preferences, not as a simple lookup but as a dynamic assessment that weighs multiple factors.
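A minimal sketch of such an assessment, in Python, might look like the following. The `OrgContext` fields mirror the questions from the procurement scenario. The weights and cut-offs are placeholders I have assumed for illustration, and a real policy engine would be considerably richer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OrgContext:
    """Snapshot of organizational state (all fields are hypothetical)."""
    cost_reduction_mandate: bool
    supplier_on_watchlist: bool
    dept_budget_remaining: float
    competing_orders: int
    prior_quality_issues: int


def contextual_risk(ctx: OrgContext, extra_cost: float) -> float:
    """Combine several context signals into one score in [0, 1].

    The weights are placeholders that a governance team would set and tune.
    """
    score = 0.0
    if ctx.cost_reduction_mandate:
        score += 0.30
    if ctx.supplier_on_watchlist:
        score += 0.25
    if extra_cost > ctx.dept_budget_remaining:
        score += 0.25
    if ctx.competing_orders > 0:
        score += 0.10
    if ctx.prior_quality_issues > 0:
        score += 0.10
    return min(score, 1.0)


def evaluate(ctx: OrgContext, extra_cost: float) -> str:
    """Map the score to an outcome: proceed, seek review, or hold."""
    risk = contextual_risk(ctx, extra_cost)
    if risk < 0.25:
        return "proceed"
    if risk < 0.50:
        return "review"
    return "hold"
```

The point of the sketch is the shape of the decision: several signals are weighed together, so no single rule lookup determines the outcome.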

This is where governance connects to the architecture I described in the first article of this series. The Context and Memory vertical service in the Future Enterprise framework exists precisely to make this kind of contextual governance possible. Without a shared context layer that agents can query, each agent makes decisions in isolation, unaware of the broader organizational state. Contextual governance is the mechanism that turns a collection of independent agents into a coordinated organizational capability.

The California Management Review recently published research on the "Agentic Operating Model" that describes a similar concept, calling it the "Coordination Layer" that ensures agents do not make conflicting decisions or duplicate effort. Whether you call it contextual governance or coordination, the core requirement is the same: agents need organizational awareness, not just task awareness.

Bounded Autonomy: The Graduated Authority Model

One of the most practical governance patterns emerging in enterprise deployments is graduated authority, sometimes called "bounded autonomy." Rather than giving agents binary access (can do/cannot do), organizations define a spectrum of authority levels based on risk, stakes, and the agent's demonstrated reliability.

A typical graduated authority model has three to four tiers. In the first tier, the agent can act autonomously on routine, low-risk decisions within well-established parameters. In the second tier, the agent can act but must notify a human of the action taken. In the third tier, the agent can recommend an action but must wait for human approval before executing. In the fourth tier, the agent must defer to a human entirely, providing analysis and options but making no recommendation.

The boundaries between tiers are not static. They can shift based on the agent's track record (an agent with a strong history of good decisions in a domain earns more autonomy), the organizational context (during a cost-reduction quarter, spending thresholds for autonomous action might decrease), and external conditions (during a supply chain disruption, procurement agents might have their autonomy expanded to enable faster response).

This model mirrors how organizations already manage human authority. New employees start with limited discretion and earn more as they demonstrate competence. Agents should work the same way.
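The four tiers and their shifting boundaries can be sketched in a few lines of Python. The monetary ceilings, the scaling factors, and the assumption that an agent's track record is summarized as a number between 0 and 1 are all hypothetical. They show the mechanism and are not recommended values.

```python
from enum import IntEnum


class Tier(IntEnum):
    AUTONOMOUS = 1      # act without notice
    ACT_AND_NOTIFY = 2  # act, then tell a human
    RECOMMEND = 3       # propose, wait for approval
    DEFER = 4           # supply analysis only


# Hypothetical base ceilings, in currency units, for a procurement agent.
BASE_LIMITS = {
    Tier.AUTONOMOUS: 5_000,
    Tier.ACT_AND_NOTIFY: 25_000,
    Tier.RECOMMEND: 100_000,
}


def tier_for(amount: float, track_record: float = 0.5,
             cost_reduction: bool = False, disruption: bool = False) -> Tier:
    """Pick the authority tier for a decision of a given size.

    track_record is assumed to lie in [0, 1]; 0.5 leaves limits unchanged.
    """
    scale = 0.5 + track_record  # 0.5x to 1.5x of the base ceilings
    if cost_reduction:
        scale *= 0.5            # tighten during a cost-reduction quarter
    if disruption:
        scale *= 2.0            # loosen during a supply chain disruption
    for tier in (Tier.AUTONOMOUS, Tier.ACT_AND_NOTIFY, Tier.RECOMMEND):
        if amount <= BASE_LIMITS[tier] * scale:
            return tier
    return Tier.DEFER
```

With these assumed numbers, a 4,000 purchase is autonomous in a normal quarter but drops to act-and-notify under a cost-reduction mandate, because the autonomous ceiling halves from 5,000 to 2,500.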

Layer 5: Accountability Governance

The final layer connects agent actions to organizational responsibility. As I discussed in the previous article on agentic identity, accountability requires more than logging. It requires a binding chain that connects every agent action to the authorization that permitted it, the delegation that initiated it, the policy that governed it, and the human or organizational entity that is ultimately responsible.

Accountability governance addresses several specific requirements:

Decision provenance. For every significant agent action, the governance layer must capture not just what the agent did, but why it did it: what inputs it considered, what alternatives it evaluated, what confidence level it had, and what policies it applied. This provenance record must be tamper-evident and stored at a granularity that satisfies both internal audit requirements and external regulatory scrutiny.

Delegation chain tracking. When Agent A delegates a task to Agent B, and Agent B invokes a tool that triggers Agent C, the accountability governance layer must maintain the full chain. If Agent C produces an incorrect result, the organization needs to trace back through the delegation chain to understand where the error originated and who had oversight responsibility at each step.

Outcome attribution. When an agent's decision produces a measurable business outcome (positive or negative), the governance layer needs to attribute that outcome to the agent, the policy that governed it, and the human who authorized the agent's operation. This attribution feeds back into the behavioral governance layer, informing future confidence thresholds and authority levels.

Regulatory evidence. With California's AB 316 foreclosing the "AI did it" defense and the EU's Product Liability Directive classifying AI as a product subject to strict liability, accountability governance is not optional. The governance layer must produce evidence that meets legal standards of proof, not just internal reporting standards.
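One common way to make a provenance record tamper-evident is a hash chain, in which each entry includes the hash of the entry before it. The Python sketch below uses only the standard library. The `ProvenanceLog` class, its field names, and the simplification that each agent appears once in the log are my assumptions for illustration, and a hash chain is only one of several techniques that could meet the requirement.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class ProvenanceLog:
    """Append-only log in which each entry commits to the one before it."""
    entries: list[dict] = field(default_factory=list)

    @staticmethod
    def _digest(body: dict) -> str:
        # Canonical JSON so the same content always hashes the same way.
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def record(self, agent: str, action: str, delegated_by: str,
               policy: str, confidence: float, inputs: dict) -> dict:
        body = {
            "agent": agent,
            "action": action,
            "delegated_by": delegated_by,  # previous link in the delegation chain
            "policy": policy,
            "confidence": confidence,
            "inputs": inputs,
            "prev_hash": self.entries[-1]["hash"] if self.entries else "",
        }
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry fails."""
        prev = ""
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True

    def delegation_chain(self, agent: str) -> list[str]:
        """Walk 'delegated_by' links back from an agent to the originator."""
        parent = {e["agent"]: e["delegated_by"] for e in self.entries}
        chain = [agent]
        while chain[-1] in parent and parent[chain[-1]] not in chain:
            chain.append(parent[chain[-1]])
        return chain
```

A chain like this detects after-the-fact edits only if the most recent hash is also anchored somewhere the editor cannot reach, such as a separate system or a signed checkpoint. Whether the result meets a legal standard of proof is a question for counsel, not for the data structure.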

Design Principles for Agentic Governance

Building these five layers is a significant undertaking. Based on the analysis above and the patterns emerging across early enterprise deployments, I see six design principles that should guide the architecture:

Governance as infrastructure, not afterthought. Governance cannot be a feature bolted onto agent platforms after deployment. It needs to be an architectural layer that agents are designed to operate within from the start. This means governance APIs that agents call before taking actions, governance context that agents factor into decisions, and governance policies that are machine-readable and enforceable at runtime. The pattern is analogous to how modern applications are built with security as a design principle, not a retrofit.

Continuous, not periodic. Traditional governance operates on review cycles: quarterly audits, annual compliance assessments, periodic policy reviews. Agentic governance must be continuous. Agents make decisions in milliseconds, and governance evaluation needs to happen at the same speed. This does not mean every action requires a full governance assessment, but it does mean the governance layer must be able to evaluate risk and context in real time and intervene when necessary.

Adaptive, not static. Static governance rules cannot keep pace with the dynamic environments agents operate in. The governance architecture needs to adapt: adjusting authority levels based on agent performance, modifying thresholds based on organizational context, and evolving policies as the organization learns from agent outcomes. This requires a feedback loop between accountability governance (which captures outcomes) and behavioral governance (which sets parameters).

Cross-layer, not siloed. Governance must span the full Future Enterprise architecture. An agent's governance posture in the Enterprise Platform (where it accesses data) must be consistent with its governance posture in the Agentic Platform (where it reasons and acts) and the Collaboration layer (where it interacts with humans and other agents). Siloed governance, where each platform layer has its own governance framework, creates gaps at the boundaries.

Observable, not opaque. Every governance decision (and every governance failure) must be visible to the humans responsible for the agent's operation. This includes real-time dashboards showing agent activity and governance interventions, alerting systems for policy violations and behavioral anomalies, and reporting tools that give executives, auditors, and regulators the visibility they need. If you cannot see how your agents are being governed, you are not governing them.

Federated, not centralized. Large enterprises will not have a single governance authority for all agents. Business units, geographies, and functional domains will have different governance requirements. The governance architecture needs to support federation: a shared framework with common standards that allows distributed governance decisions. This mirrors how enterprises manage human governance today, with corporate policies that set the floor and business unit policies that add specificity.
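The "corporate floor plus business unit specificity" idea can be illustrated with a small policy merge in Python. The policy keys and the rule that a unit may tighten a control but never loosen it are assumptions I am making for the sketch. Real federated policy models will need more nuance, including explicit exceptions.

```python
# Hypothetical corporate baseline; keys and values are illustrative.
CORPORATE_FLOOR = {"max_autonomous_spend": 10_000, "require_provenance": True}


def effective_policy(corporate: dict, unit: dict) -> dict:
    """Merge a business-unit policy onto the corporate floor.

    Units may tighten a control but never loosen it: numeric ceilings take the
    lower value, and boolean requirements stay on once corporate sets them.
    Keys that only the unit defines are added as unit-specific rules.
    """
    merged = dict(corporate)
    for key, value in unit.items():
        if key not in corporate:
            merged[key] = value
        elif isinstance(corporate[key], bool):  # check bool before numbers
            merged[key] = corporate[key] or bool(value)
        else:
            merged[key] = min(corporate[key], value)
    return merged
```

For example, a unit that sets `max_autonomous_spend` to 2,000 gets the tighter limit, while a unit that tries to raise it to 50,000 is held at the corporate ceiling of 10,000.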

The Organizational Dimension

Architecture alone is not enough. Agentic governance also requires organizational structures and processes that most enterprises have not yet created.

Agent oversight roles. Someone needs to be responsible for how agents operate within each business function. This is not the IT security team (they own access governance) and it is not the compliance team (they own compliance governance). Behavioral and contextual governance require business domain expertise. The emerging pattern is an "agent operations" function, sometimes embedded within business units and sometimes centralized, that owns the governance of agent behavior and decision quality.

Governance policy development. Machine-readable governance policies do not write themselves. Organizations need processes for translating business intent, risk tolerance, and organizational values into policies that agents can evaluate at runtime. This requires collaboration between business leaders (who define the intent), governance specialists (who translate intent into policy), and technical teams (who encode policies in machine-readable formats).

Incident response for agent failures. When an agent makes a bad decision, the organization needs a response process that goes beyond traditional IT incident management. Agent incidents require tracing the decision provenance, understanding the governance context at the time of the decision, determining whether the failure was a policy gap (the governance rules were incomplete), a behavioral drift (the agent operated outside its baseline), or a contextual failure (the agent lacked organizational awareness). Each root cause requires a different remediation.

Continuous learning loops. The most effective agentic governance architectures will not be the most rigid. They will be the ones that learn fastest. Every agent decision, every governance intervention, and every outcome feeds data back into the governance system. Over time, this data improves confidence thresholds, refines behavioral baselines, and sharpens contextual policies. Organizations that build these learning loops into their governance architecture will see their agent populations become more reliable and more autonomous over time. Organizations that rely on static rules will see their governance become increasingly brittle.

Getting Started: A Pragmatic Approach

Building a five-layer governance architecture from scratch is not realistic for most enterprises. Here is a pragmatic sequence:

Phase 1: Close the visibility gap. Before you can govern agents, you need to see them. The immediate priority for most enterprises is a complete inventory: how many agents are deployed, what authority do they have, what data can they access, and what decisions are they making. Research suggests that only about a quarter of organizations have full visibility into their agent landscape. Start there.

Phase 2: Implement graduated authority. Move from binary access controls to the bounded autonomy model. Classify agent decisions by risk level and implement the tiered authority model described in the "Bounded Autonomy" sidebar above. This gives you a practical framework for expanding agent autonomy safely as you build out the governance layers.

Phase 3: Build behavioral monitoring. Deploy the infrastructure to establish behavioral baselines and detect drift. This does not require a complete behavioral governance layer on day one. Start with the highest-risk agents and the highest-stakes decisions. Expand coverage as you learn what deviations matter.

Phase 4: Add organizational context. Connect your agents to the context they need: budget data, strategic priorities, risk signals, and the decisions being made by other agents. This is the hardest layer to build because it requires integration across organizational silos, but it is also where the governance architecture starts to produce real business value beyond risk mitigation.

Phase 5: Close the accountability loop. Implement the full provenance and attribution chain. This is where governance meets the regulatory requirements that are rapidly taking shape, with the EU AI Act's full enforcement for high-risk systems coming in August 2026 and California's AB 316 already in effect.

The enterprise software industry is at an inflection point. Agents are moving from experimental pilots to production workloads, and the governance infrastructure has not kept pace. The organizations that invest in purpose-built agentic governance now will have a structural advantage: their agents will be more reliable, more trusted, and more capable of taking on high-value work. The organizations that treat governance as a compliance checkbox will find their agent deployments stuck at low autonomy levels, unable to deliver the business value that justified the investment in the first place.

Security and compliance are necessary foundations. But they are the floor, not the ceiling. What enterprises need is governance that can exercise judgment at machine speed, adapt to changing context, and maintain accountability across increasingly complex agent ecosystems. Building that governance architecture is not glamorous work. It is, however, the work that will determine whether the agentic enterprise is trustworthy enough to scale.

Next in the series: "The Pricing Paradox," which examines how AI agents are breaking enterprise software pricing models, why value-based pricing fails in practice, and what consumption-based alternatives are emerging.