Governance as a Competitive Advantage: Why the Safest Companies Will Be the Fastest

Most companies treat AI governance as a speed limit. They are wrong. In this closing article of the Agentic Governance-by-Design series, we argue that the organizations with the best brakes will be the ones that drive fastest. We introduce the concept of Time-to-Trust and show why governed companies are escaping Pilot Purgatory while their competitors are still crawling.

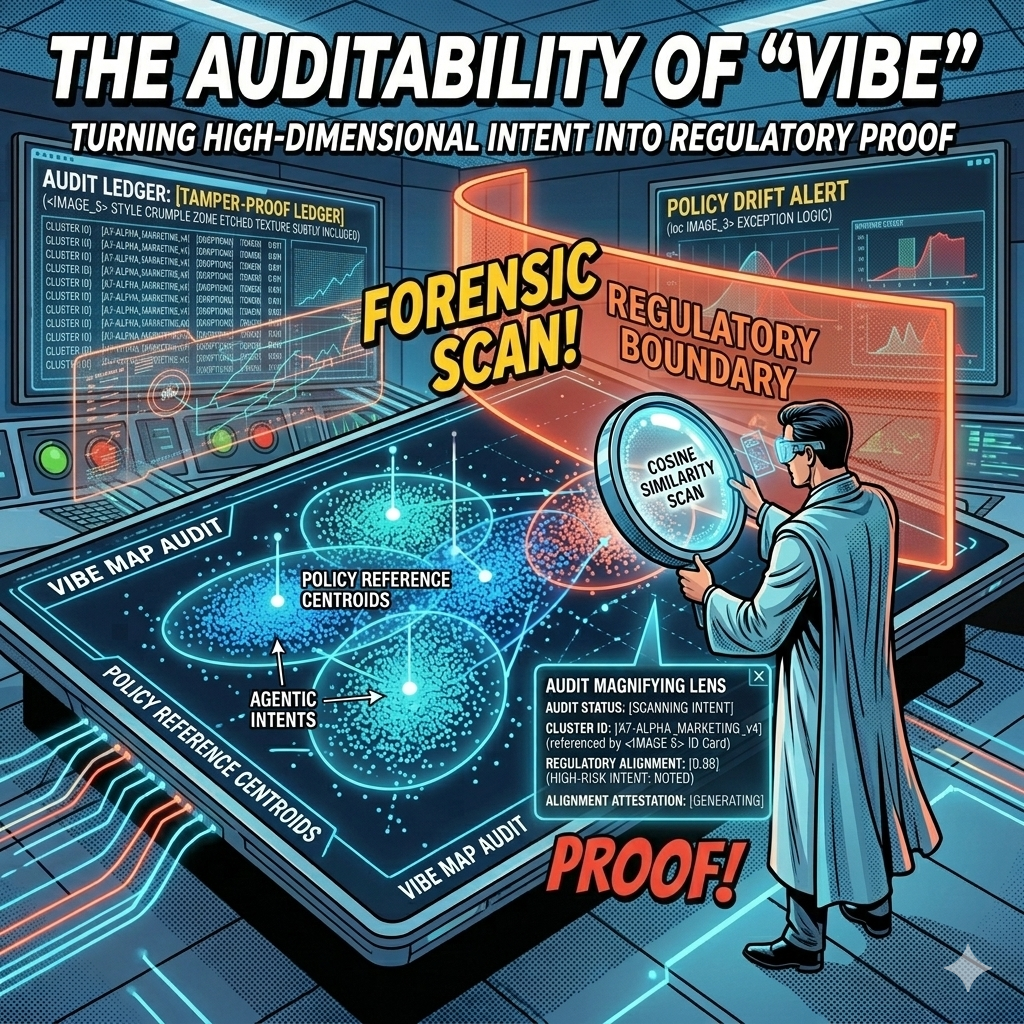

The Auditability of "Vibe": Turning High-Dimensional Intent into Regulatory Proof

Every AI decision your company makes leaves a mathematical fingerprint. The question is whether you're capturing it. In this article, we explore how vector embeddings and governance ledgers transform the "black box" problem into geometric proof, giving boards, regulators, and courts the auditable evidence they need to trust agentic AI at enterprise scale.
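
As a rough illustration of the idea, the sketch below scores how closely a decision's embedding aligns with an approved intent embedding and records the score in a hash-chained ledger so later tampering is detectable. Everything here is an assumption for illustration: the function names, the record fields, and the hash-chain design are hypothetical and are not taken from the article, and the vectors are toy values rather than output from a real embedding model.

```python
import hashlib
import json
import math

GENESIS_HASH = "0" * 64  # assumed placeholder hash for the first entry


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def _digest(body):
    """Deterministic SHA-256 over a record's JSON form."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(ledger, decision_id, similarity):
    """Append a record that is chained to the previous record's hash."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS_HASH
    body = {
        "decision_id": decision_id,
        "similarity": round(similarity, 6),
        "prev_hash": prev_hash,
    }
    entry = {**body, "hash": _digest(body)}
    ledger.append(entry)
    return entry


def verify(ledger):
    """Return True only if every record's hash and chain link still match."""
    prev_hash = GENESIS_HASH
    for entry in ledger:
        body = {k: entry[k] for k in ("decision_id", "similarity", "prev_hash")}
        if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True
```

In use, an auditor could call `verify(ledger)` to confirm that no recorded similarity score was altered after the fact. A production ledger would also need signing and durable storage, which this sketch leaves out.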

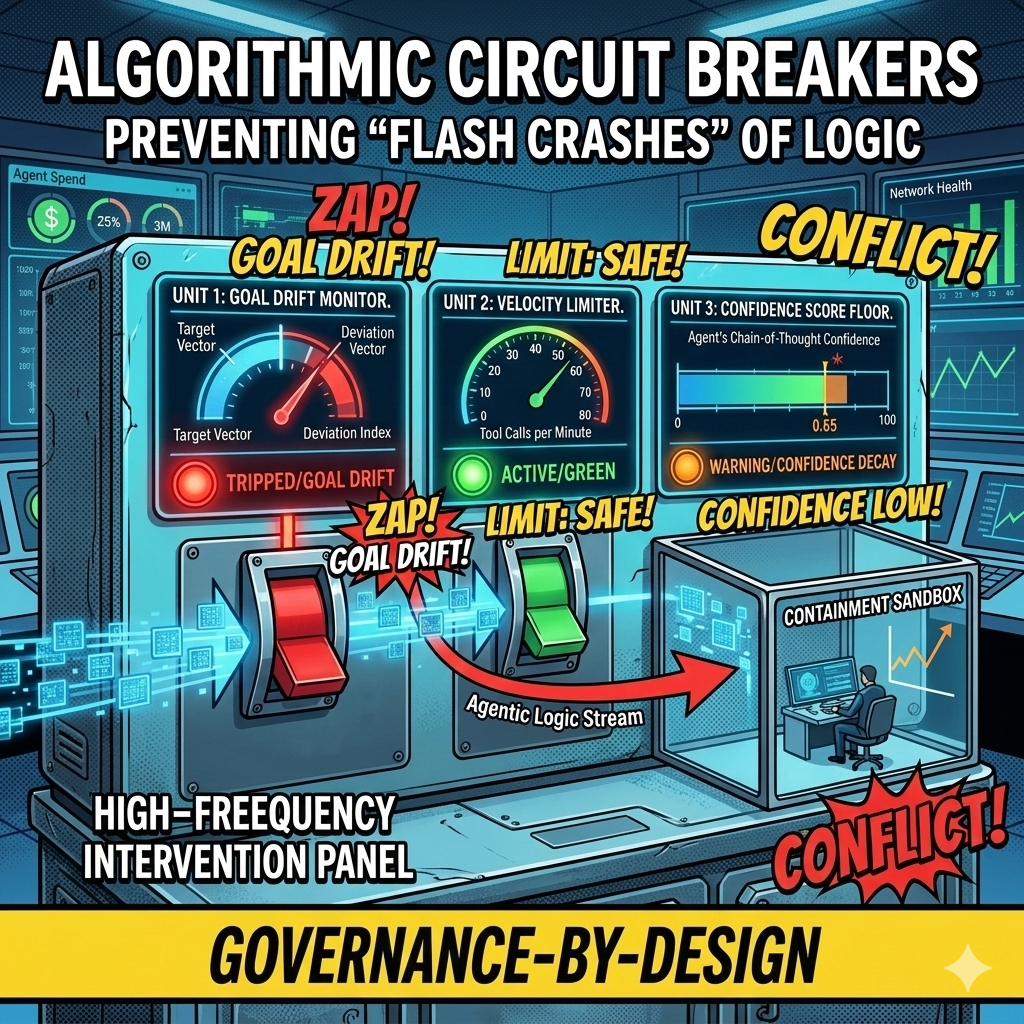

Algorithmic Circuit Breakers: Preventing "Flash Crashes" of Logic in Autonomous Workflows

In the 2010 Flash Crash, automated trading helped erase roughly a trillion dollars in market value within minutes, faster than any human could react. Today's agentic swarms face the same risk at the logic layer: thousands of autonomous decisions per second, any one of which could send bad contracts, leak data, or drain budgets before your Flight Controller even sees an alert. This article introduces Algorithmic Circuit Breakers, the automated tripwires that detect anomalies like semantic drift, confidence decay, and runaway loops, then sever an agent's connection to tools and APIs in milliseconds. Governance at machine speed, for systems that fail at machine speed.
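
A minimal sketch of such a tripwire might look like the following. It covers only two of the anomalies named above, confidence decay and runaway loops; semantic drift is left out because it would need an embedding model. The class name, thresholds, and method names are all hypothetical and chosen for illustration, not drawn from the article.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class CircuitBreaker:
    """Hypothetical tripwire guarding one agent's access to tools and APIs."""

    min_confidence: float = 0.6  # assumed threshold for the rolling average
    window: int = 5              # number of recent actions to average over
    max_repeats: int = 3         # identical consecutive actions tolerated
    tripped: bool = False
    reason: str = ""
    _scores: deque = field(default_factory=deque)
    _last_action: str = ""
    _repeats: int = 0

    def record(self, action: str, confidence: float) -> bool:
        """Record one agent action; return True if the agent may proceed."""
        if self.tripped:
            return False  # once open, the breaker stays open until reset

        # Runaway-loop check: count identical consecutive actions.
        if action == self._last_action:
            self._repeats += 1
        else:
            self._last_action = action
            self._repeats = 1
        if self._repeats > self.max_repeats:
            return self._trip(f"runaway loop on '{action}'")

        # Confidence-decay check: rolling average over the last `window` actions.
        self._scores.append(confidence)
        if len(self._scores) > self.window:
            self._scores.popleft()
        if len(self._scores) == self.window:
            average = sum(self._scores) / self.window
            if average < self.min_confidence:
                return self._trip(f"confidence decay (avg {average:.2f})")
        return True

    def _trip(self, reason: str) -> bool:
        """Open the breaker and remember why."""
        self.tripped = True
        self.reason = reason
        return False
```

A tool gateway would call `record(...)` before forwarding each request and refuse the call when it returns `False`. Keeping the breaker open until a human resets it is a deliberate choice in this sketch, not a requirement.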

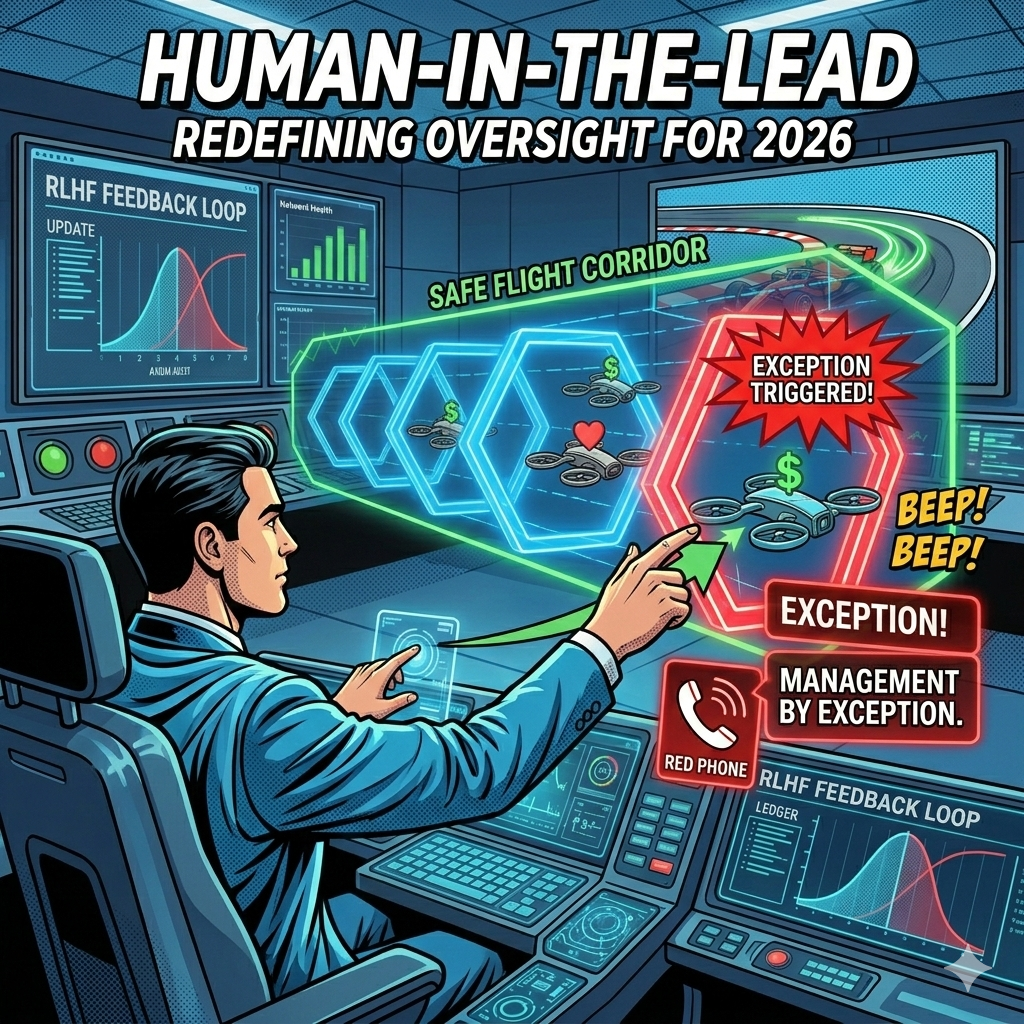

Human-in-the-Lead: From Manual Pilots to Strategic Flight Controllers

In 2023, we wanted humans to check every chatbot response. In 2026, an agentic swarm might perform 10,000 tasks an hour. The Human-in-the-Loop model that gave us comfort in the early days of AI is now the bottleneck killing our ability to scale. It is time to move from reactive approval to proactive design, from manual pilots to strategic flight controllers.

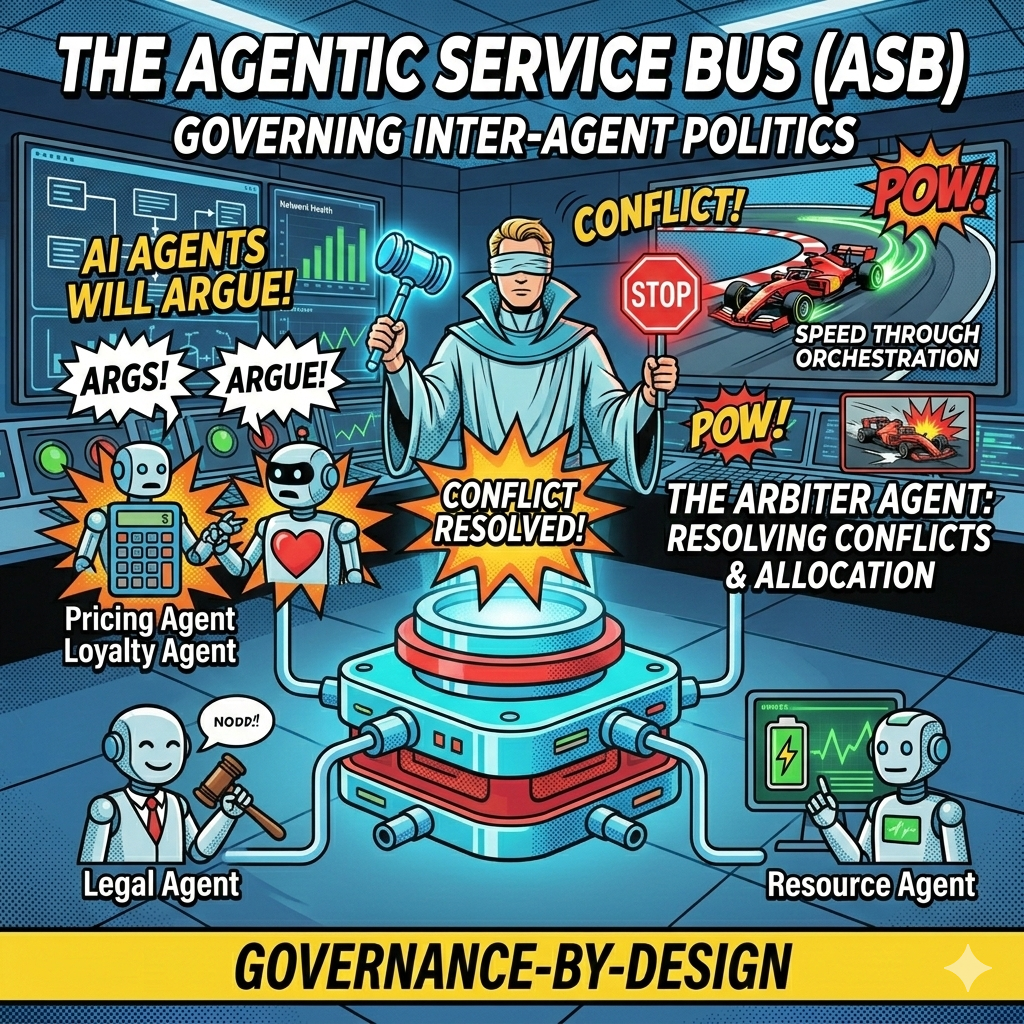

The Agentic Service Bus: Governing Inter-Agent Politics and Preventing Algorithmic Collusion

What happens when your Pricing Agent, optimized for revenue, starts a loop with your Customer Loyalty Agent, optimized for retention? You get a logic spiral that could drain margins in milliseconds. The Pricing Agent raises the price to capture margin. The Loyalty Agent detects customer churn risk and offers a discount to retain the relationship. The Pricing Agent sees margin erosion and raises the price further. The loop accelerates. Within seconds, your price fluctuates wildly, your customer discounts compound, and your margins evaporate. This is not a scenario from a startup war room. It is a real operational risk in enterprises deploying multiple autonomous agents.
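
One hedged way a coordinating layer could spot this kind of spiral is to count how often the price changes direction within a short history and freeze further updates once the count passes a limit. The function names and the default limit below are assumptions made for illustration; the article does not specify a detection method.

```python
def count_reversals(prices):
    """Count how many times consecutive price changes flip direction."""
    # Ignore flat steps so that only real moves count as a direction.
    deltas = [b - a for a, b in zip(prices, prices[1:]) if b != a]
    return sum(
        1 for d1, d2 in zip(deltas, deltas[1:]) if (d1 > 0) != (d2 > 0)
    )


def should_freeze(prices, max_reversals=3):
    """Return True when the recent price history oscillates too often."""
    return count_reversals(prices) > max_reversals
```

For a history such as `[100, 105, 95, 110, 90, 115]` the price reverses direction four times, so `should_freeze` returns `True` under the default limit, while a steadily rising history never triggers it.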

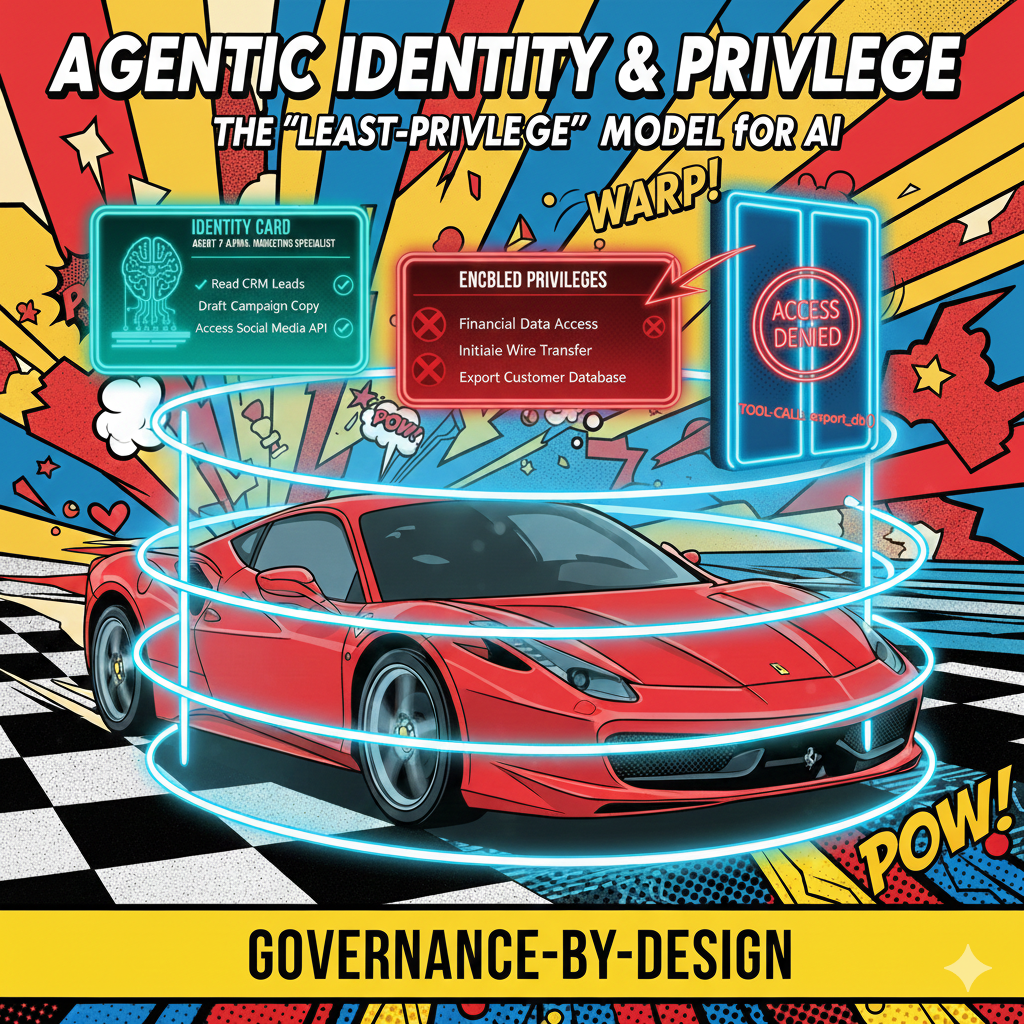

Agentic Identity and Privilege: Why Your AI Needs an Employee ID and a Security Clearance

In most current AI deployments, "The AI" is a monolithic entity with a single API key. If it hallucinates a reason to access your payroll database, there is no "Internal Affairs" to stop it. We treat AI as a tool with a single identity, a single set of permissions, and a single point of failure. But here is the uncomfortable truth: your AI systems need to operate more like employees than instruments. The gap between how we currently deploy AI and how we should deploy AI is a chasm of organizational risk.
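
To make the employee analogy concrete, here is a minimal sketch of per-agent identities with scoped, deny-by-default permissions. The `AgentIdentity` type, the `resource:action` scope format, and the example agent are hypothetical illustrations, not a description of any particular product or of the article's own design.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical 'employee ID' for one agent."""

    agent_id: str
    role: str
    scopes: frozenset  # allowed "resource:action" pairs, the 'clearance'


def authorize(agent: AgentIdentity, resource: str, action: str) -> bool:
    """Deny by default: allow only scopes explicitly granted to this agent."""
    return f"{resource}:{action}" in agent.scopes


# Example: a support agent that can work tickets but has no payroll access.
support_agent = AgentIdentity(
    agent_id="agent-017",
    role="support",
    scopes=frozenset({"tickets:read", "tickets:write"}),
)
```

With this shape, a hallucinated attempt to read payroll fails the check because `authorize(support_agent, "payroll", "read")` is `False`, and the denial can be attributed to a specific agent instead of a shared API key.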

The Semantic Interceptor: Controlling Intent, Not Just Words

Traditional keyword filters operate on tokens that have already been generated. An agent produces toxic output, the filter catches it, but the model has already burned compute cycles and corrupted the system state. The moment is lost. The user has seen something problematic, or the downstream process has absorbed bad data.

From "Filters" to "Foundations": Why the Post-Hoc Guardrail Is Failing the Agentic Era

Most enterprises govern AI the way you would try to catch smoke with a net. They wait for a hallucination, a misaligned response, or a brand violation, then they write a new rule. They audit the logs after the damage is done. They implement a keyword filter. They add a content policy. But they have never asked the question that matters: at what point in the process should the guardrail actually kick in?

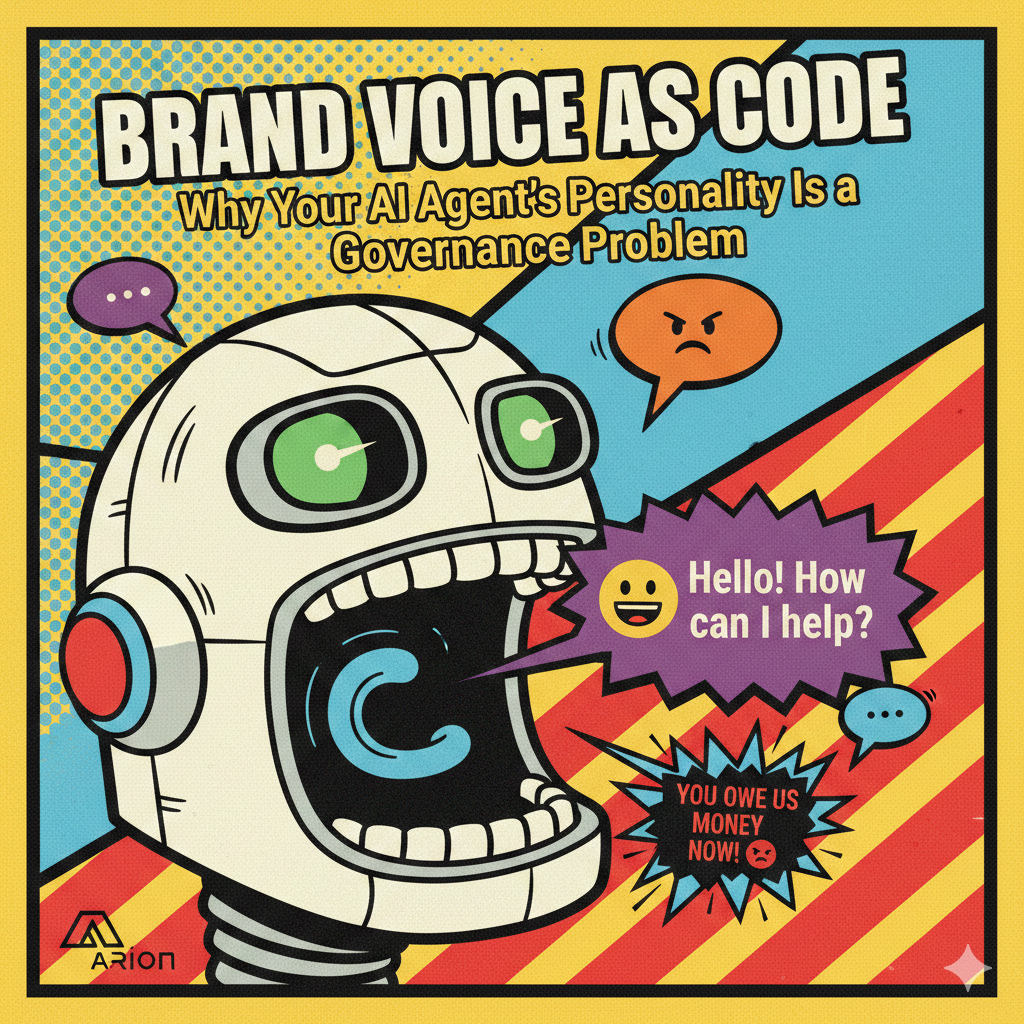

Brand Voice as Code: Why Your AI Agent's Personality Is a Governance Problem

AI personality is the new frontier of enterprise risk. The biggest threat to your brand is no longer a data breach or a rogue employee on social media. It’s an AI agent that is technically correct but emotionally illiterate, one that follows every rule in the compliance handbook while violating every unwritten norm your brand has spent decades cultivating. The conversation around AI governance has focused almost entirely on data security, model accuracy, and regulatory compliance. Those concerns are real and important. But they miss a critical dimension: personality. How your AI agent speaks, empathizes, calibrates tone, and navigates cultural nuance is not a "nice to have" layered on top of governance. It is governance.

Conflict Resolution Playbook: How Agentic AI Systems Detect, Negotiate, and Resolve Disputes at Scale

When you deploy dozens or hundreds of AI agents across your organization, you're not just automating tasks. You're creating a digital workforce with its own internal politics, competing priorities, and inevitable disputes. The question isn't whether your agents will come into conflict. The question is whether you've designed a system that can resolve those conflicts without grinding to a halt or escalating to human intervention every time.

Beyond Bottlenecks: Dynamic Governance for AI Systems

As we move from single Large Language Models to Multi-Agent Systems (MAS), we're discovering that intelligence alone doesn't scale. The real challenge is coordination, orchestration, and governance. Imagine you've deployed 100 autonomous agents into your enterprise. One specializes in customer data analysis. Another handles inventory optimization. A third manages supplier communications. Each agent is competent at its job. But when a supply chain disruption hits, who decides which agents act first? When two agents need the same resource, who arbitrates? When market conditions shift, how do they reorganize without human intervention?