Building the Agentic Enterprise, Part 3: Know Where You Stand; The Dual Maturity Framework

This is the third article in an 11-part series exploring what it takes to build an enterprise that runs on AI agents, not just AI tools. Each article examines a critical dimension of the journey and includes a "What It Takes" section with practical guidance for leaders navigating this transition.

---

The Problem with One-Dimensional Thinking

Most organizations approach agentic AI by asking a single question: what can the technology do? They evaluate platforms, assess model capabilities, and explore use cases. These are reasonable starting points. But they are only half the picture.

The question that gets far less attention, and the one that determines whether an agentic AI initiative succeeds or stalls, is: what can our organization support?

Technology capability without organizational readiness leads to failed deployments, compliance risks, and eroded trust. Organizational readiness without matching technology ambition leads to missed opportunities, wasted investment, and a widening gap against competitors who are moving faster.

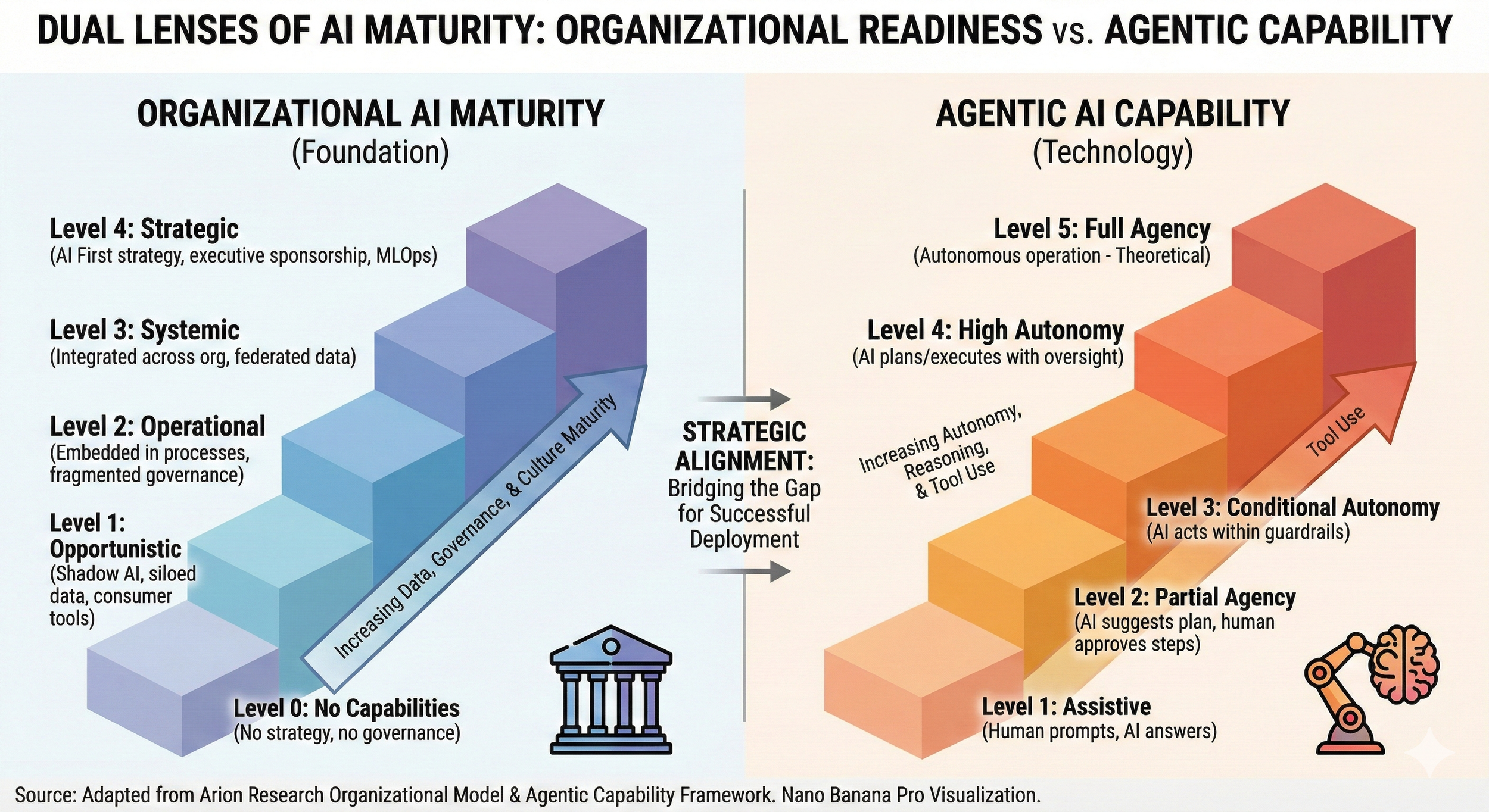

This is why a one-dimensional AI strategy fails. Whether you focus only on what the technology can do or only on what the organization needs, you end up with an incomplete picture and decisions that misfire. The Dual Maturity Framework, introduced in our research earlier this year, addresses this by mapping two dimensions simultaneously: how capable the AI is and how prepared the organization is to handle that capability. The alignment between these two dimensions is where strategy lives.

The First Dimension: Organizational AI Maturity

The first axis of the framework assesses your organization's readiness to support AI that acts with increasing independence. This is not a technology assessment. It evaluates your data infrastructure, governance structures, leadership engagement, workforce preparedness, and cultural adaptability.

We define five levels, starting from zero.

Level 0: No Capabilities. There is no formal AI strategy, no governance framework, and no coordinated approach to data management. Data sits in operational silos. There is no executive sponsorship and minimal AI literacy across the organization. This is the starting point for organizations that have not yet begun the journey, and it is where any autonomous deployment would be premature.

Level 1: Opportunistic. Individual teams are experimenting with AI tools on their own initiative, but there is no coordination, no formal policies, and no centralized oversight. This is the "shadow AI" stage that many organizations pass through. It produces localized wins but also ungoverned risk: tools making decisions with unvetted data, potential compliance exposures, and duplicated effort across teams that do not know what the others are doing.

Level 2: Operational. The organization has moved from ad hoc experimentation to deliberate deployment for defined purposes: summarization, routing, report generation, and similar productivity applications. Some governance is in place, but it may be fragmented across business units. Data quality has improved in the areas where AI is deployed, but an enterprise-wide data strategy is still incomplete. The organization can support AI that proposes and assists, but its infrastructure and policies are not yet mature enough for agents that operate across organizational boundaries.

Level 3: Systemic. This is a significant inflection point. AI is integrated across organizational boundaries, with agents operating in workflows that span multiple functions. This requires a federated data strategy, one that is governed consistently but accessible enterprise-wide. Governance is comprehensive, with clear policies on AI decision-making authority, escalation protocols, and monitoring. Cross-functional teams manage deployments, and the organization has invested in AI literacy at every level.

Level 4: Strategic. AI is a core component of how the organization designs work. Governance is embedded into the AI development lifecycle rather than applied as an afterthought. Executive sponsorship is active and informed. Data infrastructure provides real-time, enterprise-wide access with robust quality controls. The workforce is skilled in AI collaboration, and the culture embraces continuous adaptation. This organization is prepared for highly autonomous agents because the organizational scaffolding is already in place.

The Second Dimension: Agentic AI Capability

The second axis assesses how much autonomy the AI system exercises. In Part 2, we introduced the autonomy spectrum. Here, we connect each level to the organizational requirements it creates.

Level 1: Assistive. The AI responds to direct human prompts and provides single-turn outputs. A user asks a question and gets an answer. There is no autonomous action, no independent planning, and no persistent context between interactions. This is where most generative AI tools operate today, and the organizational requirements are relatively modest.

Level 2: Partial Agency. The AI can analyze a situation and propose a plan of action, but a human must approve every step before it proceeds. For example, an AI system might review a queue of support tickets, categorize them by urgency and complexity, and propose routing decisions, but a human confirms each one. The AI adds value through analysis and recommendation while the human retains decision authority at every stage.

Level 3: Conditional Autonomy. The AI operates independently within defined guardrails, executing tasks and making decisions on its own as long as conditions remain within established parameters. When something falls outside those boundaries, it escalates to a human. The organizational requirements increase significantly here: you need well-defined guardrails, robust escalation protocols, and monitoring systems that can verify the agent is staying within its boundaries.

Level 4: High Autonomy. The AI executes complex, multi-step workflows with minimal human intervention. It coordinates across systems, adapts its approach based on changing conditions, and handles exceptions within broad operational parameters. Human oversight shifts from real-time supervision to periodic audits and performance reviews. This level demands sophisticated monitoring infrastructure because humans are no longer watching in real time.

Level 5: Full Agency. The AI is capable of extended autonomous operation and self-directed goal-setting. This level is largely aspirational today. The governance, trust, and verification infrastructure needed to support full agency in enterprise environments is still developing. We include it to provide a complete picture of the spectrum and to help organizations plan for what is coming.

The Matching Matrix: Where Strategy Meets Reality

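The core value of the framework lies in the alignment between the two axes. The principle is straightforward: the autonomy level of your AI should not exceed the maturity level of your organization. The one exception sits at the bottom of the scale, where Level 1 (Assistive) AI is the floor even for a Level 0 organization, because prompted, single-turn tools take no autonomous action.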

An organization at Level 0 or Level 1 maturity should limit itself to Level 1 (Assistive) AI. Without governance, data infrastructure, or a coordinated strategy, the organization cannot safely support any autonomous action. AI should be limited to prompted, single-turn interactions.

An organization at Level 2 maturity can support Level 2 (Partial Agency) AI. There is enough governance and data quality for AI that proposes actions, but human approval is still required at each step. The governance infrastructure can handle review-and-approve workflows but not unsupervised execution.

An organization at Level 3 maturity can support Level 3 (Conditional Autonomy) AI. Cross-functional integration, federated data access, and comprehensive governance enable the definition and enforcement of guardrails. Escalation protocols are mature enough to handle boundary cases reliably.

An organization at Level 4 maturity can support Level 4 (High Autonomy) AI. Embedded governance, real-time monitoring, executive sponsorship, and enterprise-wide data infrastructure can support agents operating complex workflows with minimal oversight. Periodic audits replace real-time supervision.

Notice there is no recommended organizational pairing for Level 5 autonomy. Full agency requires trust, verification infrastructure, and governance sophistication that do not yet exist at scale in enterprise environments. That will change over time, but today, Level 5 sits in the planning horizon, not the deployment roadmap.
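For teams that want to encode these pairings in a planning tool, the matrix reduces to a small lookup table. The sketch below is one possible representation, not part of the framework itself; the names `MAX_AUTONOMY_BY_MATURITY`, `AUTONOMY_NAMES`, and `max_supported_autonomy` are illustrative assumptions.

```python
# Illustrative encoding of the Matching Matrix described above.
# All identifiers here are hypothetical, chosen for this sketch.

# Organizational maturity level -> highest AI autonomy level it can support.
MAX_AUTONOMY_BY_MATURITY = {
    0: 1,  # No Capabilities -> Assistive only
    1: 1,  # Opportunistic   -> Assistive only
    2: 2,  # Operational     -> Partial Agency
    3: 3,  # Systemic        -> Conditional Autonomy
    4: 4,  # Strategic       -> High Autonomy
}

AUTONOMY_NAMES = {
    1: "Assistive",
    2: "Partial Agency",
    3: "Conditional Autonomy",
    4: "High Autonomy",
    5: "Full Agency",  # no organizational pairing today
}


def max_supported_autonomy(maturity_level: int) -> int:
    """Return the highest autonomy level the matrix pairs with this maturity level."""
    if maturity_level not in MAX_AUTONOMY_BY_MATURITY:
        raise ValueError(f"maturity level must be 0-4, got {maturity_level}")
    return MAX_AUTONOMY_BY_MATURITY[maturity_level]


if __name__ == "__main__":
    for level in range(5):
        ceiling = max_supported_autonomy(level)
        print(f"Maturity {level}: up to Level {ceiling} ({AUTONOMY_NAMES[ceiling]})")
```

Level 5 appears in the name table but never as a value in the lookup, which mirrors the point that no maturity level is currently paired with full agency.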

This matrix is not theoretical. When organizations align their AI ambitions to their organizational readiness, deployments succeed more consistently, scale more smoothly, and build the confidence needed to advance further. When they do not, they run into one of two failure modes.

The Two Failure Modes

Overshooting: The High-Risk Zone. Overshooting happens when an organization deploys AI agents with autonomy levels that exceed its organizational maturity. The classic case is a Level 1 organization attempting to deploy Level 4 agents.

The consequences are predictable and painful. Agents operate without clear boundaries because no governance framework defines their decision authority. They work with incomplete or inconsistent information because there is no integrated data infrastructure. Problems compound before anyone detects them because there is no monitoring infrastructure to provide visibility.

The failures tend to be dramatic: compliance violations, customer-facing decisions made on bad data, cascading automated actions that no one can explain or reverse. And the damage extends beyond the immediate incident. Overshooting erodes trust, both internally and externally, and often triggers an overcorrection that shuts down AI initiatives entirely. We have seen organizations set their agentic AI efforts back by years because a premature deployment went wrong and leadership concluded the technology was not ready, when in truth it was the organization that was not ready.

Undershooting: The Lost-Value Zone. Undershooting is the opposite problem: a mature organization deploying AI well below what its infrastructure, governance, and culture can support. A Level 4 organization using only Level 1 assistive tools is leaving enormous value on the table.

This failure mode is particularly insidious because it does not produce visible crises. No one gets fired for undershooting. There are no compliance incidents, no public embarrassments, no dramatic failures. Instead, the damage shows up as a slow erosion of competitive position. The organization has invested in infrastructure, governance, and culture but is not capturing a return on that investment. Knowledge workers remain burdened with tasks that agents could handle. Competitors with similar maturity but more autonomous agents gain advantages in efficiency, speed, and scale.

By the time the gap becomes apparent, the window for catching up may have narrowed. Undershooting is the quiet failure, and it is just as costly as overshooting over time.
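The two failure modes can be expressed as a simple comparison between the autonomy you are deploying and the autonomy your maturity supports. The following is a minimal sketch under stated assumptions: the function name `classify_alignment` is hypothetical, and it treats any gap below the supported level as undershooting, which is stricter than the "well below" wording above.

```python
# Hypothetical helper that labels a deployment as aligned, overshooting,
# or undershooting. A simplification for illustration only.

def classify_alignment(maturity_level: int, autonomy_level: int) -> str:
    """Compare deployed autonomy (1-5) against organizational maturity (0-4)."""
    if not 0 <= maturity_level <= 4:
        raise ValueError("maturity_level must be between 0 and 4")
    if not 1 <= autonomy_level <= 5:
        raise ValueError("autonomy_level must be between 1 and 5")

    # Maturity 0 and 1 both support Level 1 (Assistive); otherwise the
    # supported autonomy level equals the maturity level.
    supported = max(1, maturity_level)

    if autonomy_level > supported:
        return "overshooting"
    if autonomy_level < supported:
        return "undershooting"
    return "aligned"


if __name__ == "__main__":
    # The two examples used in the text above.
    print(classify_alignment(maturity_level=1, autonomy_level=4))
    print(classify_alignment(maturity_level=4, autonomy_level=1))
```

Because no maturity level supports Level 5, any Level 5 deployment is classified as overshooting under this sketch.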

A Multi-Year Journey, Not a Single Decision

The Dual Maturity Framework is not a one-time assessment. It is a strategic planning tool for what will inevitably be a multi-year journey. Moving from Level 1 to Level 3 organizational maturity typically requires 18 to 36 months of sustained investment in data infrastructure, governance, workforce development, and cultural change.

The practical implication is that you should advance both dimensions in concert. As your data infrastructure improves, deploy agents that can use that data within your current governance boundaries. As your governance matures, expand the autonomy of your agents to match. Each step forward on one axis creates the conditions and the confidence to take the next step on the other.

This is where the framework becomes most valuable as a planning tool. Rather than asking "What agents should we deploy?" in isolation, you can ask: "Given where we stand on organizational maturity, what level of agent autonomy can we responsibly support today? And what do we need to build on the organizational side to support the next level?"

That second question turns the framework from a diagnostic into a roadmap. It makes visible the specific investments, in data, governance, workforce readiness, and process design, that are prerequisites for advancing your agentic capabilities. And it prevents the all-or-nothing thinking that stalls so many initiatives: you do not have to leap from where you are today to full autonomy. You can progress deliberately, building capability and confidence at each stage.

What It Takes: Honest Self-Assessment

The readiness question at the heart of this article spans all six dimensions of our Agentic AI Readiness Assessment: Strategic Alignment, Technical Infrastructure, Data Readiness, Process Maturity, Governance and Risk Management, and Workforce Readiness. Each of these dimensions maps to the organizational conditions that determine what level of AI autonomy your organization can support.

Here is what honest self-assessment requires in practice:

Know where you stand on each dimension. You need a clear-eyed view of your current state across all six areas. Where is your data infrastructure? How mature is your governance? How ready is your workforce? The answers will not be uniform. Most organizations are further along on some dimensions than others, and those gaps are important to identify because they define where to invest next.

Resist the temptation to overrate your readiness. This is harder than it sounds, especially in organizations where there is pressure to appear innovative or where leadership has publicly committed to AI transformation. The Dual Maturity Framework only helps if the self-assessment is honest. An inflated view of your organizational maturity leads directly to overshooting.

Look for the gaps between dimensions. An organization might have strong data infrastructure but weak governance, or excellent executive sponsorship but limited workforce readiness. These asymmetries matter. The dimension where you are weakest sets the ceiling for what level of agent autonomy you can safely support. Identifying the bottleneck dimension tells you where the highest-return investment lies.

Use the framework to build a sequenced plan. Once you know where you stand and where the gaps are, you can build a phased plan that advances both dimensions in coordination. Phase 1 might focus on closing your most critical readiness gap while deploying agents at the autonomy level your current maturity supports. Phase 2 expands autonomy as the organization catches up. This sequenced approach is more effective than trying to move everything forward at once.

Make assessment ongoing, not one-time. Both the technology landscape and your organizational capabilities are evolving. The alignment that is right today may not be right in six months. Build periodic reassessment into your operating rhythm so you can adjust as conditions change.
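The rule that your weakest dimension sets the ceiling is, in effect, a minimum taken across the six dimensions. The sketch below illustrates that idea. It assumes each dimension is scored on the same 0 to 4 scale as organizational maturity, which is an assumption of this example rather than something the assessment prescribes, and the scores shown are invented for illustration.

```python
# Hypothetical sketch of the "weakest dimension sets the ceiling" rule.
# The 0-4 scoring scale and the sample scores are assumptions.

DIMENSIONS = (
    "Strategic Alignment",
    "Technical Infrastructure",
    "Data Readiness",
    "Process Maturity",
    "Governance and Risk Management",
    "Workforce Readiness",
)


def find_bottleneck(scores: dict[str, int]) -> tuple[str, int]:
    """Return the lowest-scoring dimension and its score.

    Ties resolve to the dimension listed first in DIMENSIONS.
    """
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing scores for: {', '.join(missing)}")
    for name in DIMENSIONS:
        if not 0 <= scores[name] <= 4:
            raise ValueError(f"score for {name} must be between 0 and 4")
    weakest = min(DIMENSIONS, key=lambda d: scores[d])
    return weakest, scores[weakest]


if __name__ == "__main__":
    # Invented example: strong data, weak governance.
    sample_scores = {
        "Strategic Alignment": 3,
        "Technical Infrastructure": 3,
        "Data Readiness": 4,
        "Process Maturity": 2,
        "Governance and Risk Management": 1,
        "Workforce Readiness": 2,
    }
    dimension, ceiling = find_bottleneck(sample_scores)
    print(f"Bottleneck: {dimension} (effective maturity ceiling: {ceiling})")
```

Rerunning a calculation like this at each periodic reassessment shows whether the bottleneck has moved, which is a useful signal for where the next phase of investment should go.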

To help readers put this framework into practice, we are building a companion Dual Maturity Quick Diagnostic tool that will be available at arionresearch.com. The diagnostic is a brief self-assessment, roughly 10 questions, that plots your organization on the Matching Matrix and provides an initial reading of whether you are aligned, overshooting, or undershooting. It is a starting point, not a comprehensive evaluation. For organizations that want a deeper assessment, our full Agentic AI Readiness Assessment provides detailed evaluation across all six dimensions, and our AI Blueprint offering translates that assessment into a concrete implementation roadmap.

Up Next

In Part 4, we will shift from frameworks to use cases: where agents create real business value today. Organized by business function, from finance and operations to customer service and IT, Part 4 helps you identify the high-value opportunities in your own organization and understand what makes certain workflows better candidates for agentic AI than others. If you have been waiting for the practical "where do we start?" guidance, that is the next article.